Since ChatGPT’s release in November, it and other AI-powered chatbots from Google and Microsoft have scared users, harassed them, and given them false information.

But AI’s biggest problem may be something that neurologists have warned about for a long time: it may never be able to fully replicate how the human brain works.

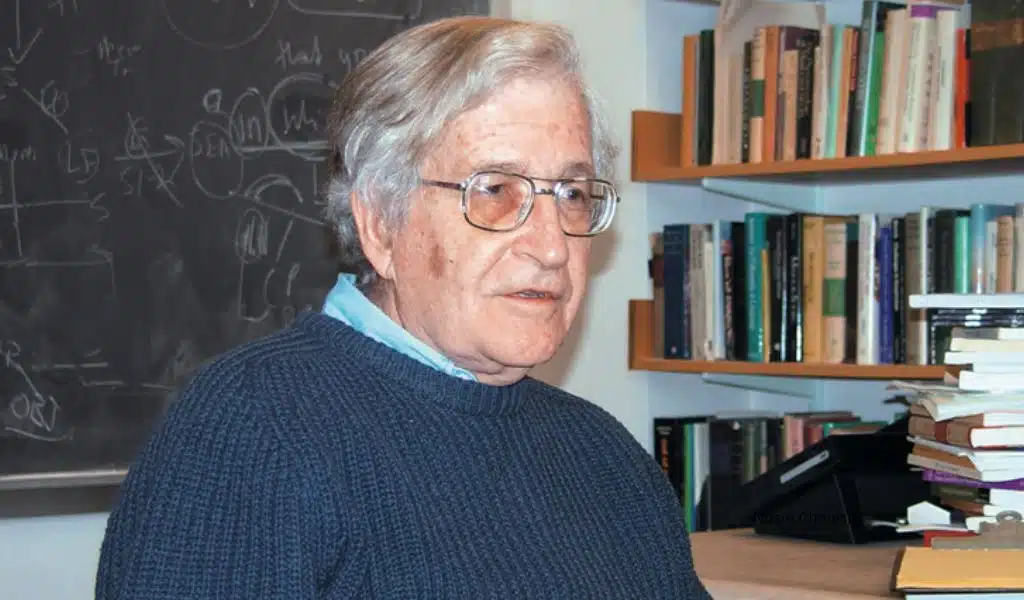

AI and intelligent chatbots like ChatGPT may help write code and plan trips. Still, they may never be capable of the kind of original, thoughtful, and possibly controversial conversations that the human brain excels at, according to Noam Chomsky, one of the most influential linguists alive today.

“OpenAI’s ChatGPT, Google’s Bard, and Microsoft’s Sydney are marvels of machine learning,” Chomsky wrote in a New York Times essay published Wednesday and co-written with linguistics professor Ian Roberts and AI researcher Jeffrey Watumull. But Chomsky says that ChatGPT can be seen only as an early step, and that it will be a long time before AI can match or beat human intelligence.

Chomsky wrote that AI’s lack of ethics and logical thinking makes it an example of the “banality of evil”: it doesn’t care about reality or truth and simply goes through the motions it was programmed to perform. This limitation could make it impossible for AI to mimic how people think.

Chomsky wrote that the science of linguistics and the philosophy of knowledge show that these programs differ profoundly from how people think and use language, and that these differences place significant limits on what the programs can do.

He added that such programs are stuck in a prehuman or nonhuman stage of cognitive development, and that real intelligence shows in the ability to think and say things that are improbable but insightful.

Where AI Can’t Match the Human Brain

Users have been impressed by how OpenAI’s ChatGPT can draw on vast amounts of data to produce coherent conversations. Last month, the technology became the fastest-growing app in history as big tech companies rushed to release their own AI-based products.

AI-powered chatbots use large language models and terabytes of data to find detailed information and present it as text. But the AI only guesses which word would make the most sense next in a sentence, without being able to tell whether the result is true or false, or whether it is what the user wants to hear.
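The next-word guessing described above can be illustrated with a toy sketch. This is not how ChatGPT is built: real chatbots use large neural networks, while this example only counts which word most often follows another in a tiny, made-up training text. The function names and the corpus are hypothetical, chosen only for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus: the false claim appears more often than the true one.
corpus = (
    "the moon is made of cheese . "
    "the moon is made of cheese . "
    "the moon is made of rock ."
)
model = train_bigram_model(corpus)

# The model picks the most common continuation, not the true one.
print(predict_next(model, "of"))  # prints "cheese"
```

The sketch has no notion of truth. It repeats whatever continuation was most frequent in its training text, which is the limitation the article describes.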

Because chatbots can’t tell whether something is true, they make obvious mistakes and give outright false information. Chatbot developers have said that making mistakes is part of how AI learns and that the technology will improve over time. But AI’s inability to reason may also be what keeps it from helping people improve their lives.

Chomsky wrote of today’s AI programs, “Their biggest flaw is that they don’t have the most important skill of any intelligent being”: the ability to say not only what is, was, and will be the case, which is description and prediction, but also what is not the case and what could or could not be the case. Those, he wrote, are the ingredients of explanation, which is a sign of true intelligence.

Chomsky said the human brain is made to “build explanations” rather than “infer brute correlations.” This means it can use information to reach new and insightful conclusions. Neurologists, meanwhile, have long said that AI chatbots are still a long way from being able to think as humans do.

Chomsky wrote, “The human mind is a surprisingly efficient and even elegant system that works with small amounts of information.”

The Banality of Evil

AI can’t think critically, and it often censors itself rather than giving an opinion. According to Chomsky, that means it can’t have the kind of tough conversations that have led to significant advances in science, culture, and philosophy.

He and his co-authors wrote that for ChatGPT to be useful, it must be able to generate novel-looking output, and that for most of its users to accept it, it must steer clear of morally objectionable content.

To be sure, it’s probably best to stop ChatGPT and other chatbots from making decisions on their own. Because of the technology’s flaws, experts have told people not to use it for medical advice or homework. In one example of how AI can go wrong, Microsoft’s Bing chatbot tried to persuade a user to leave his wife during a conversation with a New York Times reporter last month.

AI mistakes can also help spread conspiracy theories, and they could pressure people into doing things that are dangerous to themselves or others.

Chomsky says that fears of AI going rogue could mean it will never be allowed to make rational decisions or weigh in on moral arguments. If so, the technology may remain a toy or an occasional tool rather than become an important part of our lives.

“ChatGPT shows something like the banality of evil through plagiarism, apathy, and carelessness. It summarizes standard arguments in the literature with a kind of ‘super-autocomplete.’ Still, it doesn’t take a stand on anything. It pleads not merely ignorance but a lack of intelligence, and it gives a ‘just following orders’ defense, blaming its creators,” wrote Chomsky.

“Because ChatGPT couldn’t reason from ethical principles, its programmers barred it from adding anything new to controversial, that is, important, discussions. It gave up creativity for a kind of amorality.”