Every technology cycle has one phrase that gets dragged into meetings, pitch decks, investor calls, product pages, and LinkedIn posts until it stops meaning anything useful. In 2026, that phrase is “agentic AI.” Apparently, every product is now agentic. Your chatbot is agentic. Your workflow automation is agentic. Your dashboard with a “generate summary” button is agentic. Somewhere, someone probably renamed a cron job “autonomous intelligence” and added a glowing purple gradient to the homepage.

The problem is not that AI agents are fake. The problem is that too many products wearing the “agentic” badge are not agents in any serious sense. They are often LLM wrappers connected to APIs, scheduled tasks, templates, and a suspicious amount of human babysitting.

Useful? Sometimes.

Autonomous? Let’s not get emotionally carried away.

IBM defines agentic AI as an AI system that can accomplish a specific goal with limited supervision, while Google Cloud describes AI agents as systems that pursue goals, complete tasks, and show reasoning, planning, memory, and some level of autonomy. That is the standard buyers should use before believing the sales deck.

Why Agentic AI 2026 Became a Marketing Costume

The phrase “agentic AI” is commercially irresistible because it sounds more advanced than “automation” and more magical than “workflow software.”

For founders, it makes the product look future-proof.

For investors, it sounds like the next platform shift.

For buyers, it promises productivity without hiring more people.

For marketing teams, it is basically a piñata stuffed with buzzwords.

But CTOs and AI buyers need to ask a painfully boring question:

What exactly is agentic here?

Not “does it use an LLM?”

Not “does it call an API?”

Not “does it write a polite email?”

Not “does the landing page say autonomous seventeen times?”

The real questions are harder.

| Buyer Question | Why It Matters |
|---|---|
| Does the system maintain state? | It should know where a workflow stands. |
| Does it have usable memory? | It should not forget what it already tried. |
| Can it recover from failure? | Real work gets messy. Demos do not. |
| Does it respect permissions? | Autonomy without access control is chaos with a login. |
| Does it leave an audit trail? | If it breaks something, someone needs to know what happened. |
| Is someone accountable? | “The model decided” is not a governance policy. |

Reuters reported Gartner’s warning that many vendors are engaging in “agent washing,” meaning they rebrand assistants and chatbots as agentic tools without significant agentic capability. Gartner also said more than 40% of agentic AI projects could be canceled by the end of 2027 due to rising costs and unclear business value.

So yes, the hype is real. But so is the disappointment invoice.

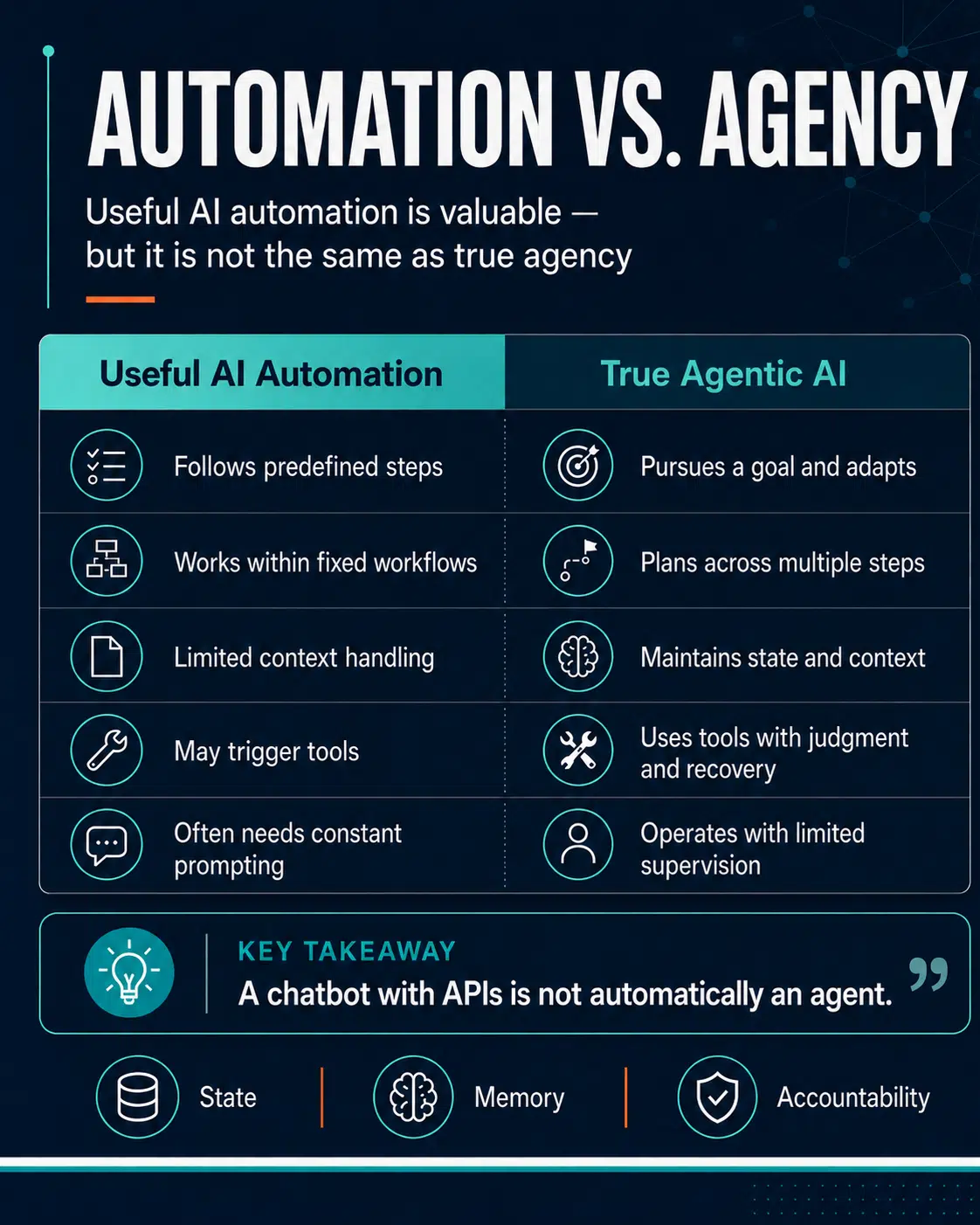

The Difference Between Useful AI Automation and Real Agency

Let’s be fair. Not every product needs to be a fully autonomous agent.

A tool that summarizes meetings can be useful.

A tool that drafts emails can be useful.

A tool that routes customer tickets can be useful.

A tool that extracts invoice data can be useful.

The problem starts when ordinary automation gets dressed up as autonomous AI because “automation” sounds too 2019.

Here is the practical distinction.

| Category | What It Does | What It Is Not |
|---|---|---|
| AI Assistant | Responds to prompts and helps with tasks | Not autonomous by default |
| AI Automation | Executes defined workflows | Not necessarily adaptive |
| LLM Wrapper | Uses a language model around an app or process | Not automatically agentic |
| AI Agent | Pursues goals, plans steps, uses tools, adapts, and tracks progress | Should not need constant babysitting |
| Enterprise Agentic System | Coordinates tools, data, permissions, memory, logs, and governance | Not just a chatbot with API access |

Google Cloud lists reasoning, acting, observing, planning, and collaborating as key features of AI agents. OpenAI’s Agents documentation also points to state, handoffs, guardrails, human review, observability, and evaluations as workflows become more complex. In plain English: real agents need operational plumbing, not just confident sentences.

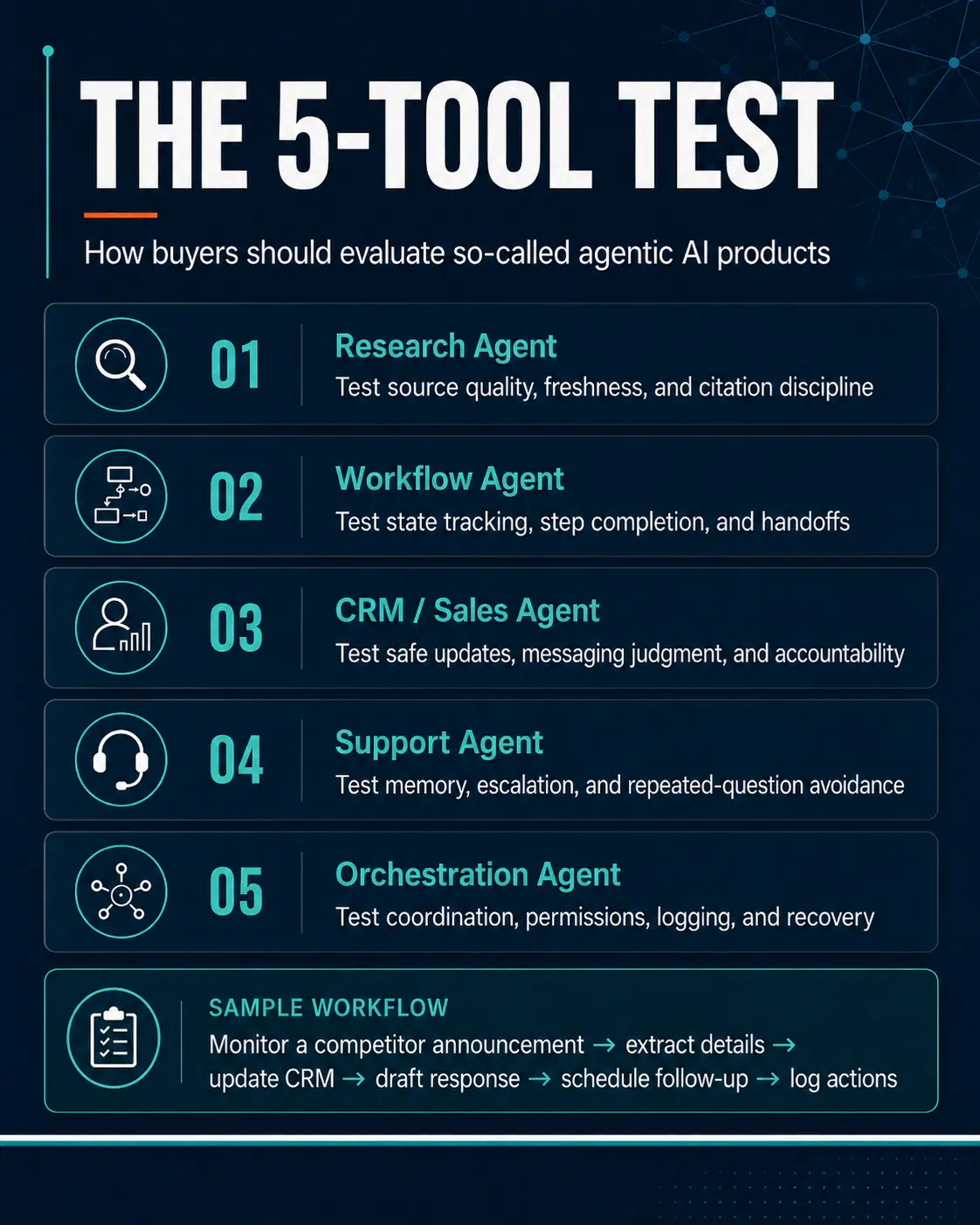

The Five-Tool Test Buyers Should Run

Instead of believing the phrase “agentic AI,” buyers should run a workflow test.

Not a cute demo.

Not “summarize this PDF.”

Not “write a friendly email.”

A real workflow.

Here is the test I would use:

Workflow: Monitor a competitor announcement, extract key business details, compare them with internal positioning, update a CRM note, draft a sales response, schedule a follow-up task, and generate an audit trail.

That workflow forces the system to handle research, judgment, state, memory, tools, permissions, and handoffs. In other words, exactly the places where the limitations of AI agents become visible.

Five Agentic Tool Categories to Test

| Tool Type | What to Test | Where It Usually Breaks |
|---|---|---|
| Research Agent | Can it find, verify, and cite fresh information? | Source quality, outdated context, weak confidence signals |
| Workflow Agent | Can it move through multi-step tasks without losing state? | Duplicate actions, skipped steps, broken handoffs |
| CRM/Sales Agent | Can it update records and draft useful responses safely? | Wrong account context, risky messaging, poor accountability |
| Support Agent | Can it remember issue history and escalate properly? | Repeated questions, shallow memory, weak escalation judgment |
| Orchestration Agent | Can it coordinate tools, agents, approvals, and logs? | Observability, permissions, failure recovery, auditability |

This is where the fantasy usually meets the furniture.

A research agent may summarize well but fail to separate fresh data from stale data. A CRM agent may write a beautiful email but update the wrong account. A support agent may sound helpful while asking the customer for the same order number three times. Congratulations, your agent has achieved sentience at the level of a confused help desk script.

Before trusting any AI platform, test it against real creative and business workflows. A good tool should reduce friction, not add another layer of confusion.

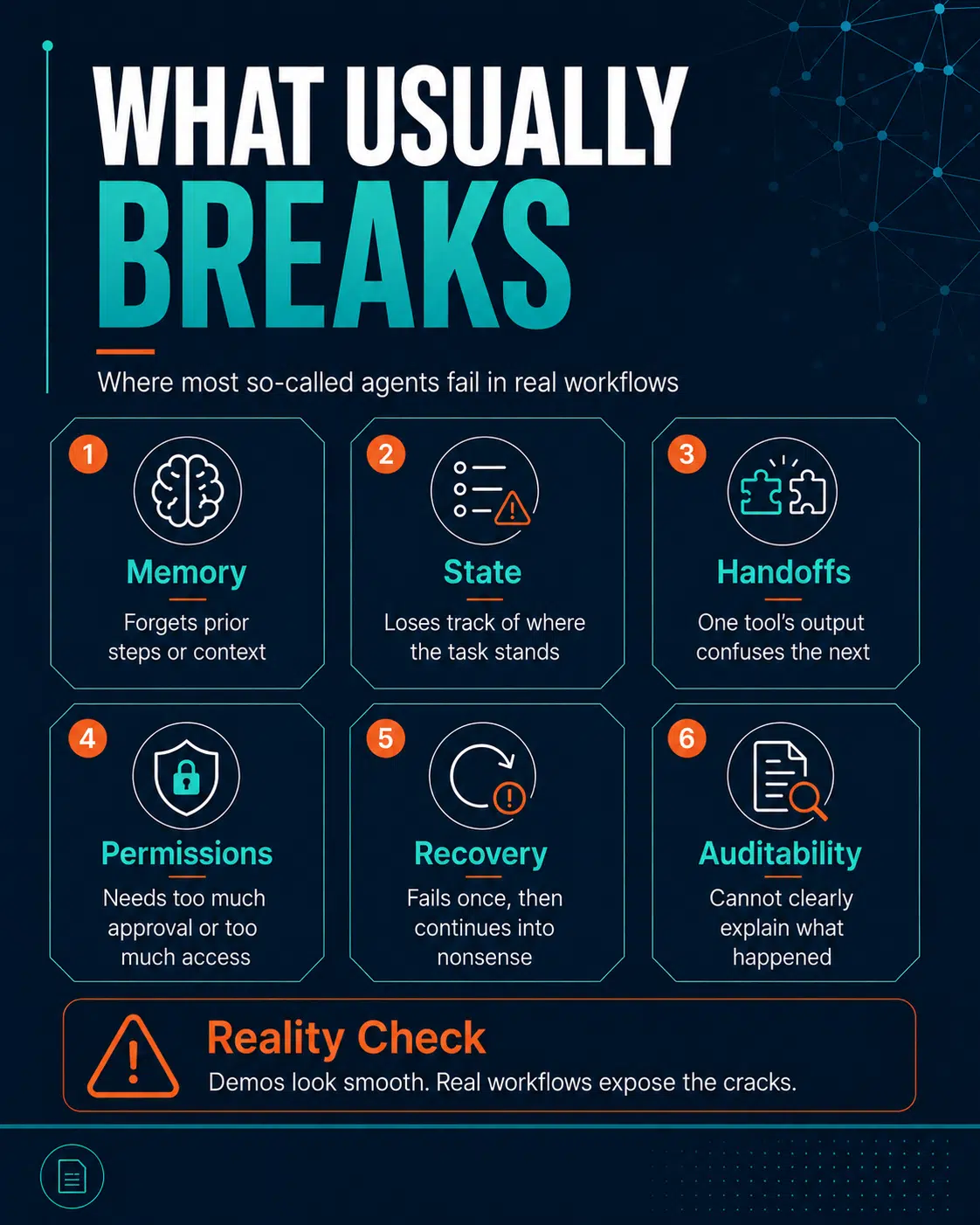

What Usually Breaks First

When agentic products are tested against real workflows, the failure pattern is not mysterious.

The common weak points are:

- Memory: The system forgets previous steps or treats chat history as durable memory.

- State: It cannot reliably tell whether a task is pending, complete, blocked, or duplicated.

- Handoffs: One tool produces output that another tool misreads.

- Permissions: It either needs approval for everything or gets too much access.

- Recovery: It fails once and then continues confidently into nonsense.

- Auditability: It cannot clearly explain what it did, why it did it, or what changed.
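To make the State and Recovery items above concrete, here is a minimal sketch of what "knowing where a workflow stands" can look like. It is not any vendor's design: `WorkflowRun`, `Status`, and the step names are hypothetical, and a real system would persist this record in a database rather than in memory. The point is only that step status is stored explicitly, finished steps are never repeated, and a failure blocks the run instead of letting it continue.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Status(Enum):
    PENDING = "pending"
    COMPLETE = "complete"
    BLOCKED = "blocked"


@dataclass
class WorkflowRun:
    """Hypothetical record of one workflow run (illustrative names only)."""
    steps: list[str]
    status: dict[str, Status] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Keep any status restored from storage; new steps start as pending.
        for name in self.steps:
            self.status.setdefault(name, Status.PENDING)

    def execute(self, actions: dict[str, Callable[[], None]]) -> bool:
        """Run steps in order. Return True only if every step is complete."""
        for name in self.steps:
            if self.status[name] is Status.COMPLETE:
                self.log.append(f"skip {name}: already complete")
                continue  # never repeat a finished action
            try:
                actions[name]()
            except Exception as exc:
                self.status[name] = Status.BLOCKED
                self.log.append(f"blocked {name}: {exc}")
                return False  # stop here rather than carry on with bad state
            self.status[name] = Status.COMPLETE
            self.log.append(f"done {name}")
        return True


def failing_draft() -> None:
    # Stand-in for a tool call that fails, e.g. a mail API timing out.
    raise RuntimeError("mail API timeout")


demo = WorkflowRun(steps=["update_crm", "draft_reply", "schedule_followup"])
demo.execute({
    "update_crm": lambda: None,
    "draft_reply": failing_draft,
    "schedule_followup": lambda: None,
})
print(demo.status)  # update_crm complete, draft_reply blocked, follow-up pending
```

Calling `execute` again after the failing tool is fixed resumes from the blocked step and skips `update_crm`, which is the behavior a buyer should expect to see in a real product's retry path.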

LangChain’s State of Agent Engineering report found that 57% of surveyed respondents had agents in production, while 32% cited quality as a top barrier. It also found that nearly 89% had implemented some form of observability, showing that serious teams know agents cannot be trusted at scale without visibility.

That is the important part. Real builders are not saying, “Just vibe-code a magic worker and let it roam through Salesforce.” They are talking about tracing, evals, debugging, observability, and quality.

Less appealing? Yes.

More useful than another “autonomous workforce” keynote? Absolutely.

The Enterprise Problem: Agent Sprawl Is Coming

The bigger issue is not one bad agent. It is too many poorly governed agents.

Gartner predicted that by 2028, an average global Fortune 500 enterprise could have more than 150,000 agents in use, up from fewer than 15 in 2025. Gartner also said only 13% of organizations believe they have the right AI agent governance in place.

That should make every CTO sit up straight.

Because 150,000 agents do not sound like innovation if nobody knows:

- who created them,

- what systems they can access,

- what data they can read,

- what actions they can take,

- what logs they produce,

- who owns their mistakes.

At that point, you do not have digital labor. You have a corporate ant colony with OAuth tokens.

McKinsey also argues that scaling agentic AI depends on strong data foundations, modernized architecture, data quality, governance, and operating-model changes. It specifically notes that agentic AI places greater pressure on access control, lineage, traceability, and governance because agents coordinate models and data sources continuously.

Translation: You cannot sprinkle agents over a messy data stack and expect enlightenment.
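The six questions in the list above (who created an agent, what it can access, read, and do, what it logs, and who owns its mistakes) map naturally onto an inventory record. The sketch below is one hypothetical way to write that down. `AgentRecord`, its field names, and the three checks in `governance_gaps` are assumptions for illustration, not a standard or any vendor's schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry for one deployed agent."""
    name: str
    created_by: str                                  # who created it
    owner: Optional[str]                             # who owns its mistakes
    systems: frozenset[str] = frozenset()            # systems it can access
    readable_data: frozenset[str] = frozenset()      # data it can read
    allowed_actions: frozenset[str] = frozenset()    # actions it can take
    log_sink: Optional[str] = None                   # where its logs go


def governance_gaps(record: AgentRecord) -> list[str]:
    """Return the unanswered governance questions for one agent."""
    gaps = []
    if not record.owner:
        gaps.append("no accountable owner")
    if not record.log_sink:
        gaps.append("no log destination")
    if record.systems and not record.allowed_actions:
        gaps.append("system access without an explicit action list")
    return gaps


orphan = AgentRecord(
    name="mystery-bot",
    created_by="unknown",
    owner=None,
    systems=frozenset({"crm"}),
)
print(governance_gaps(orphan))
```

An inventory like this does not govern anything by itself, but an organization that cannot fill in these fields for an agent has its answer about whether that agent is governed.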

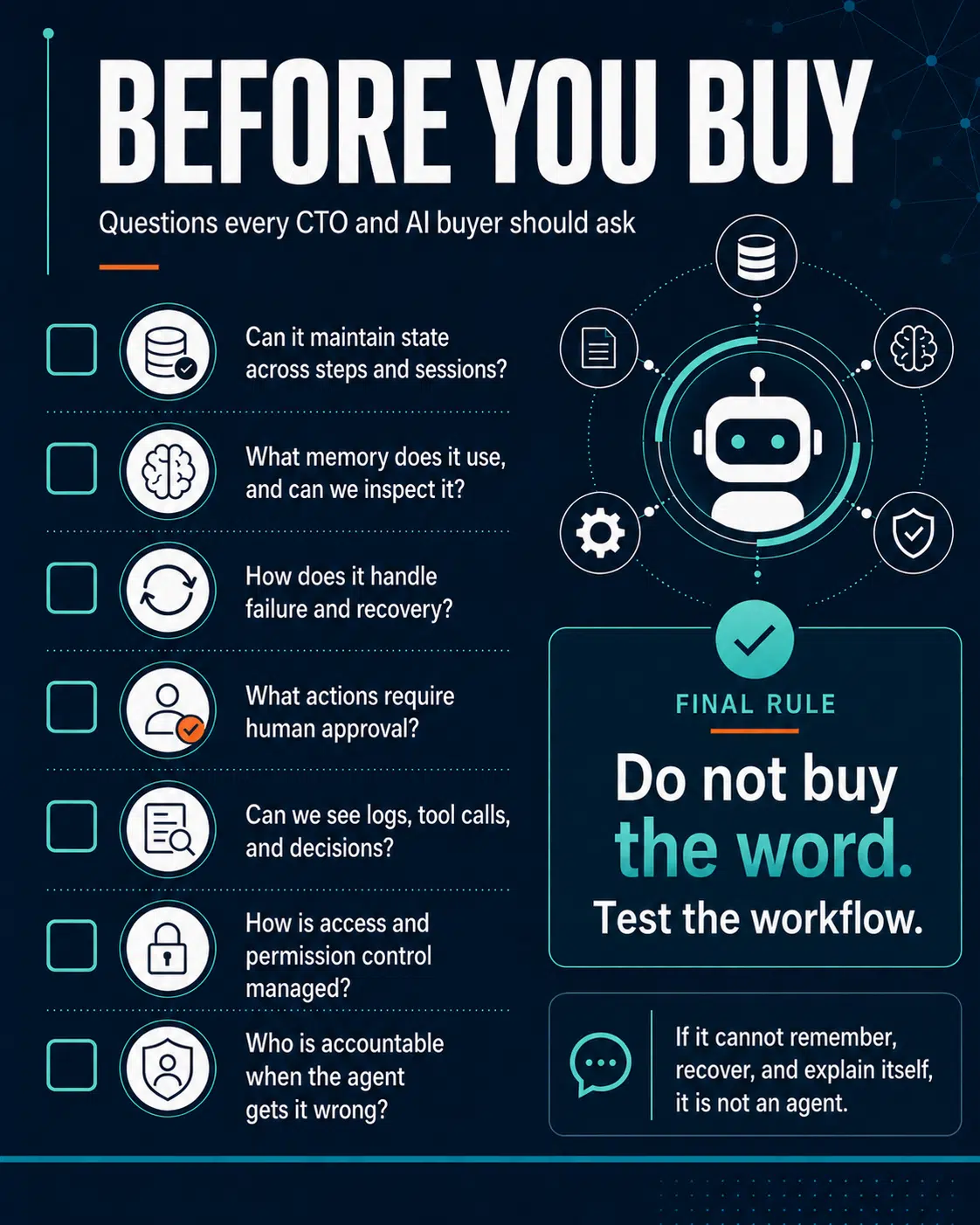

The Buyer Checklist for Agentic AI 2026

Before buying any agentic AI product in 2026, CTOs, founders, and AI buyers should ask direct questions. Not inspirational questions. Not “where do you see the future of work?” questions. Actual questions.

| Area | Questions Buyers Should Ask |
|---|---|
| State | Can the agent track workflow progress across steps and sessions? |
| Memory | What memory does it use, and can that memory be inspected or corrected? |
| Permissions | What actions require approval before execution? |
| Guardrails | How does the system block unsafe, wrong, or unauthorized actions? |
| Observability | Can we see tool calls, retrieved data, decisions, and failures? |
| Evaluation | How do you test agent quality before and after deployment? |
| Recovery | What happens when a tool fails or returns bad data? |
| Audit Trail | Can the system explain what it changed and why? |
| Accountability | Who is responsible when the agent causes damage? |

The best vendors will answer these clearly.

The weak ones will say something like, “Our proprietary reasoning engine handles that dynamically.”

That is usually your cue to protect the budget and slowly back away from the demo.
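For the Observability and Audit Trail rows, it helps to know what a clear answer could look like. The sketch below shows one possible shape for a single audit entry, using only the Python standard library. The function name `audit_entry`, the field names, and the example tool name `crm.update_note` are all hypothetical. Real products will differ, but a buyer can reasonably ask to see the equivalent record.

```python
import json
from datetime import datetime, timezone
from typing import Any, Optional


def audit_entry(
    agent: str,
    tool: str,
    arguments: dict[str, Any],
    outcome: str,
    reason: str,
    before: Optional[str] = None,
    after: Optional[str] = None,
) -> str:
    """Serialize one tool call as a JSON line: what ran, why, and what changed."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "arguments": arguments,
        "outcome": outcome,   # e.g. "success" or "failed"
        "reason": reason,     # the agent's stated justification
        "change": {"before": before, "after": after},
    }
    return json.dumps(record, sort_keys=True)


line = audit_entry(
    agent="sales-agent-01",
    tool="crm.update_note",
    arguments={"account_id": "A-123"},
    outcome="success",
    reason="competitor announcement affects this account",
    before="No competitor activity noted.",
    after="Competitor launched a rival product; follow-up scheduled.",
)
print(line)
```

If a vendor cannot show something with at least this much detail for every action the agent takes, the “Can the system explain what it changed and why?” question has been answered.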

Human-in-the-Loop Is Not Always a Flex

A lot of vendors proudly mention “human-in-the-loop.” Sometimes that is a good thing. For sensitive workflows, human review is necessary. OpenAI’s agent documentation, for example, describes human review as a way to pause a run so a person or policy can approve or reject a sensitive action.

But let’s not pretend every human-in-the-loop design is a masterpiece of responsible AI.

Sometimes it means:

- The system cannot be trusted alone,

- The workflow is not truly autonomous,

- The model fails too often,

- The vendor needs humans to cover the cracks.

Human review is valuable when it is intentional. It is less impressive when it exists because the “agent” keeps wandering into walls.
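Intentional review can be sketched as a gate that pauses only the actions a policy marks as sensitive and lets everything else run. The example below is an illustration under stated assumptions, not OpenAI's API or any product's implementation: the `SENSITIVE` set, the `Decision` type, and `run_action` are hypothetical names, and the `approve` callback stands in for a person or a policy engine.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed policy: which actions need sign-off. A real list would come from
# the organization's own risk rules, not from the vendor's defaults.
SENSITIVE = {"send_email", "update_crm", "issue_refund"}


@dataclass
class Decision:
    action: str
    executed: bool
    reason: str


def run_action(
    action: str,
    do: Callable[[], None],
    approve: Callable[[str], bool],
) -> Decision:
    """Execute low-risk actions directly; pause sensitive ones for review."""
    if action not in SENSITIVE:
        do()
        return Decision(action, True, "low risk: no approval required")
    if approve(action):
        do()
        return Decision(action, True, "approved by reviewer")
    return Decision(action, False, "rejected by reviewer")


sent = []
result = run_action(
    "send_email",
    do=lambda: sent.append("email"),
    approve=lambda name: False,  # reviewer says no
)
print(result)
```

A useful buyer question follows from this shape: what share of actions land in the sensitive set? If the honest answer is "nearly all of them," the humans are not a safeguard, they are the product.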

The Real Definition Buyers Should Use

Here is the definition I would use.

A real agentic AI system should be able to:

- understand a goal,

- plan steps,

- use approved tools,

- maintain state,

- use memory when necessary,

- adapt to new information,

- recover from failure,

- respect permissions,

- produce logs,

- explain what it did,

- and make accountability possible.

Everything else may still be useful. But it is not the full agentic promise.

And that is the point. This is not an argument against AI automation. It is an argument against sloppy labeling.

Call a chatbot a chatbot.

Call automation automation.

Call a workflow assistant a workflow assistant.

Call an LLM wrapper an LLM wrapper.

There is dignity in accurate naming. There is also less chance of buying a product that needs six engineers, three consultants, and a prayer circle to operate safely.

Final Thoughts

“Agentic AI” is overused in 2026 because it sells a future most products have not earned yet. Real agency requires more than prompts, plugins, API calls, and a landing page full of words like “autonomous,” “adaptive,” and “next-generation.”

It requires state, memory, recovery, permissions, observability, governance, and accountability.

Some serious AI agents will emerge. Some already show pieces of the right architecture. But most products calling themselves agentic should be treated as suspects until proven otherwise.

For CTOs, founders, and AI buyers, the rule is simple: Do not buy the word. Test the workflow.

If the product cannot remember what happened, understand where it is in the task, recover when something breaks, explain what it did, and show who is accountable, then it is not an agent. It is an LLM wrapper in a blazer. And frankly, the blazer is doing most of the work.