A computer might be able to read your thoughts if you’re willing to lie perfectly still for 16 hours inside a large metal tube and allow magnets to bombard your brain as you listen raptly to popular podcasts.

At the very least, their rough contours. In a recent study, University of Texas at Austin researchers trained an AI model to capture the gist of a limited set of sentences as people listened to them, pointing to a near future in which artificial intelligence can help us better understand how humans think.

The program examined fMRI scans of people listening to, or even just recalling, sentences from three podcasts: Modern Love, The Moth Radio Hour, and The Anthropocene Reviewed. It then used the brain-imaging data to reconstruct the content of those sentences. For instance, when one subject heard “I don’t have my driver’s license yet,” the program analyzed the person’s brain scans and returned “She has not even started to learn to drive yet,” which is not an exact re-creation of the original text but a close approximation of the idea conveyed. The program was also able to analyze fMRI data from people who watched short films and produce approximate summaries of the scenes, suggesting that the AI was capturing underlying meanings rather than simply extracting individual words from the brain scans.

The results, which were published in Nature Neuroscience earlier this month, contribute to a new area of study that turns the conventional understanding of AI on its head. For many years, researchers have borrowed ideas from the human brain to build intelligent machines. Layers of artificial “neurons” (collections of equations that act like nerve cells by sending outputs to one another) are the foundation of programs like ChatGPT, Midjourney, and, more recently, voice-cloning software. Yet even though human cognition has long influenced the design of “intelligent” computer programs, much about how our brains actually function remains a mystery. Reversing that approach, researchers are now seeking to better understand the mind by studying synthetic neural networks instead of our biological ones. MIT cognitive scientist Evelina Fedorenko says it’s “unquestionably leading to advancements that we just couldn’t imagine a few years ago.”

The AI program’s purported capacity for mind reading has generated controversy on social media and in the press. However, that element of the research is “more of a parlor trick,” according to Alexander Huth, a neuroscientist at UT Austin and a lead author of the Nature Neuroscience paper. Most brain-scanning techniques produce extremely low-resolution data, and the models used in this study were relatively imprecise and had to be customized for each participant. As a result, we are still a very long way from a program that can be plugged into anyone’s brain and understand what they are thinking. The real value of this work lies in predicting which brain regions become active while a person hears or imagines words, which may provide a deeper understanding of the precise ways in which our neurons cooperate to produce language, one of humanity’s defining characteristics.

According to Huth, successfully building a program that can accurately re-create the meaning of sentences largely serves as “proof-of-principle that these models actually capture a lot about how the brain processes language.” Before this fledgling AI revolution, neuroscientists and linguists depended on verbal descriptions of the brain’s language network that were vague and difficult to connect directly to observable brain activity. It was difficult or even impossible to test theories about which linguistic functions various brain regions might be responsible for (perhaps one area handles sound recognition, another syntax, and so on), let alone the fundamental question of how the brain learns a language. With AI models, scientists can now define more precisely what those processes entail. According to Jerry Tang, the study’s second lead author and a computer scientist at UT Austin, the advantages could go beyond academic concerns, for instance by helping people with particular disabilities. He explained to me, “Our ultimate goal is to assist in restoring communication to people who have lost their ability to speak.”

There has been significant resistance to the notion that AI can aid brain research, particularly among neuroscientists who focus on language. That’s because neural networks, which excel at identifying statistical patterns, don’t appear to possess fundamental components of how people process language, such as a grasp of what words mean. The difference between human and machine cognition is also intuitive: A program like GPT-4, which can compose decent essays and perform exceptionally well on standardized tests, learns by analyzing terabytes of data from books and websites, while children pick up a language from a tiny fraction of that quantity of words. “Teachers warned us that artificial neural networks are truly not the same as biological neural networks,” the neuroscientist Jean-Rémi King told me of his research in the late 2000s. “Simply put, this was a metaphor.” King, who now directs Meta’s research on the brain and AI, is one of many experts challenging that dated notion. “We don’t think of this as a metaphor,” he said to me. “We view artificial intelligence as a very helpful model of how the brain processes information.”

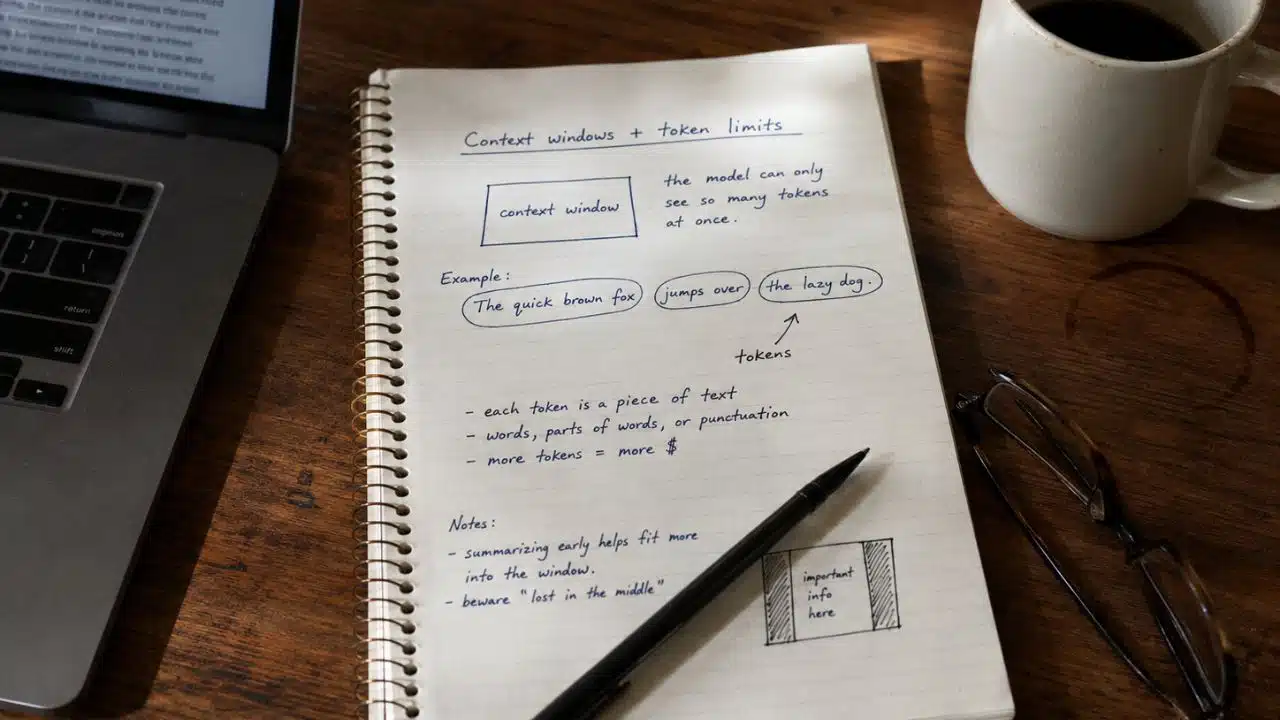

In recent years, scientists have shown that the inner workings of sophisticated AI programs provide a promising mathematical model of how our minds process language. The neural network underlying ChatGPT or a similar program converts the sentences you type into a series of numbers. fMRI scans can record how a subject’s neurons respond to the same words, and a computer can interpret those scans as essentially another series of numbers. These operations are repeated on a great many sentences to build two massive data sets: one of how a machine represents language and another of how a human does. Researchers can then map the relationship between these data sets using an approach called an encoding model. Once that is complete, the encoding model can start extrapolating: Using the AI’s response to a sentence as a guide, it can predict how neurons in the brain will respond to that sentence.
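To make the idea of an encoding model concrete, here is a minimal sketch in Python. It is not the method used in the study. It assumes a simple ridge regression as the mapping, and it uses randomly generated numbers in place of real language-model features and fMRI responses. The function names (`fit_encoding_model`, `predict_responses`, `voxelwise_correlation`) and all sizes are hypothetical choices made for illustration.

```python
import numpy as np

def fit_encoding_model(features, responses, alpha=1.0):
    """Ridge regression: find weights W so that features @ W approximates responses.

    features:  (n_sentences, n_features) numbers a language model assigns to each sentence
    responses: (n_sentences, n_voxels) brain responses to the same sentences
    """
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def predict_responses(features, weights):
    """Predict brain responses to sentences from their language-model features."""
    return features @ weights

def voxelwise_correlation(predicted, actual):
    """Pearson correlation between predicted and actual responses, one value per voxel."""
    p = predicted - predicted.mean(axis=0)
    a = actual - actual.mean(axis=0)
    return (p * a).sum(axis=0) / (np.linalg.norm(p, axis=0) * np.linalg.norm(a, axis=0))

# Synthetic stand-ins (assumption): real studies use model activations and fMRI data.
rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels = 400, 100, 16, 5
true_map = rng.normal(size=(n_features, n_voxels))  # hidden feature-to-voxel relationship
train_x = rng.normal(size=(n_train, n_features))
test_x = rng.normal(size=(n_test, n_features))
train_y = train_x @ true_map + 0.5 * rng.normal(size=(n_train, n_voxels))
test_y = test_x @ true_map + 0.5 * rng.normal(size=(n_test, n_voxels))

# Fit on one set of sentences, then extrapolate to sentences the model has not seen.
weights = fit_encoding_model(train_x, train_y)
scores = voxelwise_correlation(predict_responses(test_x, weights), test_y)
print(scores.round(2))
```

The important step is the last one: the mapping is fitted on one set of sentences and scored on another, which is what “extrapolating” amounts to here.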

It seems like new studies employing AI to examine the language network in the brain are published every few weeks. According to Nancy Kanwisher, a neuroscientist at MIT, each of these models might constitute “a computationally precise hypothesis about what might be going on in the brain.” AI could, for example, provide insight into open questions such as what precisely the human brain is trying to do while learning a language: not simply that a person is learning to speak, but the specific neural processes through which communication occurs. The hypothesis runs as follows: If a computer model trained with a specific goal, such as predicting the next word in a sequence or assessing the grammatical coherence of a sentence, proves to be the best at predicting brain responses, then the human mind may share that goal. Perhaps, like GPT-4, our minds function by figuring out which words are most likely to follow one another. In this way, the inner workings of a language model become a computational theory of the brain.
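The comparison described above can be sketched as a small experiment. This is an illustration on simulated data, not a result from any study: the two candidate feature sets are random numbers, the names `next_word_model` and `grammar_model` are hypothetical labels, and the simulated “brain” is deliberately built from the first set, so the outcome is known in advance.

```python
import numpy as np

def heldout_score(features, responses, n_train, alpha=1.0):
    """Fit a ridge regression on the first n_train sentences and return the
    mean per-voxel correlation between predictions and responses on the rest."""
    x_tr, x_te = features[:n_train], features[n_train:]
    y_tr, y_te = responses[:n_train], responses[n_train:]
    gram = x_tr.T @ x_tr + alpha * np.eye(x_tr.shape[1])
    weights = np.linalg.solve(gram, x_tr.T @ y_tr)
    predicted = x_te @ weights
    corrs = [np.corrcoef(predicted[:, v], y_te[:, v])[0, 1]
             for v in range(y_te.shape[1])]
    return float(np.mean(corrs))

rng = np.random.default_rng(1)
n_sentences, n_features, n_voxels = 500, 12, 4

# Two hypothetical models trained with different goals (random stand-in features).
candidates = {
    "next_word_model": rng.normal(size=(n_sentences, n_features)),
    "grammar_model": rng.normal(size=(n_sentences, n_features)),
}

# Assumption: the simulated responses are driven by the next-word features plus noise.
mapping = rng.normal(size=(n_features, n_voxels))
responses = candidates["next_word_model"] @ mapping + rng.normal(size=(n_sentences, n_voxels))

scores = {name: heldout_score(x, responses, n_train=400)
          for name, x in candidates.items()}
best = max(scores, key=scores.get)
print(best, scores)
```

With real data the ranking is the finding. A higher score makes the corresponding training goal a stronger candidate hypothesis about what the brain is doing, though it does not prove the brain shares that goal.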

Because these computational methods are so new, debates and competing theories abound. Francisco Pereira, the National Institute of Mental Health’s director of machine learning, told me that there is no reason why the representation you learn from language models must have anything to do with how the brain represents a sentence. But that doesn’t mean a relationship cannot exist, and there are ways to test whether one does. Even though AI algorithms aren’t exact replicas of the brain, they are effective research tools because, unlike the brain, they can be dissected, scrutinized, and altered almost without limit. For instance, cognitive scientists can attempt to predict the responses of targeted brain regions and test how different types of sentences elicit different types of brain responses, in order to determine what particular clusters of neurons do. “And then step into territory that is unknown,” said Greta Tuckute, an expert on the relationship between the brain and language at MIT.

For the time being, AI may be useful not for precisely replicating that uncharted neural terrain but for devising heuristics for exploring it. “If you have a map that reproduces every little detail of the world, the map is useless because it is the same size as the world,” said Anna Ivanova, a cognitive scientist at MIT, invoking a classic Borges story. “Therefore, abstraction is required.” Scientists are starting to map the linguistic geography of the brain by deciding what to keep and what to discard (picking among streets, landmarks, and buildings) and then evaluating how useful the resulting map is.