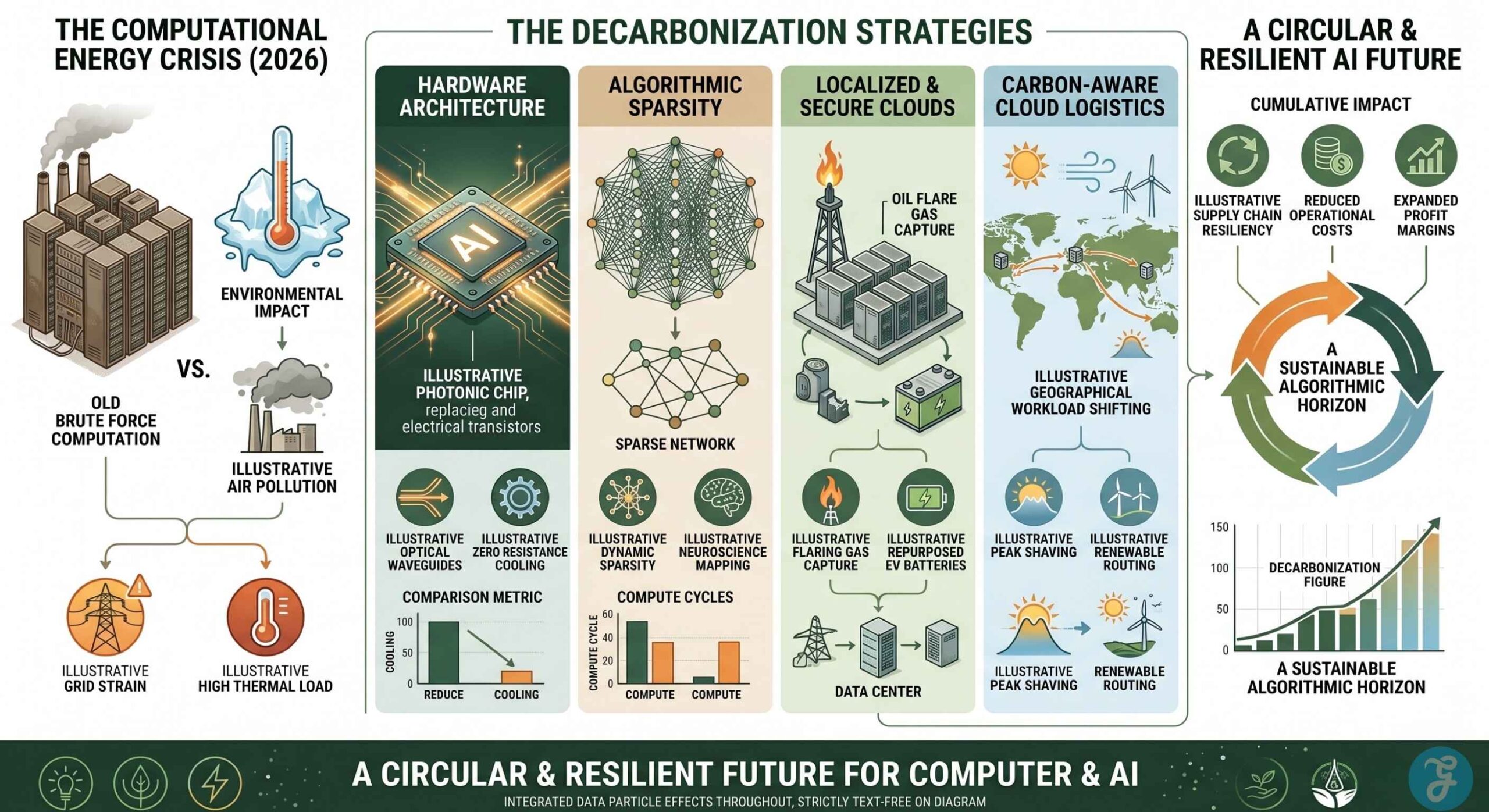

Sustainable AI Labs are fundamentally changing how the tech sector scales massive computational power in 2026. The generative artificial intelligence boom created an unprecedented energy crisis over the last few years. Major technology companies quickly realized that building larger neural networks through brute force computation is no longer physically or economically viable.

The electrical grid is struggling to support the cooling and power demands of traditional data centers. As a result, the priority has shifted from raw accuracy alone toward energy efficiency and decarbonized infrastructure. Software engineers and hardware architects now focus on floating-point operations per watt and carbon-aware routing to keep the future of machine learning ecologically responsible.
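To make the floating-point operations per watt metric concrete, here is a minimal Python sketch. The function name and both accelerator figures are invented for illustration and do not describe any real product. Because a watt is one joule per second, dividing sustained throughput by power draw gives operations per joule of energy.

```python
def flops_per_watt(flops_per_second: float, power_watts: float) -> float:
    """Sustained throughput divided by power draw.

    Since 1 W = 1 J/s, the result is numerically equal to
    floating-point operations per joule.
    """
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return flops_per_second / power_watts


# Hypothetical accelerators; the numbers are assumptions for illustration only.
chip_a = flops_per_watt(flops_per_second=300e12, power_watts=700)
chip_b = flops_per_watt(flops_per_second=200e12, power_watts=350)

print(f"chip A: {chip_a / 1e9:.0f} GFLOPS per watt")
print(f"chip B: {chip_b / 1e9:.0f} GFLOPS per watt")
```

In this made-up comparison, chip B is slower in absolute terms but does more work per unit of energy, which is the trade-off the metric is meant to expose.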

Identifying the true pioneers in this space requires looking past basic carbon offset credits and focusing on organizations that are fundamentally redesigning the architecture of compute. The transition toward a fully sustainable digital ecosystem demands a massive shift in how enterprise businesses approach technology. Hardware is now being engineered to minimize thermal output while software algorithms are being trained to operate on extreme sparsity.

Leading Innovators in Green Compute Architecture

Navigating the complex landscape of green computing requires a deep understanding of how different methodologies solve specific thermal and electrical bottlenecks. The organizations listed below are tackling the crisis from entirely different angles. Some are redesigning the physical layout of the microchip, while others are utilizing stranded energy resources to power localized microgrids.

1. Allen Institute for AI (AI2) Green AI Team

The Allen Institute for AI has fundamentally changed how the technology industry evaluates algorithmic success by introducing crucial environmental metrics into the development pipeline. Operating out of Seattle, this foundational research lab originally coined the term Green AI to actively combat the industry trend of buying pure accuracy through massive computational brute force. They are forcing developers to account for carbon emissions before deploying massive language models into commercial production.

Target Operator: Open source developers and academic researchers prioritizing carbon transparency

Primary Innovation: They established the foundational metrics for energy to accuracy ratios and actively developed essential open-source carbon tracking tools for software engineers.

Deployment Constraint: Their specific tools focus heavily on academic evaluation and software metrics rather than directly altering commercial data center physical infrastructure.
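As a rough illustration of what an energy-to-accuracy comparison can look like, the Python sketch below converts measured energy into estimated emissions and then reports accuracy per kilowatt-hour. This is not AI2's tooling. The function names, the grid intensity, and both training runs are hypothetical values chosen for the example.

```python
def emissions_kg(energy_kwh: float, grid_g_per_kwh: float) -> float:
    """Estimated emissions in kg CO2e: energy used times grid carbon intensity."""
    return energy_kwh * grid_g_per_kwh / 1000.0


def accuracy_per_kwh(accuracy: float, energy_kwh: float) -> float:
    """A simple efficiency ratio: accuracy points earned per kWh spent."""
    return accuracy / energy_kwh


# Assumed grid intensity in g CO2e per kWh (illustrative, not a measured value).
GRID_G_PER_KWH = 400.0

# Two hypothetical training runs with invented results.
runs = {
    "large": {"accuracy": 0.92, "energy_kwh": 1200.0},
    "compact": {"accuracy": 0.90, "energy_kwh": 150.0},
}

for name, run in runs.items():
    kg = emissions_kg(run["energy_kwh"], GRID_G_PER_KWH)
    ratio = accuracy_per_kwh(run["accuracy"], run["energy_kwh"])
    print(f"{name}: {kg:.0f} kg CO2e, {ratio:.5f} accuracy per kWh")
```

Under these assumptions, the compact run gives up two points of accuracy for an eightfold cut in energy, which is the kind of trade-off a Green AI report is meant to surface.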

2. Crusoe Energy Systems

Operating at the critical intersection of climate technology and artificial intelligence, Crusoe Energy Systems takes massive compute operations entirely off the strained traditional power grid. Headquartered in Denver, this company solves two major environmental crises simultaneously.

They capture waste methane flare gas directly from oil fields and use it to power modular data centers located directly on the extraction site. As of early 2026, their localized clean energy microgrids have become even more robust through a strategic partnership with Redwood Materials to integrate second-life electric vehicle batteries.

Target Operator: Heavy compute enterprises seeking completely off-grid modular data center solutions

Primary Innovation: They uniquely capture stranded methane flare gas and utilize recycled EV batteries to create localized microgrids specifically designed for heavy machine learning workloads.

Deployment Constraint: The physical location of these data centers is tied directly to existing oil and gas infrastructure, which limits geographical flexibility for enterprise deployment.

3. MIT CSAIL Energy Efficient Machine Learning Group

This prestigious academic laboratory, located in Cambridge, focuses entirely on the rigorous hardware and software co-design required to make deep learning sustainable at the edge of the network. Led by pioneers in hardware architecture, they specialize in model pruning and deep compression techniques. Their research proves that massive neural networks do not always need to live on giant centralized servers. By engineering algorithms that fit onto highly constrained devices, they are decentralizing the power requirements of artificial intelligence.

Target Operator: Hardware developers and mobile application engineers running localized edge inference

Primary Innovation: They lead the industry in TinyML and deep model compression techniques that eliminate the need for constant energy-hungry cloud server connectivity.

Deployment Constraint: Massive foundational artificial intelligence models still require traditional hyperscaler cloud infrastructure for their initial highly intensive training phases.
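Model pruning, mentioned above, can be sketched in a few lines of Python. The example shows plain magnitude pruning, one common pruning technique, and is not a reproduction of any specific MIT method. The function name, the weights, and the 50% sparsity target are assumptions for illustration.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Hypothetical layer weights, invented for the example.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)
```

In practice, pruning is usually followed by fine-tuning to recover accuracy, and the energy benefit depends on storing and executing the result in a format that actually skips the zeros.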

4. Point2 Technology

Located in San Jose, this deep technology startup targets the internal energy bottlenecks that plague modern enterprise server clusters. Moving data between graphics processing units within a large artificial intelligence data center consumes a great deal of electricity because of the resistive losses and signal degradation of standard copper wiring. Point2 Technology recently won the prestigious 2026 BloombergNEF Pioneer award for climate innovation by addressing this exact physical limitation.

Target Operator: Data center architects looking to drastically reduce internal server cluster energy consumption

Primary Innovation: Their proprietary radio frequency interconnects over plastic waveguides solve the massive electrical resistance and thermal issues of internal server communication.

Deployment Constraint: Implementation requires significant hardware retrofitting and specialized installation within existing legacy enterprise server racks.

5. Stanford Center for Research on Foundation Models (CRFM)

The Stanford CRFM operates as the leading academic body auditing the environmental impact and exact energy sources of corporate artificial intelligence. Based in California, they develop comprehensive Ecosystem Graphs and transparency indexes that evaluate major foundation models deployed by massive technology conglomerates. They are actively lobbying the federal government for standardized energy reporting metrics to ensure the industry cannot hide behind vague sustainability pledges.

Target Operator: Policy makers and enterprise compliance teams evaluating the environmental impact of major language models

Primary Innovation: They enforce industry accountability by publishing rigorous transparency indexes regarding the specific computing energy sources of massive technology companies.

Deployment Constraint: They function strictly as an auditing and research body rather than a direct provider of commercial hardware or software decarbonization solutions.

6. Lightmatter

By fundamentally redesigning the physical architecture of the artificial intelligence microchip, Lightmatter is practically eliminating the massive thermal cooling requirements of modern data centers. Operating out of Boston, this company utilizes advanced photonic computing. Instead of using traditional electrical transistors to perform the massive matrix multiplications required for machine learning, their specialized chips use microscopic arrays of light. Because light traveling through a waveguide does not incur the resistive losses of current flowing through copper wires, far less energy is dissipated as heat, and their processors operate at a fraction of standard temperatures.

Target Operator: Forward-thinking data center operators experiencing severe power and thermal cooling limitations

Primary Innovation: Their photonic processors utilize arrays of light instead of electrical transistors to perform massive calculations at a fraction of standard operating temperatures.

Deployment Constraint: Photonic computing remains a highly specialized architecture that requires customized software compilers and dedicated backend engineering support.

7. Hugging Face Sustainability and Climate Research

While Hugging Face operates as a global entity, its United States-based research hub in New York has taken the absolute lead in democratizing carbon-aware artificial intelligence for enterprise software developers. They integrate sophisticated environmental impact tools directly into the largest open source model repository in the world.

They provide developers with real-time application programming interfaces to measure the exact carbon cost of both training and running inference on specific open source models.

Target Operator: Enterprise software developers selecting open source models for broad commercial deployment

Primary Innovation: They democratize carbon-aware computing by integrating real-time environmental impact tracking directly next to performance benchmarks on their platform.

Deployment Constraint: The absolute accuracy of their carbon tracking relies heavily on the self-reported energy data of the external hardware providers hosting the specific models.

8. ThirdAI

Based in Houston, this innovative startup is tackling the artificial intelligence energy crisis entirely through highly advanced software architecture and dynamic algorithms. They recognize that graphics processing units are incredibly power-hungry and expensive.

ThirdAI utilizes dynamic sparsity algorithms that allow massive multi-billion parameter neural networks to be trained highly efficiently on standard commercial central processing units. This radically cuts the energy consumed during continuous commercial software development.

Target Operator: Organizations wanting to train large neural networks without investing in expensive, energy-intensive hardware clusters

Primary Innovation: Their dynamic sparsity algorithms allow massive multi-billion parameter models to be trained highly efficiently on standard commercial processors.

Deployment Constraint: Extremely specialized visual rendering and complex spatial tasks may still require traditional graphics processing unit acceleration to function properly.

9. UC Berkeley AI Research (BAIR) Sky Computing Lab

This specialized research group located in Berkeley is pioneering the global logistics of sustainable cloud architecture through highly advanced dynamic orchestration. They specialize in carbon-aware routing for massive cloud providers.

Their research focuses on dynamic cloud orchestration that moves massive training workloads seamlessly across different geographical data centers in real time based on local power grid conditions. This ensures that massive computations are only executed when local green energy is abundant.

Target Operator: Global cloud service providers and hyperscalers managing massive distributed software training workloads

Primary Innovation: They pioneered carbon-aware routing to dynamically shift computing tasks to geographic regions currently generating a surplus of renewable energy.

Deployment Constraint: Real-time geographical workload shifting requires highly sophisticated network orchestration and an absolute minimal data transfer latency to remain effective.
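The routing decision described above, together with its latency constraint, can be sketched as a simple selection rule: among regions that meet a latency budget, pick the one with the lowest current carbon intensity. This is a toy Python illustration, not the lab's orchestration system. The region names, intensities, and latencies are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Region:
    name: str
    carbon_g_per_kwh: float  # current grid carbon intensity (assumed input)
    latency_ms: float        # data transfer latency to this region (assumed input)


def pick_region(regions: List[Region], max_latency_ms: float) -> Optional[Region]:
    """Return the lowest-carbon region within the latency budget, or None."""
    eligible = [r for r in regions if r.latency_ms <= max_latency_ms]
    if not eligible:
        return None
    return min(eligible, key=lambda r: r.carbon_g_per_kwh)


# Hypothetical snapshot of grid conditions; all values are invented.
regions = [
    Region("us-west-solar", carbon_g_per_kwh=90.0, latency_ms=40.0),
    Region("us-central-wind", carbon_g_per_kwh=60.0, latency_ms=120.0),
    Region("us-east-mixed", carbon_g_per_kwh=380.0, latency_ms=15.0),
]

choice = pick_region(regions, max_latency_ms=50.0)
print(choice.name if choice else "no region meets the latency budget")
```

With a 50 ms budget, the cleanest region overall is excluded because it is too far away, which shows why the latency constraint limits how much carbon a scheduler can actually avoid.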

10. Numenta

Headquartered in Redwood City, Numenta applies the highly efficient principles of human neuroscience directly to machine learning architecture. The human brain operates on roughly twenty watts of power while performing tasks that require megawatts of electricity from traditional server farms.

Numenta maps the highly sparse neural structures of the human brain and translates them into commercial software algorithms. This allows existing neural networks to achieve identical accuracy while utilizing a mere fraction of the traditional compute cycles.

Target Operator: Software engineers seeking to radically cut electrical energy consumption during continuous commercial model inference

Primary Innovation: They map highly sparse neural structures from the human brain to achieve identical algorithmic accuracy using a fraction of the compute cycles.

Deployment Constraint: The software requires deep integration into the foundational layers of existing neural network architectures to achieve its maximum operational efficiency.

Comparing Core Decarbonization Technologies

Understanding exactly how these organizations capture efficiency is essential for corporate procurement teams evaluating long-term infrastructure investments. Hardware solutions often require massive capital expenditure, while software optimizations can be deployed rapidly across existing server fleets. This comparative breakdown highlights the core technologies utilized across the sector to transform power-hungry hardware into sustainable assets.

| Sustainable AI Lab | Core Decarbonization Technology | Primary Enterprise Benefit |
| --- | --- | --- |
| Allen Institute for AI | Energy to Accuracy Evaluation Metrics | Standardized software carbon tracking |
| Crusoe Energy Systems | Stranded Methane & EV Battery Microgrids | Completely off-grid compute infrastructure |
| MIT CSAIL | TinyML & Deep Model Compression | Localized edge inference without cloud servers |
| Point2 Technology | Radio Frequency Plastic Waveguides | Reduced internal server cluster energy waste |
| Stanford CRFM | Corporate Ecosystem Transparency Graphs | Regulatory compliance and energy auditing |
| Lightmatter | Photonic Light Array Processors | Elimination of massive thermal cooling systems |
| Hugging Face | Real Time Model Carbon APIs | Democratized developer environmental tracking |
| ThirdAI | Dynamic Sparsity Algorithms | CPU-based training replacing power-hungry GPUs |
| UC Berkeley BAIR | Carbon Aware Cloud Routing | Dynamic workload shifting to renewable grids |
| Numenta | Neuroscience-Based Software Sparsity | Massive reduction in continuous inference energy |

The Economics of Carbon Aware Compute

The transition toward sustainable computing is driven just as much by brutal economic realities as it is by environmental responsibility. Building massive artificial intelligence infrastructure requires balancing steep Capital Expenditures against crippling Operational Expenditures.

In 2026, the costs of electricity and of the millions of gallons of purified water required to cool traditional server farms have skyrocketed. Data center operators are facing severe margin compression if they continue to rely on outdated brute force architectures.

Reducing the thermal output of a microchip directly translates to massive financial savings on facility air conditioning and liquid cooling systems. Furthermore, the global implementation of strict corporate carbon taxation means that running inefficient code carries a heavy financial penalty.

By utilizing dynamic sparsity or photonic processors, companies can drastically lower their operational overhead. This financial reality ensures that green computing is no longer a philanthropic corporate initiative but a baseline requirement for maintaining profitability in the modern software sector.
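A back-of-the-envelope Python sketch shows how cooling overhead and carbon pricing feed into operating cost. It uses power usage effectiveness (PUE), the standard ratio of total facility energy to IT equipment energy, which this article does not otherwise discuss. The function name and every input figure are assumptions for illustration, not market data.

```python
HOURS_PER_YEAR = 8760


def annual_operating_cost_usd(
    it_load_kw: float,
    pue: float,
    price_per_kwh: float,
    grid_g_per_kwh: float,
    carbon_price_per_tonne: float,
) -> float:
    """Yearly electricity cost plus carbon cost for a constant IT load."""
    facility_kwh = it_load_kw * pue * HOURS_PER_YEAR  # IT energy plus cooling overhead
    electricity_cost = facility_kwh * price_per_kwh
    tonnes_co2e = facility_kwh * grid_g_per_kwh / 1_000_000  # grams to tonnes
    return electricity_cost + tonnes_co2e * carbon_price_per_tonne


# Hypothetical facilities: same grid and prices, different load and cooling overhead.
baseline = annual_operating_cost_usd(1000.0, 1.6, 0.10, 400.0, 50.0)
efficient = annual_operating_cost_usd(600.0, 1.2, 0.10, 400.0, 50.0)

print(f"baseline:  ${baseline:,.0f} per year")
print(f"efficient: ${efficient:,.0f} per year")
```

Lower IT load and lower cooling overhead multiply together in this model, so efficiency gains on the chip reduce both the electricity bill and any carbon charge at once.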

Securing a Sustainable Algorithmic Horizon

The integration of artificial intelligence into every facet of the global economy is an irreversible trend. However, the unchecked energy consumption associated with this technological leap threatens to destabilize power grids and accelerate global climate change. The ten laboratories and startups highlighted in this analysis are actively working to prevent that catastrophic outcome by reimagining the very foundation of computational architecture.

By pushing the boundaries of photonic hardware and highly optimized software sparsity, these innovators are securing a sustainable future for the technology industry. They are proving that incredible advancements in machine learning do not have to come at the expense of the global environment. Embracing these advanced decarbonization strategies ensures that the United States remains at the absolute forefront of both artificial intelligence capability and environmental stewardship.

A Note on Methodology: This selection reflects extensive research and technical analysis conducted by our editorial team. While these sustainable AI labs are numbered for structural clarity, this list does not represent a strict hierarchy or a definitive ranking. Each innovator featured here provides distinct, highly valuable breakthroughs within the broader US sustainable computing ecosystem.

Frequently Asked Questions About US Sustainable AI Labs

1. What is the fundamental difference between Red AI and Green AI?

Red AI refers to the process of improving artificial intelligence accuracy by simply throwing massive amounts of computational power and electricity at a problem without regard for the environmental cost. Green AI is a development methodology that prioritizes efficiency and actively measures the environmental carbon footprint of training a model to ensure the computational cost is justifiable.

2. How does model sparsity actually reduce energy consumption?

Traditional dense neural networks include every parameter in every computation, even when a parameter has no effect on the final answer. Model sparsity uses algorithms to identify and skip those parameters. By skipping millions of unnecessary arithmetic operations, the processor does significantly less physical work and uses far less electricity.
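The effect can be seen in a toy Python comparison of a dense and a sparse dot product that counts multiplications. The weights and inputs are invented, and real sparse kernels use precomputed compressed formats rather than Python lists, so treat this as a sketch of the idea only.

```python
from typing import List, Tuple


def dense_dot(weights: List[float], inputs: List[float]) -> Tuple[float, int]:
    """Multiply every weight by its input, including the zeros."""
    total, multiplications = 0.0, 0
    for w, x in zip(weights, inputs):
        total += w * x
        multiplications += 1
    return total, multiplications


def sparse_dot(weights: List[float], inputs: List[float]) -> Tuple[float, int]:
    """Multiply only the nonzero weights, stored as (index, value) pairs."""
    # In a real system this compressed form is built once, ahead of time.
    nonzero = [(i, w) for i, w in enumerate(weights) if w != 0.0]
    total = 0.0
    for i, w in nonzero:
        total += w * inputs[i]
    return total, len(nonzero)


# Hypothetical layer where five of eight weights have been pruned to zero.
weights = [0.5, 0.0, 0.0, -1.0, 0.0, 0.0, 0.0, 2.0]
inputs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]

print(dense_dot(weights, inputs))
print(sparse_dot(weights, inputs))
```

Both functions return the same result, but the sparse version performs three multiplications instead of eight. Scaled up to billions of parameters, that reduction in work is where the energy savings come from.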

3. What exactly is carbon-aware cloud routing?

Carbon-aware cloud routing is an automated logistics system utilized by massive cloud hosting providers. If a data center in California is running entirely on solar power during the day, the software routes heavy artificial intelligence training workloads to that location. When the sun sets, the software dynamically pauses the training and shifts the workload to a data center in Texas, where wind turbines are currently producing surplus green energy.

4. Why do traditional data centers require so much water for cooling?

When traditional graphics processing units perform massive mathematical operations, they generate an extreme amount of heat due to electrical resistance. Data centers must pump millions of gallons of chilled water through specialized cooling towers and server racks to absorb this heat and prevent the hardware from overheating and failing during intensive machine learning tasks.

5. How does photonic computing save electricity in data centers?

Standard microchips use electrical currents traveling through microscopic copper wires to perform calculations, and the resistance of those wires turns much of that energy into heat. Photonic computing replaces those electrical currents with microscopic beams of light traveling through silicon waveguides. Because light in a waveguide does not experience electrical resistance the way electrons in a wire do, the chip operates at a much cooler temperature and requires significantly less electricity to function.

Beyond Brute Force: The New Measure of Intelligence

Let’s take a step back and look at the bigger picture. For years, the tech industry operated as if the digital world had no physical footprint. We built power-hungry algorithms under the assumption that computing resources were infinite, often ignoring the strained power grids required to keep them running.

This turn toward sustainable AI isn’t just a passing corporate trend; it’s a much-needed reality check. What makes the work of these labs so compelling isn’t just the engineering marvel of photonic chips or off-grid servers. It’s a total shift in industry philosophy. We are finally moving past the era of solving problems simply by throwing raw, brute-force compute at them.

Moving forward, the true mark of an advanced AI won’t just be how smart the model is, but how efficiently it operates. The ultimate test is whether we can scale its brilliance without maxing out the planet.