Denmark has long been a global pioneer in digital governance, and by 2026 it has solidified its position as a world leader in responsible AI frameworks. Unlike the “move fast and break things” approach seen elsewhere, the Danish model is built on high trust, strict ethical transparency, and a regulatory environment that turns compliance into a competitive advantage. As most obligations under the EU AI Act begin to apply in August 2026, Danish companies are already ahead of the curve, showing that ethics and innovation can thrive together.

How We Selected Our 11 Facts About Danish Responsible AI Frameworks

To identify the most “eye-opening” facts for 2026, we analysed the latest Strategic Approach to Artificial Intelligence from the Danish Ministry of Digital Affairs and the annual reports of the Danish Data Ethics Council. We prioritised initiatives that demonstrate a clear shift from theory to practice—specifically focusing on the D-Seal certification, the development of sovereign Danish language models, and the world’s first mandatory data ethics reporting laws. These facts represent the practical tools Danish firms are using to scale AI without losing the trust of their citizens.

11 Eye-Opening Facts About Danish Responsible AI Frameworks

The Danish approach is defined by “Trust by Design.” Here are the eleven facts that explain how this small nation is setting the global standard for the machine-intelligent age.

1. The World’s First Statutory Data Ethics Reporting

Denmark is the first country to turn data ethics from a “nice-to-have” into a legal requirement. Under the Danish Financial Statements Act, the nation’s largest companies are required to include a statement on their data ethics policy in their annual reports. A company that does not have a policy must explain why. This “comply or explain” model forced AI ethics into the Danish C-suite years before the rest of the world caught up.

Best for:

-

Corporate governance officers and ESG investors.

Why We Chose It:

-

It is the bedrock of the Danish responsible AI model.

-

It ensures that AI ethics is a board-level conversation, not just a technical one.

-

It provides a public benchmark for comparing corporate responsibility.

Things to consider:

-

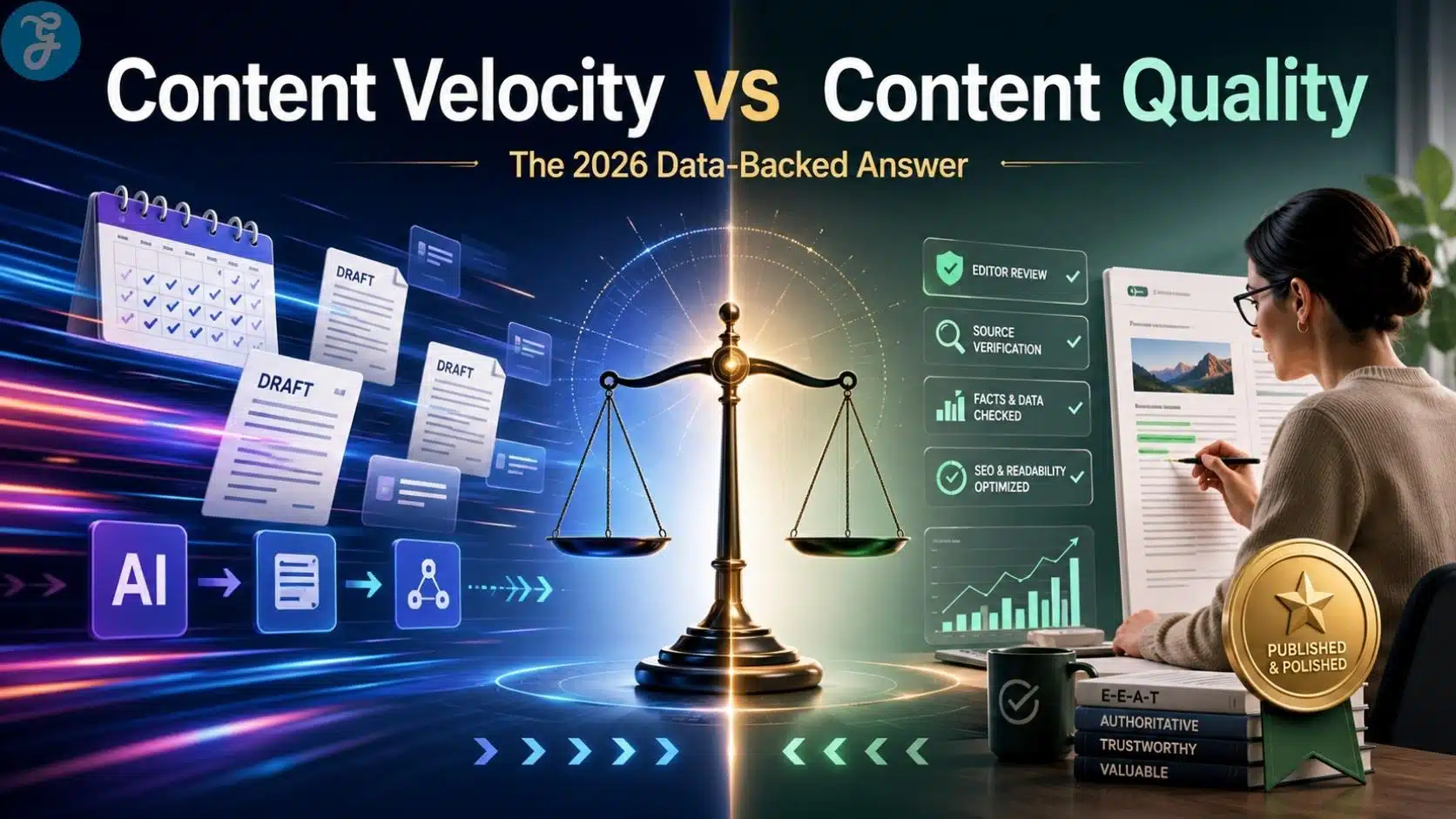

The quality of reports varies; 2026 is seeing a shift toward more “data-backed” evidence rather than vague promises.

2. The D-Seal: A “Fairtrade” Label for AI

In 2026, the D-Seal has become the gold standard for digital trust. Developed by the Confederation of Danish Industry and the Danish Chamber of Commerce, this independent labelling scheme certifies that a company handles data and AI in a trustworthy and secure way. Much like a “Fairtrade” or “Organic” label, it gives consumers instant confidence that the AI they are interacting with has been audited for bias and security.

Best for:

- B2C companies looking to differentiate themselves through trust.

Why We Chose It:

- It turns a complex technical topic into a simple, consumer-facing mark of quality.
- It is a collaborative effort between government, industry, and consumer groups.
- It provides a clear roadmap for SMEs to achieve high-level compliance.

Things to consider:

- Maintaining the seal requires annual audits, making it a “living” commitment.

3. Sovereign Danish Language Models (LLMs)

To avoid the inherent biases and cultural “hallucinations” of US-centric models, Denmark is building its own Sovereign LLMs. By 2026, the government and private sector have collaborated to launch high-quality, transparent language models trained specifically on Danish data and values. This ensures that AI used in Danish healthcare or law understands the nuances of local language and culture without “importing” external biases.

Best for:

- Public sector agencies and local tech developers.

Why We Chose It:

- It protects “Digital Sovereignty” and ensures cultural accuracy.
- The training data is open-source and transparent, a key requirement for “Responsible AI.”
- It provides a safe foundation for local firms to build niche applications.

Things to consider:

- Local models require significant “green” compute power to remain sustainable.

4. Regulatory AI Sandboxes for “High-Risk” Systems

The Danish Business Authority has expanded its regulatory sandboxes to help firms navigate the EU AI Act. In these safe spaces, companies can test “high-risk” AI systems—such as those used in recruitment or credit scoring—under the direct guidance of regulators. This prevents legal bottlenecks, allowing companies to innovate while ensuring their frameworks are ironclad before they hit the mass market.

Best for:

- Fintech and HR-tech start-ups.

Why We Chose It:

- It moves regulation from “punishment” to “partnership.”
- It provides companies with direct access to legal experts during the development phase.
- It is a core part of the Danish responsible AI frameworks and speeds up time-to-market.

Things to consider:

- Admission to the sandbox is competitive and requires a high baseline of technical maturity.

5. The “Human-in-the-Loop” Operational Mandate

A non-negotiable pillar of Danish AI policy is Human Autonomy. By 2026, any AI system used for significant decisions in Denmark—whether in public welfare or private banking—must have a clear “Human-in-the-loop” mechanism. The frameworks are designed so that the AI suggests, but the human decides. This preserves dignity and ensures that automated systems never override democratic values.

Best for:

- Public administration and high-stakes financial services.

Why We Chose It:

- It is the most effective safeguard against “algorithmic cruelty.”
- It maintains the “high-trust” relationship between citizens and institutions.
- It is a key requirement for the “Human-centred” pillar of the national strategy.

Things to consider:

- Human oversight must be meaningful; “rubber-stamping” AI decisions is actively discouraged by auditors.
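To make the “AI suggests, human decides” principle concrete, here is a minimal Python sketch. It is an illustration under our own assumptions, not a description of any Danish system: the class names, the fields, and the rule that a reviewer must write a rationale are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    """What the model proposes; it never takes effect on its own."""
    case_id: str
    proposed_outcome: str
    model_confidence: float


@dataclass(frozen=True)
class HumanReview:
    """The reviewer's own decision plus a written rationale (hypothetical fields)."""
    reviewer: str
    outcome: str
    rationale: str


def finalise(suggestion: Suggestion, review: Optional[HumanReview]) -> dict:
    """Return a decision record only when a human has actually decided."""
    if review is None:
        raise PermissionError("no human review: the suggestion cannot take effect")
    if not review.rationale.strip():
        # Assumption: an empty rationale is treated as a sign of rubber-stamping.
        raise ValueError("a written rationale is required")
    return {
        "case_id": suggestion.case_id,
        "outcome": review.outcome,  # the human's outcome, not the model's
        "decided_by": review.reviewer,
        "overrode_model": review.outcome != suggestion.proposed_outcome,
        "rationale": review.rationale,
    }


# Usage: the model suggests a rejection, the caseworker approves instead.
suggestion = Suggestion("case-001", "reject", 0.91)
review = HumanReview("caseworker-17", "approve", "Income documents were misread.")
record = finalise(suggestion, review)
print(record["outcome"], record["overrode_model"])
```

The design choice worth noting is that the final outcome is always read from the human review, so the model's suggestion can inform a decision but cannot become one by default.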

6. Agentic AI Is Designed as a “Co-Pilot,” Not a Replacement

While the world fears AI replacement, Danish firms are pivoting toward Agentic AI as a “co-worker.” By 2026, frameworks in companies like Maersk and Novo Nordisk focus on agents that handle complex, data-heavy workflows while leaving creative and ethical judgment to humans. The goal is “Augmentation over Automation,” a philosophy that has kept Danish labour unions supportive of AI adoption.

Best for:

-

Large-scale industrial and logistical operations.

Why We Chose It:

-

It addresses labour shortages without creating mass unemployment.

-

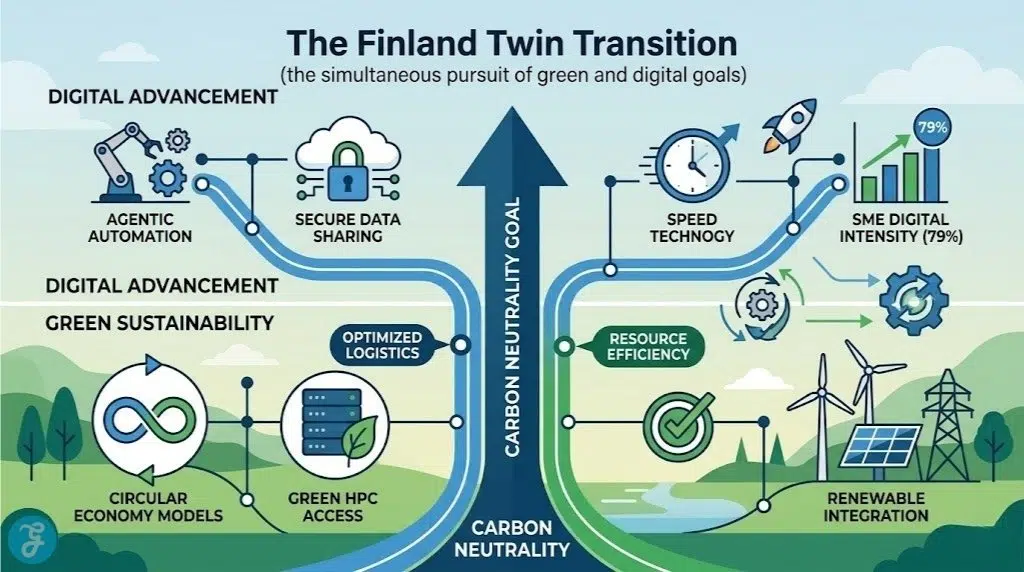

It focuses on the “Twin Transition”—making digital tools support green goals.

-

It aligns AI development with the Danish model of worker-employer collaboration.

Things to consider:

-

Successfully deploying agents requires massive internal re-skilling programs.

7. Real-Time AI Monitoring for Business Integrity

The Danish Business Authority uses AI to monitor the Central Business Register (CVR) in real time to detect fraud. However, its framework is “responsible-by-design”: it is trained to identify risks without bothering reliable businesses. This “precision oversight” is a prime example of how the state uses the same tools it regulates to improve its own efficiency and integrity.

Best for:

- Public sector innovators and regulatory tech (RegTech) firms.

Why We Chose It:

- It demonstrates the “practice what you preach” approach of the Danish government.
- It shows how AI can protect a “responsible business environment.”
- It reduces administrative burden while increasing security.

Things to consider:

- The algorithms used for monitoring must themselves be subject to the Data Ethics Council’s review.

8. The Independent Data Ethics Council

Established in 2019, the Danish Data Ethics Council is an independent body that advises the government and the public on the ethical use of data. By 2026, it has become a powerful watchdog, frequently issuing “red line” guidance on topics like facial recognition and synthetic data. Its existence ensures that the Danish responsible AI frameworks are constantly updated to reflect new societal concerns.

Best for:

- Policy makers and legal researchers.

Why We Chose It:

- It provides a “moral compass” that is independent of political cycles.
- It fosters public debate, ensuring AI doesn’t happen “behind closed doors.”
- It has been a model for similar councils across the EU.

Things to consider:

- Its recommendations are not legally binding but carry significant “reputational weight.”

9. Linking AI to the “Green Digital Transition”

In Denmark, you cannot talk about AI without talking about the climate. The national roadmap mandates that AI development must support the Green Transition. Frameworks now include “carbon-tracking” for AI models, prioritising projects that optimise energy grids or reduce waste in the “circular economy.” If an AI solution isn’t green, it’s not considered “responsible” in the Danish market.

Best for:

- CleanTech start-ups and sustainability officers.

Why We Chose It:

- It aligns AI with Denmark’s most critical national goal: carbon neutrality.
- It turns “efficiency” from a financial metric into an environmental one.
- It helps Danish tech firms win contracts in a climate-conscious global market.

Things to consider:

- Large AI models consume significant water and power; cooling them with renewable fjord water is a growing trend.
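As a rough illustration of what “carbon-tracking” for an AI model can involve, the Python sketch below estimates emissions as energy used multiplied by the carbon intensity of the electricity. This is a common back-of-the-envelope approach, not a method prescribed by the Danish roadmap, and every number and field name in the example is a made-up placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingRun:
    """Hypothetical inputs for a single model training run."""
    accelerator_hours: float    # total device-hours used
    avg_power_kw: float         # average draw per device, in kW
    pue: float                  # data-centre power usage effectiveness (>= 1.0)
    grid_g_co2_per_kwh: float   # carbon intensity of the electricity used


def energy_kwh(run: TrainingRun) -> float:
    """Device energy scaled by PUE to include cooling and other overhead."""
    return run.accelerator_hours * run.avg_power_kw * run.pue


def emissions_kg(run: TrainingRun) -> float:
    """Energy (kWh) times grid intensity (g CO2/kWh), converted to kg."""
    return energy_kwh(run) * run.grid_g_co2_per_kwh / 1000.0


# Placeholder figures chosen only to keep the arithmetic easy to follow.
run = TrainingRun(accelerator_hours=1000, avg_power_kw=0.4, pue=1.2,
                  grid_g_co2_per_kwh=100)
print(f"{energy_kwh(run):.1f} kWh, {emissions_kg(run):.1f} kg CO2")
```

With these placeholder inputs the run uses about 480 kWh and emits about 48 kg of CO2; a real tracker would replace the averages with measured power data and the grid intensity at the time of use.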

10. Transparency in Automated Decision-Making (ADM)

By 2026, Danish law requires that any citizen affected by an Automated Decision-Making system has the right to an explanation. This “Right to Explanation” is embedded in the Joint Government Digital Strategy, ensuring that if a public service is denied or a health benefit is calculated by AI, the logic behind that decision is transparent and contestable.

Best for:

- Citizen advocacy groups and public sector developers.

Why We Chose It:

- It is the ultimate shield against “black box” governance.
- It forces developers to build “Explainable AI” (XAI) from the ground up.
- It protects civil liberties in an increasingly automated world.

Things to consider:

- Explanations must be in plain language, not just technical code.
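One simple way to keep explanations in plain language is to map each internal reason code to a sentence a citizen can read. The Python sketch below shows that idea only; the reason codes, the wording, and the `explain` function are hypothetical and are not taken from any Danish public system.

```python
from typing import Sequence

# Hypothetical reason codes mapped to plain-language sentences.
REASON_TEXT = {
    "INCOME_ABOVE_LIMIT": "Your reported income is above the limit for this benefit.",
    "MISSING_DOCUMENT": "A required document was not received.",
}


def explain(outcome: str, reason_codes: Sequence[str]) -> str:
    """Build a plain-language explanation for an automated decision."""
    if not reason_codes:
        raise ValueError("a decision must carry at least one reason")
    lines = [f"Decision: {outcome}.", "Why:"]
    for code in reason_codes:
        # A KeyError here means a reason has no plain-language text yet,
        # so the explanation cannot be issued until one is written.
        lines.append(f"- {REASON_TEXT[code]}")
    lines.append("You can ask for a caseworker to review this decision.")
    return "\n".join(lines)


letter = explain("Application declined", ["INCOME_ABOVE_LIMIT"])
print(letter)
```

Failing loudly on an unknown code is a deliberate choice in this sketch: it prevents a decision from being sent out with a reason that only a developer could interpret.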

11. The Digital AI Taskforce for Large-Scale Rollout

To move from “pilot” to “production,” Denmark launched the Digital AI Taskforce. By 2026, this taskforce is responsible for scaling AI across municipalities and regions. Its framework focuses on “reusability”: ensuring that a responsible AI solution developed in Copenhagen can be safely deployed in Aarhus. This “build once, use everywhere” approach is meant to make Denmark one of the most efficient adopters of AI in the world.

Best for:

- Municipal leaders and public-private partnership (PPP) investors.

Why We Chose It:

- It prevents fragmented, “siloed” AI development.
- It ensures that even small municipalities have access to “state-of-the-art” responsible AI.
- It focuses on welfare, health, and employment, the core of the Danish social model.

Things to consider:

- Scaling requires high-quality, standardised data across all regions.

An Overview Of Danish Responsible AI Frameworks

The Danish model is a “team effort” between the state, private industry, and independent watchdogs.

Overview Comparison Table

| Component | 2026 Role | Core Philosophy | Primary Outcome |
| --- | --- | --- | --- |
| D-Seal | Industry Certification | Trust-by-Design | Consumer Confidence |
| Data Ethics Law | Statutory Reporting | Comply or Explain | Boardroom Accountability |
| AI Sandbox | Regulatory Support | Innovate Safely | Legal Certainty |
| Danish LLM | Cultural Infrastructure | Sovereign Accuracy | Bias Reduction |

Our Top 3 Picks and Why

- The World-First Data Ethics Reporting Law: This is our top pick because it is the most aggressive and effective way to ensure AI ethics is taken seriously at the highest levels of business.
- The D-Seal Certification: We chose this as the runner-up because it provides a practical, market-driven incentive for companies to do the right thing.
- Sovereign Danish Language Models: This takes the third spot because it represents a major move toward “Digital Sovereignty” and protects the nation’s cultural integrity from global tech giants.

Securing Trust in a Machine-Intelligent Age

Danish companies aren’t just building AI; they are building Danish responsible AI frameworks that protect the social contract. By 2026, the Danish model has proven that transparency is not a burden—it is a superpower. For businesses looking to thrive in the 2020s, the lesson from Denmark is clear: the only way to scale AI is to build it on a foundation of absolute trust.