Managing a high-volume content roadmap in 2026 means facing a hard truth: evaluating AI image tool cost-per-output is no longer just about comparing monthly subscription fees. As audiences experience unprecedented digital burnout and generative search engines demand higher semantic precision, the imagery we attach to our narratives must work harder than ever.

We are moving past the era where simply generating an image was enough; today, establishing topical authority requires cinematic, 4K featured images that capture attention without adding visual clutter. To achieve this at scale, we must analyze these platforms as critical backend assets that directly impact our operating margins and editorial efficiency.

Transitioning from basic cost analysis to true ROI requires dissecting the hidden operational expenses that quietly drain content budgets.

The Hidden Economics of Visual Assets

Integrating generative AI into a high-volume digital publishing workflow demands a multidimensional approach to cost. The baseline API fee is only the starting point; the ultimate economic impact is determined by how well a model mitigates manual labor, aligns with sustainability targets, and drives organic discovery.

Evaluating these layers allows us to see the true cost of our visual infrastructure.

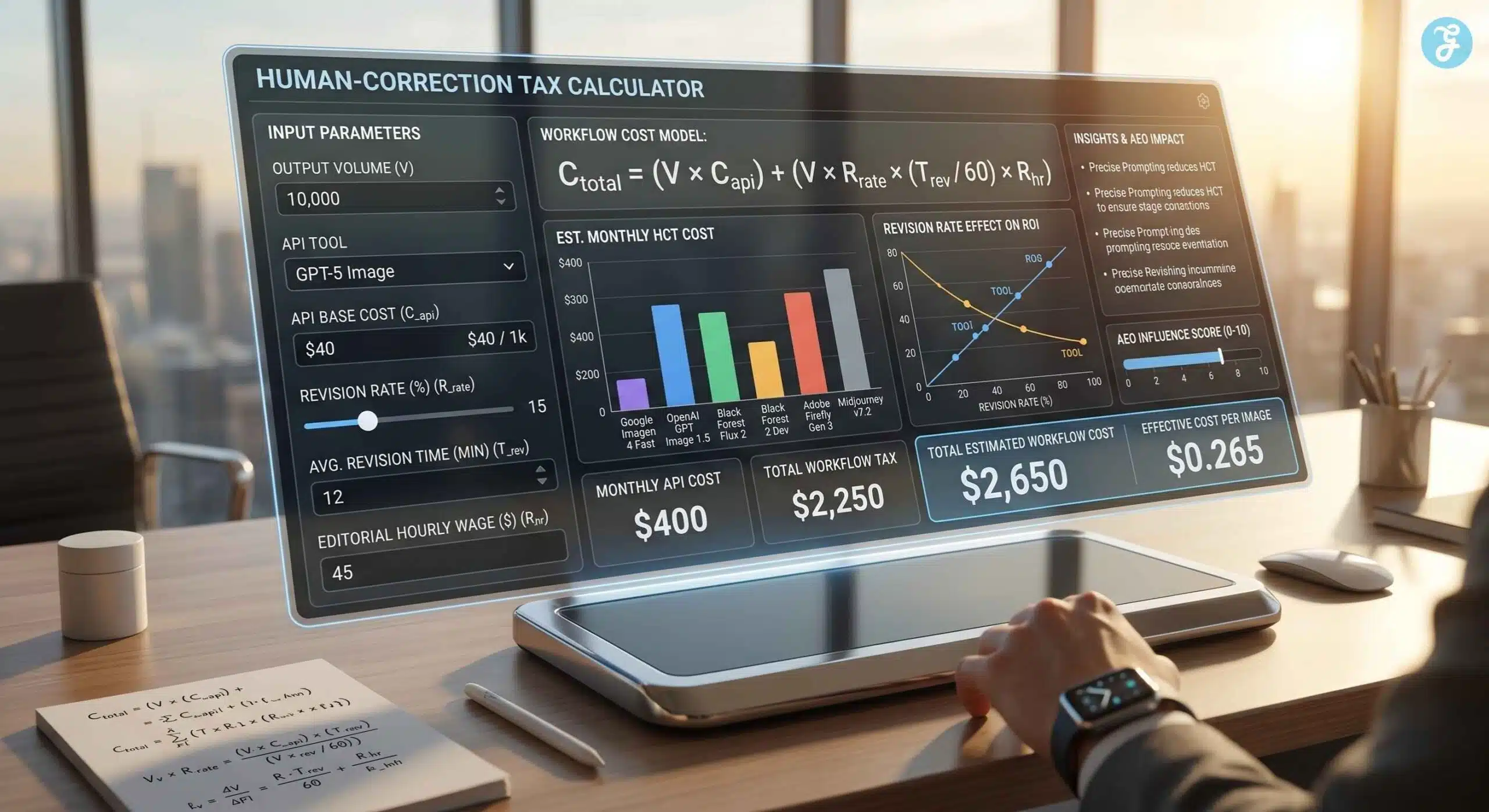

The Human-Correction Tax Formula

The most significant hidden expense in high-volume generative content is the “Human-Correction Tax.” When a model fails to adhere to semantic prompts or brand guidelines, the cost of manual editorial revision quickly outpaces the raw API fee. To accurately project operational expenses, you must calculate the total cost of production.

The fundamental calculation for total asset cost is expressed as:

$$C_{total} = (V \times C_{api}) + \left(V \times R_{rate} \times \frac{T_{rev}}{60} \times R_{hr}\right)$$

Where:

- $V$ is the total volume of images generated.

- $C_{api}$ is the direct API cost per image.

- $R_{rate}$ is the revision rate: the share of images requiring human edits, expressed as a fraction (0.20 for 20%).

- $T_{rev}$ is the average time spent revising a single image (in minutes).

- $R_{hr}$ is the editorial hourly wage.
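
The formula can be sketched in a few lines of Python. This is a minimal illustration rather than a production calculator: the function name `total_asset_cost` and every input in the sample batch (the 20% revision rate, the 6-minute fix, the $40 hourly wage) are assumed values chosen only to show the arithmetic.

```python
def total_asset_cost(volume, api_cost, revision_rate, revision_minutes, hourly_wage):
    """Total production cost: API spend plus human-correction labor."""
    api_spend = volume * api_cost
    # revision_rate is a fraction (0.20 = 20%); minutes are converted to hours.
    correction_labor = volume * revision_rate * (revision_minutes / 60) * hourly_wage
    return api_spend + correction_labor


# Hypothetical batch: 1,000 images at $0.06 each, 20% needing a 6-minute fix
# by an editor paid $40/hour. All workflow inputs here are assumptions.
batch_total = total_asset_cost(
    volume=1000,
    api_cost=0.06,
    revision_rate=0.20,
    revision_minutes=6,
    hourly_wage=40.0,
)
print(f"Total: ${batch_total:,.2f}")
print(f"Per image: ${batch_total / 1000:.3f}")
```

Under these assumed inputs, correction labor (about $800) is more than ten times the API spend (about $60), which is why the revision rate deserves as much scrutiny as the price list.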

By factoring in the revision rate, models with slightly higher upfront API costs but superior prompt adherence often yield a lower effective cost-per-usable-output. Moving beyond labor costs, enterprise workflows must also account for their environmental footprint.


Sustainability and Compute Metrics: The New Corporate Mandate

As enterprise workflows scale, evaluating the environmental impact of AI models has transitioned from an ethical bonus to a strict vendor requirement. Generating complex visual assets demands massive compute power, primarily driven by high-end GPUs. For 2026, the energy efficiency per output is a critical differentiator.

Efficient models running on optimized architecture can process queries using roughly 0.3 watt-hours, whereas heavier models requiring lengthy reasoning chains consume significantly more energy. Selecting a provider that transparently reports its carbon intensity and offers “Green Label” compliance ensures that your content operations do not contradict broader corporate sustainability goals.
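
To turn a per-output energy figure into something a sustainability report can use, a rough conversion is enough. The sketch below treats the 0.3 watt-hour figure as energy per generated image, which is an assumption about how that number is scoped. The grid carbon intensity of 0.4 kg CO2 per kWh is a placeholder as well; substitute the value your provider or region actually reports.

```python
WH_PER_IMAGE = 0.3  # efficient-model figure quoted in the text, assumed per image


def batch_energy_kwh(volume, wh_per_image=WH_PER_IMAGE):
    """Convert a per-image energy figure (watt-hours) into kWh for a batch."""
    return volume * wh_per_image / 1000


def batch_emissions_kg(volume, grid_kg_co2_per_kwh, wh_per_image=WH_PER_IMAGE):
    """Estimate batch emissions from energy use and grid carbon intensity."""
    return batch_energy_kwh(volume, wh_per_image) * grid_kg_co2_per_kwh


# Hypothetical batch of 10,000 images on a grid at 0.4 kg CO2/kWh (placeholder).
energy = batch_energy_kwh(10_000)
emissions = batch_emissions_kg(10_000, grid_kg_co2_per_kwh=0.4)
print(f"{energy:.2f} kWh, {emissions:.2f} kg CO2")
```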

AEO Influence Score

Answer Engine Optimization (AEO) dictates that digital assets must serve as precise, machine-readable answers. The ROI of an AI image tool is heavily influenced by how effectively its outputs, and the metadata wrapped around them, can be parsed by generative search interfaces.

Models that natively generate highly descriptive, semantically dense alt-text alongside the visual asset provide a distinct AEO advantage. This semantic clarity allows AI search interfaces to confidently pull these high-resolution images into localized visual summaries, transforming a standard graphic into a high-converting citation.
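
One common way to make an image and its description machine-readable is schema.org `ImageObject` markup serialized as JSON-LD. The sketch below is an assumption about how such metadata might be assembled in a publishing pipeline, not a description of what any image model emits natively. The helper name `image_jsonld`, the example URL, and the sample text are all hypothetical.

```python
import json


def image_jsonld(content_url, name, alt_text, caption):
    """Build a schema.org ImageObject as a JSON-LD-ready dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "name": name,
        "description": alt_text,  # the semantically dense alt-text goes here
        "caption": caption,
    }


# Hypothetical asset; the URL and wording are placeholders.
doc = image_jsonld(
    content_url="https://example.com/images/roi-matrix-cover.png",
    name="AI image pricing and ROI matrix cover",
    alt_text="Cinematic 4K cover comparing per-image API pricing across five platforms",
    caption="Featured image for the 2026 cost-per-output analysis",
)
print(json.dumps(doc, indent=2))
```

The serialized output would typically be embedded in a `<script type="application/ld+json">` tag alongside the image's regular `alt` attribute.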

Understanding these economic and technical metrics allows us to properly evaluate the leading platforms on the market.

The 2026 Market Leaders: Cost and Capability Breakdown

To maximize the return on investment for upcoming content batches, analyzing the specific strengths and operational hurdles of the current leading platforms is essential. Choosing the right tool requires matching its core capabilities with your specific architectural and editorial needs.

Here is a breakdown of how the top contenders perform in a high-volume environment utilizing their most advanced 2026 architectures.

Google Imagen 4 Ultra

When balancing high-end visual fidelity with the need for robust, enterprise-level infrastructure, Google’s top-tier model provides a highly stable backbone.

Primary Application: Large-scale digital publishing workflows requiring a blend of flagship aesthetic quality and reliable API infrastructure.

Strategic Value: As the most advanced model in the Imagen 4 family, Ultra balances enterprise-grade scalability with exceptional 4K fidelity, making it a powerhouse for consistent batch generation across a massive content roadmap.

Operational Challenges: The higher compute cost compared to Google’s lighter models requires precise API parameter tuning to ensure budget predictability during high-volume spikes.

OpenAI GPT Image 2

Released in late April 2026, this model is a complete departure from the DALL-E era. It is a native multimodal foundation model that uses the same reasoning pipeline as ChatGPT’s GPT-5.4 backbone.

Primary Application: Professional infographics, complex layouts (menus, posters), and text-heavy editorial assets.

Strategic Advantage: It offers “magazine-grade” design with near-perfect typography (~99% accuracy) and supports 4K resolution. It features a “Thinking Mode” where the model acts as a visual thought partner, reasoning through the image structure before rendering.

Operational Challenges: Access to advanced reasoning features is currently restricted to ChatGPT Pro and Enterprise tiers, requiring careful management of specialized API credits.

Midjourney V8.1

For featured images that must resonate on a human level, countering algorithmic fatigue with deliberate, cinematic quality, this platform remains the benchmark for premium editorial engagement.

Primary Application: High-impact editorial covers and visual storytelling emphasizing modern, glassmorphism, and premium design aesthetics.

Strategic Value: Upgraded in the spring of 2026, V8.1 remains the benchmark for photorealistic and artistic visualization. Its visual fidelity drives higher click-through rates and social sharing, justifying the operational overhead for flagship pieces.

Operational Challenges: Navigating its ecosystem outside of a streamlined enterprise API interface still complicates automated backend publishing pipelines, often requiring manual intervention.

Adobe Firefly Image Model 5

In environments where strict commercial licensing intersects with brand identity, legal safety is just as valuable as generation speed.

Primary Application: B2B SaaS workflows and enterprise content ecosystems where commercial safety, copyright compliance, and brand alignment are mandatory.

Strategic Value: Firefly Image Model 5 guarantees commercial safety and offers legal indemnification while closing the aesthetic gap with its competitors. It completely eliminates the hidden legal friction associated with synthetic media, protecting the platform’s long-term topical authority.

Operational Challenges: The model occasionally prioritizes its strict safety and copyright filters over extreme creative variance, making it slightly less versatile for highly stylized, avant-garde editorial covers.

Black Forest Labs FLUX.2 [max]

When building proprietary infrastructure, relying entirely on closed ecosystems can create long-term bottlenecks. FLUX.2 [max] provides the ultimate developer control.

Primary Application: Custom backend integrations, specifically when routing generation through a custom microservice or a monorepo architecture.

Strategic Value: As the most advanced model in the FLUX.2 family, [max] bridges the gap between high-end aesthetic generation and absolute architectural control. It allows technical teams to fine-tune the pipeline for specific, highly consistent brand aesthetics without paying premium commercial API markups.

Operational Challenges: Maximizing its potential requires a robust technical foundation and active developer management compared to plug-and-play commercial endpoints.

The 2026 API Image Pricing and ROI Matrix

To accurately evaluate the return on investment, reviewing the direct API costs alongside platform latency provides the clearest picture of scaling potential with these newly updated models.

| Platform & Tier | Direct Cost (per 1,000 images) | Avg. Latency | Strategic Advantage |
| --- | --- | --- | --- |
| Google Imagen 4 Ultra | $60 | 4.1s | Enterprise scalability with flagship 4K fidelity |
| OpenAI GPT Image 2 | $53 (Med) / $211 (High) | 3.8s | Near-perfect text and reasoning-driven layouts |
| Black Forest FLUX.2 [max] | $70 | 3.2s | Maximum developer control and open-weight flexibility |
| Adobe Firefly Image Model 5 | $30 | 2.5s | Absolute commercial brand safety and indemnification |
| Midjourney V8.1 | $75 (Est.) | 35.0s | Unrivaled cinematic fidelity for premium editorial assets |
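
The matrix figures translate directly into per-image and per-batch numbers. The sketch below copies the prices and latencies from the table. The 5,000-image batch size is an assumed example, and the sequential-time estimate assumes images are generated one at a time, which real pipelines would usually improve on with concurrency. The table gives one latency for GPT Image 2, so both tiers reuse it here.

```python
# (USD per 1,000 images, average latency in seconds), copied from the matrix.
PRICING = {
    "Google Imagen 4 Ultra": (60, 4.1),
    "OpenAI GPT Image 2 (Med)": (53, 3.8),
    "OpenAI GPT Image 2 (High)": (211, 3.8),
    "Black Forest FLUX.2 [max]": (70, 3.2),
    "Adobe Firefly Image Model 5": (30, 2.5),
    "Midjourney V8.1": (75, 35.0),  # listed as an estimate in the table
}


def cost_per_image(platform):
    per_1k, _ = PRICING[platform]
    return per_1k / 1000


def batch_cost(platform, volume):
    return cost_per_image(platform) * volume


def sequential_hours(platform, volume):
    """Wall-clock hours if images are generated one at a time."""
    _, latency_s = PRICING[platform]
    return latency_s * volume / 3600


# Rank platforms by direct cost for a hypothetical 5,000-image batch.
for platform in sorted(PRICING, key=cost_per_image):
    print(f"{platform:30s} ${batch_cost(platform, 5000):>8,.2f}  "
          f"{sequential_hours(platform, 5000):5.1f} h")
```

These are direct costs only; they exclude the correction labor discussed earlier, so the ranking can change once revision rates are factored in.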

Beyond the API: The Human Cost of Visual Friction

Stepping back from the raw data and API benchmarks, managing a 2026 content roadmap reveals a deeper truth about modern publishing: we are constantly fighting digital burnout. When every platform can instantly generate thousands of synthetic images, the actual value of an asset shifts from its mere existence to its intentionality. The most profound realization this year isn’t about which model is a fraction of a cent cheaper; it is about how visual friction impacts the human editorial team.

If a developer spends hours tweaking a backend microservice to handle prompt errors, or an editor manually corrects bizarre artifacts just to meet a deadline, the tool is failing its primary purpose. Audiences are inherently seeking grounding elements, craving the deliberate imperfection of handwriting or the clean, uncluttered space of Swiss design, to counter the overwhelming algorithmic noise.

Therefore, selecting an AI image generation partner is fundamentally an architectural decision that dictates a brand’s visual soul. True operational efficiency is achieved only when the technology operates so seamlessly within our workflows that editors can return their focus entirely to the narrative, trusting the visual equity to support, rather than distract from, the core story.

The Micro-Joy Factor: Aesthetic ROI

In a wellness-driven economy, the “vibe” of an image is a financial metric. In 2026, the contrast between “obviously AI” and “artistically directed” has a measurable impact on brand loyalty. Using glassmorphism, minimalist layouts, and cinematic lighting doesn’t just look better; it reduces the cognitive load on the reader.

This “Micro-Joy” aesthetic is a strategic hedge against the $5 trillion wellness industry’s move away from digital clutter. When images feel like a curated gallery rather than a stock repository, user dwell time increases, directly improving your search authority.

Personal Insight: The Intentionality Factor

The paradox of 2026 is that while we have the tools to generate infinite content, the market has never been more starved for intentionality. I see a clear divide forming between “content farms” that optimize for the lowest API cost and “authority hubs” that optimize for the highest semantic and aesthetic value.

For a high-level publishing operation, the goal isn’t just to fill a 16:9 frame; it is to build a visual language that feels as deliberate as a handwritten note in a world of automated scripts. This differentiation is the only way to truly combat the digital burnout that defines our current era.

When users are bombarded with generic synthetic imagery, their eyes glaze over. They crave the “Micro-Joy” found in a well-crafted graphic that actually adds value to the narrative. By prioritizing aesthetic rigor and cinematic quality, we aren’t just making things look “pretty”; we are signaling to both the reader and the search algorithms that this content was created with profound care.

This intentionality becomes the cornerstone of topical authority, separating those who simply produce noise from those who build a lasting legacy.

Architecting the Future of Visual Publishing

True ROI is found by eliminating the “friction-to-publish.” Integrating these tools directly into a custom microservice like Imagine Lab removes the manual bottlenecks that kill creative momentum. This structural shift allows us to focus on what humans and search engines actually value: unique perspectives and trusted authority.

We are no longer just publishers; we are curators of a digital experience. Whether leveraging Google Imagen’s scalability or OpenAI’s semantic precision, an AI tool’s ultimate value lies in erasing the gap between the initial editorial concept and the final published artifact. If our visual strategy creates friction, we leave topical authority on the table. The future belongs to those who use AI to amplify their humanity, not replace it.

Frequently Asked Questions: AI Image Tool Cost-Per-Output

1. What is the average cost-per-output for AI image generation APIs in 2026?

The market has definitively shifted toward reasoning-powered flagship models. As of May 2026, high-fidelity architectures like Google Imagen 4 Ultra average $0.06 per output ($60 per 1,000 images), while OpenAI’s GPT Image 2 standard outputs start around $0.053. When planning a high-volume editorial roadmap, budgeting between $35 and $75 per 1,000 images ensures you can leverage the latest semantic and cinematic capabilities of models like FLUX.2 [max] and Midjourney V8.1.

2. How do you accurately calculate the ROI of AI-generated images?

Basic ROI simply compares direct API fees to traditional stock photography costs. However, a true 2026 calculation must incorporate the Human-Correction Tax. You calculate the total workflow cost by adding the direct API spend to the correction labor: the share of images that need revision, multiplied by the time spent revising each one and by your editorial team’s hourly rate. High-performance models like GPT Image 2 may carry higher upfront compute costs, but if their stronger semantic adherence lowers the revision rate, they reduce editorial friction and can yield a much higher total operational ROI.
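
The trade-off can be reduced to a per-image break-even condition. The following is an illustrative derivation, not a vendor benchmark: A (cheaper API) and B (pricier API) are hypothetical labels, and the 6-minute revision time and $40 hourly wage are assumed values. Dividing the total cost by the volume gives the cost per image:

$$c = C_{api} + R_{rate} \times \frac{T_{rev}}{60} \times R_{hr}$$

Model B is cheaper per image than model A when its API premium is smaller than the labor it saves:

$$C_{api}^{B} - C_{api}^{A} < \left(R_{rate}^{A} - R_{rate}^{B}\right) \times \frac{T_{rev}}{60} \times R_{hr}$$

With $T_{rev} = 6$ and $R_{hr} = 40$, each revised image costs $\frac{6}{60} \times 40 = 4$ dollars of labor. A premium of 0.158 dollars per image (the gap between a 0.053 and a 0.211 price point) is therefore recovered once the revision rate falls by more than $\frac{0.158}{4} \approx 0.04$, or about four percentage points.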

3. How does the choice of an AI image tool impact Answer Engine Optimization (AEO)?

AEO in 2026 is driven by native vision reasoning and structured data. Search interfaces like ChatGPT and Google’s AI Overviews prioritize visual assets that act as precise, verifiable answers. AI tools that natively generate rich, semantically dense metadata and integrate seamlessly with answer-first content formats offer a massive structural advantage. Utilizing high-resolution, cinematic images from Midjourney V8.1, paired with structured, AI-readable context, ensures your assets are actively cited in zero-click environments.

4. Why is sustainability becoming a core metric for AI content operations?

Generating 4K visuals at scale is highly compute-intensive, and the global electricity demand from AI is projected to exceed the consumption of entire countries like Belgium by 2026. Consequently, “Green AI” is no longer optional for enterprise workflows. Selecting models from providers that transparently report both carbon intensity and water footprint metrics per 1k generations is essential. This ensures that scaling microservices like Imagine Lab complies with strict corporate “Green Label” mandates and modern environmental accounting standards.

5. Is it more cost-effective to use an API or a standard subscription tier?

For analysts managing high-volume publishing roadmaps, API access is the only viable path. While standard subscriptions work for individual creators, routing generation directly through a NestJS monorepo using the FLUX.2 [max] or Imagen 4 Ultra APIs allows for automated, concurrent batch processing. This eliminates the manual bottlenecks of closed web interfaces and provides the precise technical parameter control needed to maintain consistent brand aesthetics at scale.
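
Concurrent batch processing usually comes down to a bounded worker pattern: many requests in flight, but never more than the provider's rate limit allows. The sketch below shows that pattern in Python with `asyncio` rather than in NestJS, purely for illustration. `generate_image` is a hypothetical stand-in that only simulates latency; it is not any provider's real client, and the concurrency limit of 5 is an assumed value.

```python
import asyncio


async def generate_image(prompt: str) -> str:
    """Hypothetical stand-in for a provider call; returns a fake asset ID."""
    await asyncio.sleep(0.01)  # simulates network and generation latency
    return f"asset-for:{prompt}"


async def generate_batch(prompts, max_concurrency=5):
    """Run all prompts concurrently, capped at max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(prompt):
        async with semaphore:
            return await generate_image(prompt)

    # gather returns results in the same order as the input prompts.
    return await asyncio.gather(*(worker(p) for p in prompts))


results = asyncio.run(generate_batch([f"cover {i}" for i in range(20)]))
print(len(results), results[0])
```

In a real pipeline the stub would be replaced by the provider's API call, with retries and error handling added around it.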

6. What is the highest hidden cost when scaling synthetic visual assets?

Beyond the direct labor of the Human-Correction Tax, the largest hidden expense is the Iteration Multiplier. If a model requires three to five regeneration cycles to achieve the correct 2026 cinematic standard or typographical layout, your effective cost per usable image rises three- to fivefold. Factoring this multiplier into your initial budget is critical; the most “expensive” API on paper is often the most cost-effective in practice because models with advanced reasoning are more likely to hit the mark on the first prompt.
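
The multiplier is simple to model. In the sketch below, the two price points come from the GPT Image 2 tiers in the pricing matrix, while the cycle counts (four for the cheaper tier, one for the pricier tier) are assumptions for illustration, not measured behavior. The `expected_cycles` helper additionally assumes each attempt succeeds independently with the same probability.

```python
def expected_cycles(first_try_success_rate):
    """Mean attempts until one usable image, assuming independent attempts."""
    if not 0 < first_try_success_rate <= 1:
        raise ValueError("success rate must be in (0, 1]")
    return 1 / first_try_success_rate


def effective_cost(api_cost, cycles):
    """API cost per usable image once regeneration cycles are counted."""
    return api_cost * cycles


# Assumed scenario: the cheaper tier needs four cycles, the pricier tier one.
medium_tier = effective_cost(0.053, cycles=4)
high_tier = effective_cost(0.211, cycles=1)
print(f"Medium tier, 4 cycles: ${medium_tier:.3f}")
print(f"High tier, 1 cycle:    ${high_tier:.3f}")
```

Under these assumptions the two tiers land at nearly the same effective API cost, before counting the editor time spent reviewing each failed attempt.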