In a landmark achievement for open-source AI, Chinese startup DeepSeek has released DeepSeekMath-V2, described as the first openly accessible model to reach gold medal-level performance at the International Mathematical Olympiad (IMO). The 685-billion-parameter model solved five of the six problems on IMO 2025, 83.3% of the available points (reported as 210 out of 252), a gold medal-level result that reportedly places it third behind elite human teams from the US and South Korea. Released under the Apache 2.0 license on Hugging Face and GitHub, it opens mathematician-level reasoning to anyone and challenges proprietary giants like OpenAI and Google DeepMind.

Hugging Face CEO Clement Delangue called it “owning the brain of one of the best mathematicians in the world for free,” a sentiment echoed across tech communities. Building on DeepSeek’s prior successes, from the 7B model rivaling GPT-4 to this V2 leap, the release signals China’s rising strength in efficient, open mathematical AI amid global competition. This article covers the model’s architecture, benchmarks, training, applications, limitations, and likely impact on research and education.

Model Architecture and Key Innovations

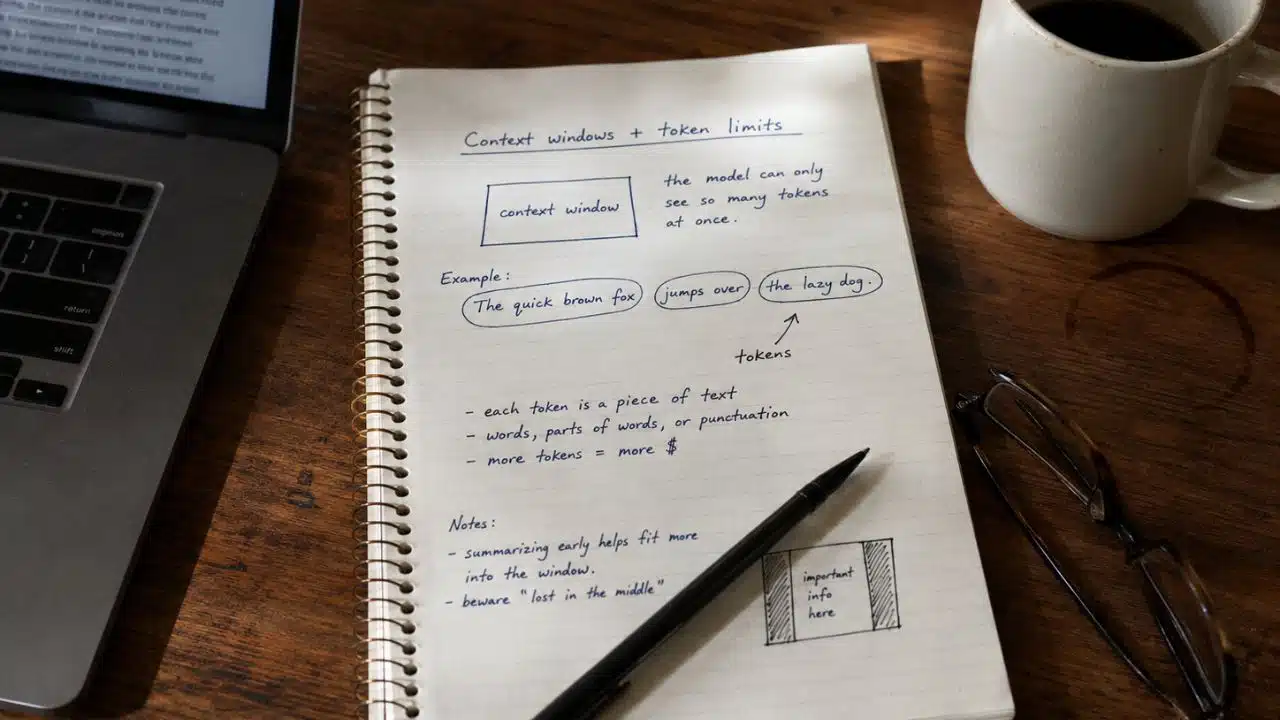

DeepSeekMath-V2 uses a mixture-of-experts (MoE) transformer architecture with 685 billion total parameters, activating only a subset of experts per token so that inference is practical on a multi-GPU node (8x A100 GPUs is the configuration cited). It inherits Multi-head Latent Attention (MLA) and DeepSeekMoE from DeepSeek-V2, where MLA was reported to shrink the KV cache by about 93%. That compression helps manage 128K-token contexts, which are vital for intricate proof chains in Olympiad problems. Sparse attention from DeepSeek-V3.2-Exp further optimizes long-sequence processing, and the released weights use the BF16, F8_E4M3, and F32 tensor formats.
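To put the 93% KV-cache figure in concrete terms, here is a minimal sketch of the usual cache-size arithmetic. The layer count, head count, and head dimension are hypothetical placeholders chosen for illustration, not DeepSeekMath-V2's actual configuration; only the 128K context and the 93% reduction come from the text.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Size of a conventional per-sequence KV cache.

    The leading factor of 2 accounts for storing both keys and values
    at every layer; bytes_per_value=2 corresponds to BF16.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


GIB = 1024 ** 3

# Hypothetical dimensions (assumptions for illustration only).
baseline = kv_cache_bytes(num_layers=60, num_kv_heads=128, head_dim=128,
                          seq_len=128 * 1024)

# Apply the 93% reduction quoted in the text.
compressed = baseline * (1 - 0.93)

print(f"baseline:   {baseline / GIB:.1f} GiB per 128K-token sequence")
print(f"compressed: {compressed / GIB:.1f} GiB per 128K-token sequence")
```

The point of the sketch is the scaling: cache size grows linearly with sequence length, so a fixed-ratio compression matters most at long contexts.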

The crown jewel is its verifier-generator framework, in which a dedicated verifier LLM grades proofs on a {0, 0.5, 1} scale, mimicking human evaluators with detailed critiques of logic, completeness, and errors. The generator is trained with reinforcement learning (GRPO, Group Relative Policy Optimization), while meta-verifiers check that the verifier's critiques are themselves sound. The generator then self-refines by addressing verifier feedback, and it is rewarded for honestly admitting errors rather than for blind confidence. This narrows the generation-verification gap and lets test-time compute scale on open-ended tasks.
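The generate-verify-refine cycle can be sketched as a simple loop. This is an illustrative outline under stated assumptions, not DeepSeek's implementation: `refine`, `Verdict`, and the toy `toy_generate` and `toy_verify` stand-ins are hypothetical names, and a real system would call the generator and verifier models where the stubs are.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

VALID_SCORES = (0.0, 0.5, 1.0)  # the grading scale described in the text


@dataclass
class Verdict:
    score: float    # one of VALID_SCORES
    critique: str   # natural-language feedback from the verifier


def refine(problem: str,
           generate: Callable[[str, str], str],
           verify: Callable[[str, str], Verdict],
           max_rounds: int = 4) -> Tuple[str, Verdict]:
    """Draft a proof, then revise it against critiques until it scores 1.0
    or the round budget is exhausted. Returns the best attempt seen."""
    critique = ""
    best_proof, best_verdict = "", Verdict(0.0, "no attempt")
    for _ in range(max_rounds):
        proof = generate(problem, critique)
        verdict = verify(problem, proof)
        if verdict.score not in VALID_SCORES:
            raise ValueError(f"unexpected score: {verdict.score}")
        if verdict.score >= best_verdict.score:
            best_proof, best_verdict = proof, verdict
        if verdict.score == 1.0:
            break
        critique = verdict.critique  # feed the critique into the next draft
    return best_proof, best_verdict


# Toy stand-ins (hypothetical) so the loop can be run end to end.
def toy_generate(problem: str, critique: str) -> str:
    return "draft proof" if not critique else "draft proof, gap fixed"


def toy_verify(problem: str, proof: str) -> Verdict:
    if "fixed" in proof:
        return Verdict(1.0, "complete")
    return Verdict(0.5, "step 2 is not justified")


proof, verdict = refine("toy problem", toy_generate, toy_verify)
print(verdict.score, "|", proof)
```

Each extra round costs one generation and one verification, which is why the round budget is an explicit parameter.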

| Feature | Description | Benefit |
|---|---|---|
| MoE Parameters | 685B total, sparse activation | 5.76x throughput vs. dense models |
| Context Length | 128K tokens | Handles full Olympiad proofs |
| KV Cache Compression | 93% reduction via MLA | Lowers VRAM needs |
| Verifier Scale | {0,0.5,1} grading with NL feedback | Proof rigor over answers |
| Supported Formats | BF16, F8_E4M3, F32 | Broad hardware compatibility |

Benchmark Performance Breakdown

DeepSeekMath-V2 posts top-tier results on major math competitions. On IMO 2025, it solved 5 of 6 problems (83.3%), a gold medal-level score. On the 2024 Chinese Mathematical Olympiad (CMO), it fully solved 4 problems and earned partial credit on another, while on Putnam 2024 it scored 118/120, surpassing the top human score of 90.
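The percentages behind these scores are easy to check. The sketch below assumes the standard scoring rules (IMO: six problems worth 7 points each; Putnam: twelve problems worth 10 points each) and, for the IMO line, that the five solved problems earned full marks and the sixth earned none.

```python
def percent(points, total):
    """Score as a percentage, rounded to one decimal place."""
    return round(100 * points / total, 1)


# IMO: 6 problems x 7 points; assume 5 full solutions and 0 on the sixth.
imo_individual = percent(5 * 7, 6 * 7)

# The 210/252 figure quoted for IMO 2025 is the same ratio on a
# six-contestant team scale (6 x 42 = 252).
imo_team_scale = percent(210, 252)

# Putnam: 12 problems x 10 points = 120.
putnam = percent(118, 120)

print(imo_individual, imo_team_scale, putnam)  # 83.3 83.3 98.3
```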

IMO-ProofBench highlights its proof strength: 98.9% on the basic set and 61.9% on the advanced set, rivaling Google’s Gemini DeepThink and far ahead of GPT-5’s 20%. Iterated verification at test time lifts these scores further, while LLMs without such a loop are described as topping out at around 50-60%.

| Competition | DeepSeekMath-V2 Score | Human/Competitor Benchmark | Notes |
|---|---|---|---|
| IMO 2025 | 210/252 (83.3%) | Gold medal (US/S. Korea top) | 5/6 problems solved |
| CMO 2024 | Gold-level | N/A | 4 full + 1 partial |
| Putnam 2024 | 118/120 | Human high: 90/120 | Near-perfect 11/12 |
| IMO-ProofBench (Adv.) | 61.9% | Gemini DeepThink: ~62%, GPT-5: 20% | Proof-focused |

Training Pipeline and Efficiency

Training combines large math corpora (arXiv, theorem banks, synthetic proofs) with supervised fine-tuning (SFT), followed by RL in which the verifier automatically labels hard proofs. This “verifier-first” loop reportedly cuts the number of training epochs by 20%, reduces hallucinated steps, and supports JSON outputs with reasoning traces. The efficiency figures of 42.5% lower training cost and 5.76x higher generation throughput are those DeepSeek reported for the DeepSeek-V2 MoE design relative to the dense DeepSeek 67B model.
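The core of GRPO is that each sampled proof is scored relative to the other samples for the same problem, so no separate value network is needed. A minimal sketch of that group-relative advantage, using verifier scores as rewards, might look like this. The function name and the choice of population standard deviation are assumptions made for illustration; production implementations differ in details.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its own sampling group.

    advantage_i = (r_i - mean(group)) / (std(group) + eps)
    eps keeps the division defined when every sample gets the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled proofs for one problem, graded on the {0, 0.5, 1} scale.
rewards = [0.0, 0.5, 1.0, 0.5]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Proofs graded above the group mean get a positive advantage and are reinforced. If every sample receives the same grade, all advantages are zero and that group contributes no gradient signal.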

The training data is curated for balance across algebra (40%), geometry (30%), number theory (20%), and combinatorics (10%), which limits skew toward any single domain. For deployment, inference code and multi-GPU kernels come from the DeepSeek-V3.2-Exp repository.
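A domain mix like this is typically enforced by turning the percentages into per-domain sample quotas. The sketch below is one hedged way to do that, using integer percentages and largest-remainder rounding so the quotas always sum to the requested total. The `quotas` helper and the `DOMAIN_MIX` name are hypothetical, and nothing here is taken from DeepSeek's pipeline.

```python
# Percentages quoted in the text, kept as integers to avoid float rounding.
DOMAIN_MIX = {
    "algebra": 40,
    "geometry": 30,
    "number_theory": 20,
    "combinatorics": 10,
}


def quotas(total, mix_percent):
    """Split `total` samples across domains by percentage.

    Uses largest-remainder rounding: floor every share, then hand the
    leftover samples to the domains with the largest fractional parts.
    """
    if sum(mix_percent.values()) != 100:
        raise ValueError("percentages must sum to 100")
    counts = {d: total * p // 100 for d, p in mix_percent.items()}
    leftover = total - sum(counts.values())
    by_remainder = sorted(mix_percent,
                          key=lambda d: (total * mix_percent[d]) % 100,
                          reverse=True)
    for domain in by_remainder[:leftover]:
        counts[domain] += 1
    return counts


print(quotas(1000, DOMAIN_MIX))
print(quotas(7, DOMAIN_MIX))
```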

| Training Stage | Key Method | Efficiency Gain | Data Sources |
|---|---|---|---|
| Pre-Training | Hybrid math corpora | N/A | arXiv, theorems, synthetics |
| SFT | Supervised proofs | N/A | Labeled Olympiads |
| RL (GRPO) | Verifier rewards | 20% fewer epochs | Auto-labeled hard proofs |
| Overall vs. Prior | MoE optimization | 42.5% cost reduction | Balanced domains |

Historical Context and DeepSeek’s Evolution

DeepSeek’s math-focused line began with DeepSeek-Math-7B in 2024, which matched GPT-4 on GSM8K despite having far fewer parameters, and continued through the MoE efficiencies of DeepSeek-V2. DeepSeekMath-V2 builds on DeepSeek-V3.2-Exp-Base, using sparse MoE for scalability. Based in Hangzhou, DeepSeek draws on China’s AI ecosystem to rival US leaders and open-sources its models to foster global collaboration.

Earlier models lagged: o1-mini reportedly reached silver level, whereas DeepSeekMath-V2’s verification loop pushes it to gold. Community reactions on Reddit and LinkedIn praise its accessibility.

| Model Milestone | Release Year | Key Achievement | Parameter Scale |
|---|---|---|---|
| DeepSeek-Math-7B | 2024 | Rivals GPT-4 on GSM8K | 7B |
| DeepSeek-V2 | 2024 | MoE efficiency pioneer | 236B |
| DeepSeekMath-V2 | 2025 | IMO gold, open weights | 685B MoE |
| Competitors (Closed) | 2025 | Gemini DeepThink IMO gold | Proprietary |

Real-World Applications and Use Cases

Beyond benchmarks, DeepSeekMath-V2 accelerates theorem proving in physics, biotech, and cryptography, verifying complex derivations autonomously. Educators deploy it for interactive tutoring: input a problem, receive step-by-step proofs with critiques. Researchers fine-tune for domain-specific tasks, like optimizing quantum algorithms or protein folding math.

APIs can return JSON outputs that carry the verifier’s verdict for use in apps, while distillation could produce lighter 7B variants for edge devices. In industry, it could streamline formal verification, with a claimed 50% reduction in engineer time on hardware proofs.
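An application consuming such JSON output would normally validate it before trusting the proof. The sketch below assumes a purely hypothetical response shape (`problem`, `proof`, `score`, `trace`); the text does not specify a schema, so the field names are illustrative rather than taken from any published DeepSeek API.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "problem": str,
    "proof": str,
    "score": (int, float),
    "trace": list,
}
VALID_SCORES = (0, 0.5, 1)  # the verifier's grading scale from the text


def parse_result(raw: str) -> dict:
    """Parse a JSON response and reject anything outside the assumed schema."""
    data = json.loads(raw)
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        value = data[field]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            raise ValueError(f"wrong type for field: {field}")
    if data["score"] not in VALID_SCORES:
        raise ValueError(f"score not on the grading scale: {data['score']}")
    return data


raw = json.dumps({
    "problem": "Show that n^2 + n is even for every integer n.",
    "proof": "n^2 + n = n(n + 1), a product of consecutive integers.",
    "score": 1,
    "trace": ["factor the expression", "one of n, n + 1 is even"],
})
result = parse_result(raw)
print(result["score"], len(result["trace"]))
```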

| Application Area | Use Case Example | Impact |
|---|---|---|
| Education | Interactive Olympiad prep | Step-by-step critiques |
| Research | Theorem acceleration (physics/biotech) | Scales open proofs |
| Industry | Formal verification (crypto/hardware) | 50% time savings |
| Development | API for math apps | JSON traces, distillation |

Challenges, Limitations, and Ethical Considerations

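High VRAM requirements (8x A100s is the minimum cited) put the model out of reach of consumer hardware, and it is slower than general-purpose models on non-math tasks. Because verification rounds run sequentially, test-time compute grows linearly with the number of rounds and will need optimization. On the ethical side, balanced training data limits domain skew, but over-reliance risks skill atrophy in students; transparent reasoning traces help by showing how each proof is built.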
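The hardware requirement can be sanity-checked with weights-only arithmetic: parameter count times bytes per parameter. The sketch below assumes 80 GB A100 cards and decimal gigabytes, and it ignores KV cache, activations, and runtime overhead, so real requirements are higher than these numbers.

```python
PARAMS = 685e9  # total parameters, from the text

# Bytes per parameter for the tensor formats listed for the release.
BYTES_PER_PARAM = {"F8_E4M3": 1, "BF16": 2, "F32": 4}

A100_GB = 80    # assumption: the 80 GB A100 variant
NUM_GPUS = 8


def weights_gb(params, bytes_per_param):
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


node_gb = A100_GB * NUM_GPUS
for fmt, nbytes in BYTES_PER_PARAM.items():
    need = weights_gb(PARAMS, nbytes)
    verdict = "fits" if need <= node_gb else "does not fit"
    print(f"{fmt:8s} {need:7.0f} GB -> {verdict} in {node_gb} GB")
```

Under these assumptions even the 8-bit weights exceed a single 8x A100 node, so the quoted minimum is best read as optimistic unless further quantization, offloading, or multiple nodes are used.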

Ongoing work targets interdisciplinary reasoning and smaller models.

| Limitation | Description | Mitigation Strategy |
|---|---|---|
| Hardware Requirements | 8x A100 GPUs min | Distillation to 7B |
| Non-Math Latency | Slower on general tasks | Fine-tuning pipelines |
| Compute Scaling | Linear verification growth | Sparse kernels |
| Ethical Risks | Potential skill erosion | Transparent critiques |

Future Directions and Industry Impact

DeepSeekMath-V2 heralds verifiable AI, potentially integrating with multimodal models for visual proofs. Community fine-tunes could spawn specialized variants, eroding proprietary moats. As open-source surges, expect Putnam-beating tools in education platforms by 2026.

It challenges US AI hegemony, with implications for global R&D equity.

Conclusion: A New Era of Democratic Mathematical Mastery

DeepSeekMath-V2 is more than a benchmark result: it marks a shift toward self-verifiable AI that prioritizes rigorous reasoning over rote answers. By open-sourcing gold-medal-level capability, DeepSeek puts it within reach of students tackling Olympiads, scientists checking proofs, and developers building verifiable systems. The release is also a case for open collaboration, suggesting that shared models can keep pace with closed ones. As communities iterate on and deploy the model, elite mathematics becomes something anyone can engage with freely. Expect faster progress in areas such as biotech proofs and climate modeling, where verifiable AI can act as an equalizer in the pursuit of knowledge.