Have you ever paused to wonder if the students in your life are using artificial intelligence to get ahead in school? You are definitely not the only one asking this question. Tools like ChatGPT can write essays or solve complex math problems with just a few clicks, and this has made cheating faster and easier than ever before. In fact, a recent report on UK universities found nearly 7,000 proven cases of AI-assisted cheating in the 2023-24 academic year alone, a sharp rise on earlier years.

However, the conversation goes further. A 2025 survey of UK undergraduates found that 88% of students have used AI for assessments in some form, highlighting a widening academic integrity crisis. This discussion breaks down the realities of AI-assisted cheating, explains why it matters to students, teachers, and concerned parents, and outlines smart, actionable ways to address it. Let’s take a closer look and bring clarity to the issue.

The Rise of AI in Education

Students are grabbing AI tools for homework help, essays, and even test answers faster than you can say “pop quiz.” Access to these smart assistants grows each day. It makes schoolwork feel more like a tech race than ever before.

How generative AI tools are being used by students

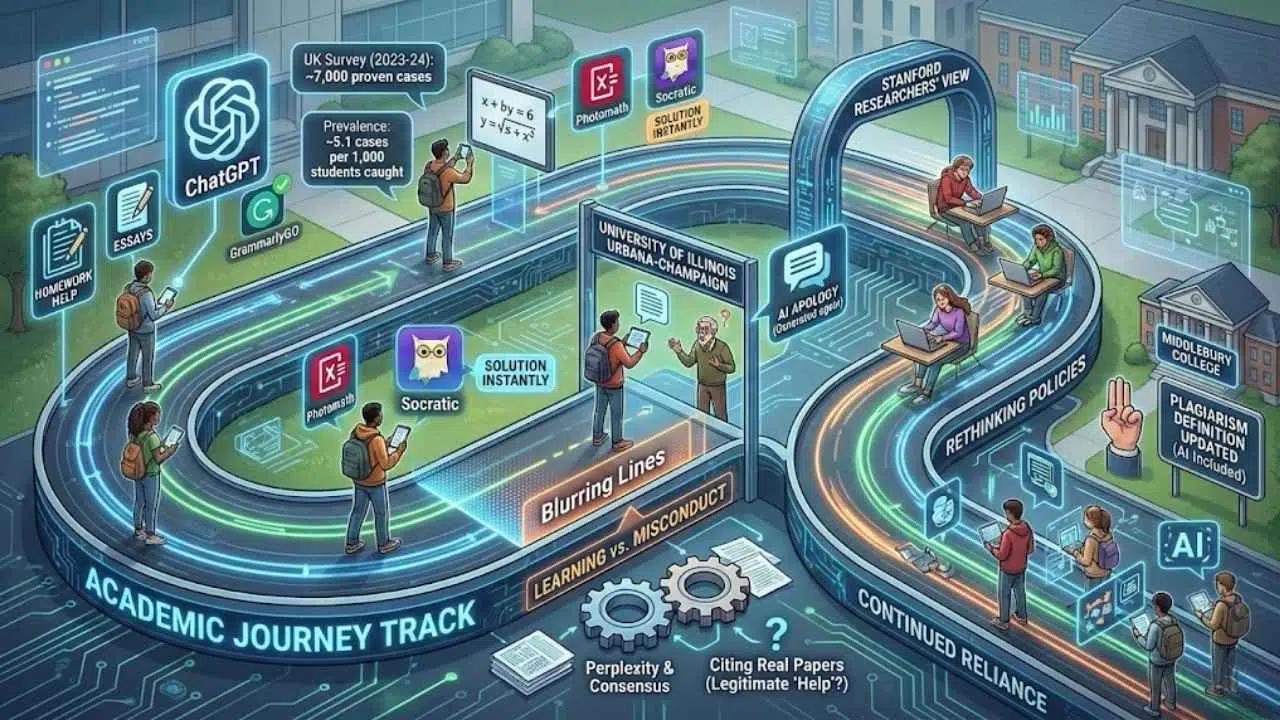

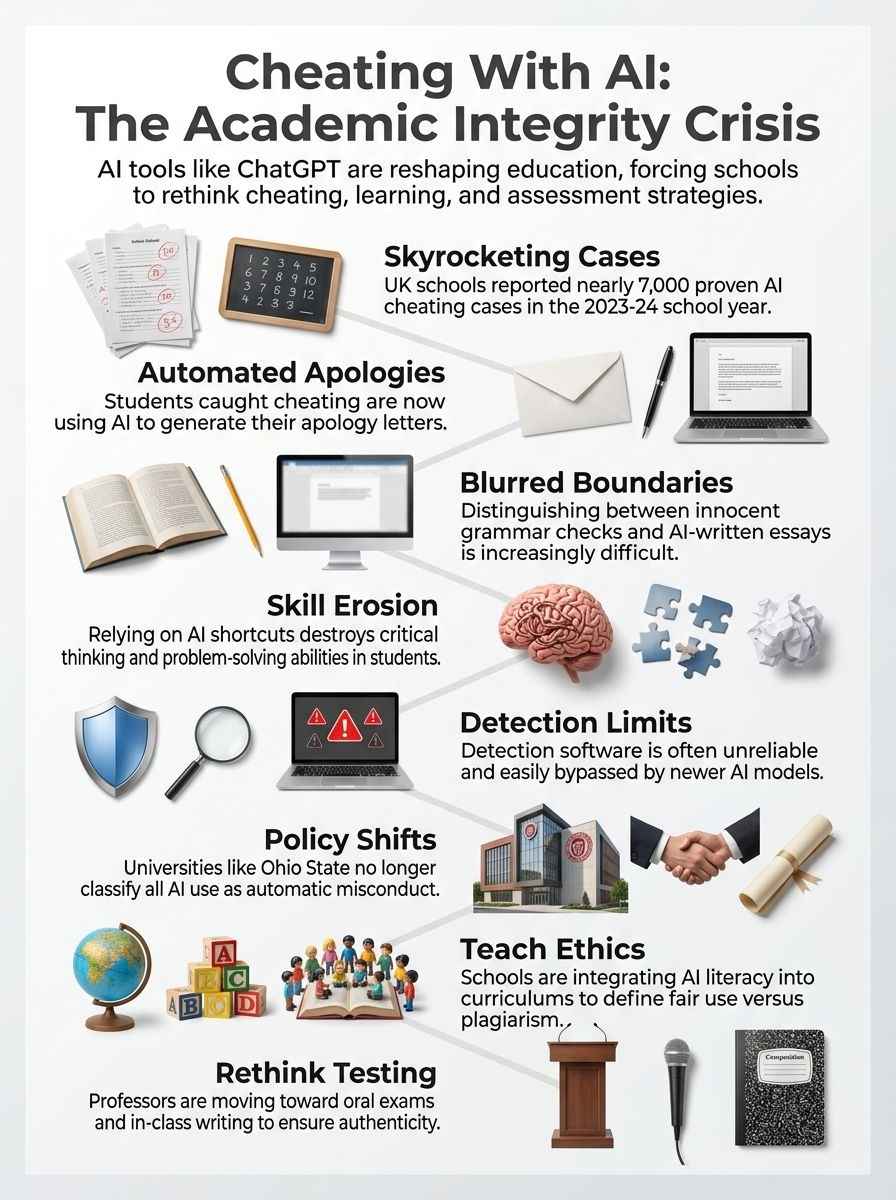

Students are using ChatGPT to write essays, answer test questions, and even apologize for cheating. While the UK recorded nearly 7,000 proven cases in 2023-24, the trend is global. Students create entire research papers in minutes with just a prompt or two. At the University of Illinois Urbana-Champaign, professors famously caught learners using AI on assignments, only to find those same students used AI again to generate their apologies. It is a cycle of reliance that is hard to break.

Small tasks get automated too. Tools like GrammarlyGO or Quillbot can rewrite sentences, check grammar, and brainstorm ideas in seconds instead of hours. Many students see these steps as harmless help rather than academic dishonesty. However, newer tools like Perplexity and Consensus are changing the game by finding real academic papers to cite, which makes the “help” look even more legitimate.

Stanford researchers say this new way of getting work done is booming. It often blurs the line between learning and misconduct. As a result, more universities are starting to question what truly counts as cheating with artificial intelligence technology.

The increasing accessibility of AI for academic tasks

AI technology sits in almost every student’s pocket now. ChatGPT can write essays on demand, but mobile apps like Photomath and Socratic allow students to snap a picture of a math problem and get the solution instantly. Many students use these tools to finish homework or answer test questions with just a few clicks.

Stanford education scholars found that this wave of AI use has fueled a cheating epidemic across schools and universities. A UK investigation found nearly 7,000 proven cases of cheating with AI tools at universities during the 2023-24 academic year. That works out to about 5.1 proven cases for every 1,000 students.

This surge is forcing schools to rethink their policies. For example, Middlebury College faculty recently voted to explicitly incorporate generative AI into their definition of plagiarism. Tasks that once seemed tough are easier now, and some students do not think small uses count as academic dishonesty at all.

Understanding the Academic Integrity Crisis

People debate what fair help means now that students use AI tools. The lines blur fast. One minute it is help, and the next it is plain cheating.

Defining academic integrity in the age of AI

Academic integrity means doing your own work, being honest, and not cheating. With AI tools so easy to use now, the rules feel less clear than before. Some students use these tools for small tasks and do not see it as a big problem. Others go so far as to have AI write full papers. Professors at the University of Illinois Urbana-Champaign call this major misconduct.

Universities are responding with updated rules. George Mason University, for instance, launched a new “Academic Standards Code” in 2025 to replace their old Honor Code. It focuses heavily on honesty and acknowledgment rather than just punishment. Meanwhile, Haverford College revised its policy to strictly prohibit unauthorized AI use while encouraging transparency when it is allowed.

UK universities recorded almost 7,000 proven cases of cheating with AI in 2023-24 alone. That is about five out of every thousand students bending the rules with bots instead of books. Ethics matter more than ever because losing trust in assessment can shake education all the way down to its roots.

The blurred line between assistance and cheating

Some students use AI technology for quick grammar checks or topic ideas. Others let generative AI tools like Claude or Gemini write an entire essay or solve math problems step by step. That makes it tough to see where good help ends and cheating starts.

Stanford education scholars say using AI for a small task feels less wrong to many students than using it to do the whole assignment. This “gray area” is dangerous. A common pitfall is “AI-giarism,” where a student submits AI text as their own. Even if they edit it, many institutions still consider the core generation to be plagiarism.

Schools like Ohio State University have changed their rules because old definitions of plagiarism may not fit this gray area anymore. In 2023-24, almost 7,000 proven cases of cheating involving AI appeared in UK universities. Until schools draw a clear line between studying and shortcutting the learning itself, students will remain confused.

The Impact of AI-Assisted Cheating on Education

Many students now use AI to finish work fast, but this shortcut steals real learning. With easy answers at their fingertips, some forget how to puzzle things out for themselves.

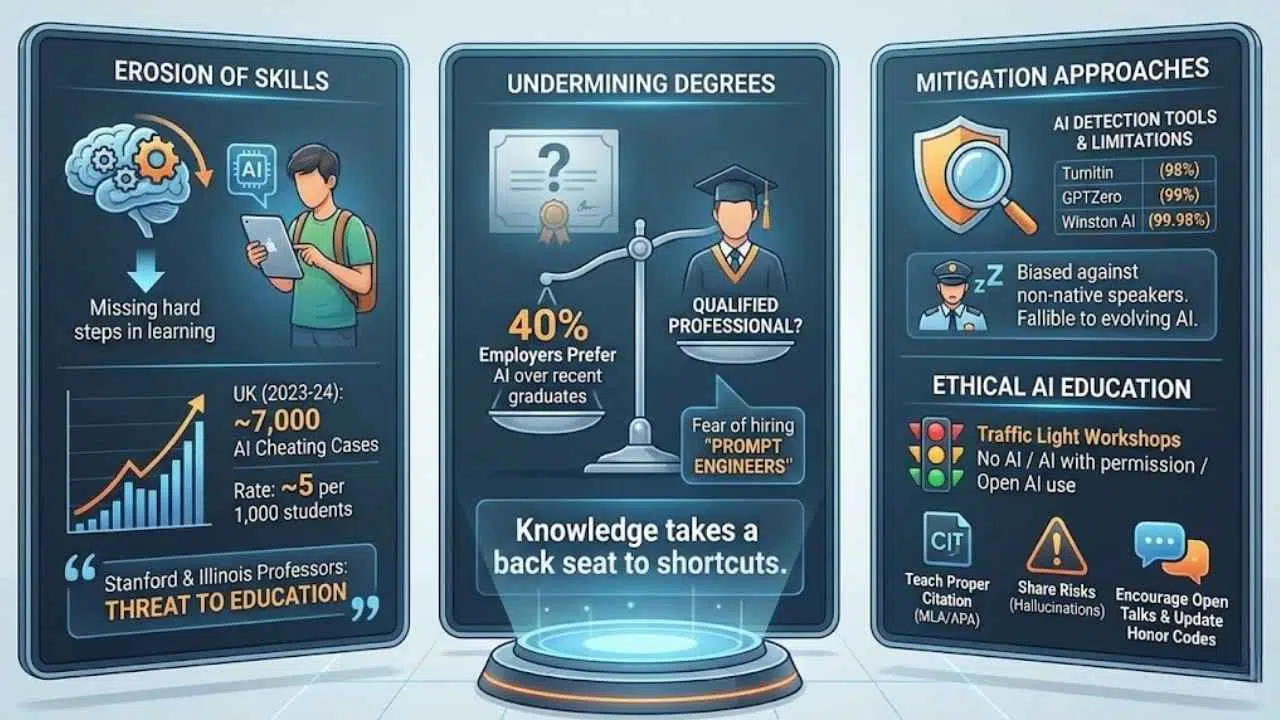

Erosion of critical thinking and problem-solving skills

Students who use AI to do their assignments are missing out on real learning. Their minds skip the hard steps, like finding facts or working through tough math problems. In the 2023-24 academic year, almost 7,000 proven cases of AI cheating were recorded at UK universities alone. That is about five students out of every thousand caught using tools like ChatGPT for answers.

Stanford and Illinois professors see this as a big threat to education. Even students at top schools have admitted they let bots think for them. Some universities no longer treat every use of AI as a violation, which can send a mixed signal about what counts as honest work. If people rely on AI technology for every answer, thinking skills could slowly fade away.

Undermining the value of academic degrees

Cheating with AI has cast a long shadow over academic degrees. Universities are changing the rules on whether using artificial intelligence counts as cheating. This makes it harder to know what an honest degree means anymore.

A recent report from Hult International Business School found that nearly 40% of employers said they might prefer to hire an AI over a recent graduate. They fear that graduates lack real skills. If students use tools to write their cover letters and do their homework, employers worry they are hiring a “prompt engineer” rather than a qualified professional.

“The foundation shakes if knowledge takes a back seat to shortcuts and misconduct fueled by technology in education.”

Degrees lose their power when anyone can hand in work written by ChatGPT. Employers may start thinking twice before hiring based on diplomas alone.

Current Approaches to Mitigate AI Cheating

Some tools claim they can spot AI-written text, but these tools often slip up. Teachers try to teach students how to use AI wisely, yet gray areas still cloud the rules.

AI detection tools and their limitations

AI detection tools can spot cheating in assignments, but they stumble more than you might think. Professors at the University of Illinois Urbana-Champaign caught students using chatbots to write papers, yet many AI-written texts slip right past these systems.

Here is how the top tools compare:

| Tool Name | Claimed Accuracy | Best Use Case |
|---|---|---|
| Turnitin | 98% | Standard for universities and colleges. |
| GPTZero | 99% | Educators looking for detailed sentence analysis. |
| Winston AI | 99.98% | Enterprise and publishers needing high certainty. |

Despite these high numbers, issues remain. A 2023 Stanford study found that detection tools are biased against non-native English speakers. They often flag innocent writing as AI-generated simply because the vocabulary is simpler. Detection software is like a security guard who naps from time to time. Newer AI writing models keep evolving and fool the checkers by sounding more like real students each day.
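To see why a high claimed accuracy can still lead to innocent students being flagged, it helps to run the arithmetic. The short Python sketch below is only an illustration: the function names are hypothetical, and the class size, share of AI-written essays, and error rates are made-up assumptions, not figures published by any vendor. A vendor's "accuracy" claim also does not translate directly into the false-positive rate used here.

```python
def expected_false_flags(num_human_essays: float, false_positive_rate: float) -> float:
    """Expected number of human-written essays wrongly flagged as AI."""
    return num_human_essays * false_positive_rate


def share_of_flags_that_are_wrong(
    num_essays: int,
    ai_share: float,
    true_positive_rate: float,
    false_positive_rate: float,
) -> float:
    """Fraction of all flagged essays that were actually written by a human."""
    ai_essays = num_essays * ai_share
    human_essays = num_essays - ai_essays
    true_flags = ai_essays * true_positive_rate
    false_flags = expected_false_flags(human_essays, false_positive_rate)
    total_flags = true_flags + false_flags
    if total_flags == 0:
        return 0.0  # nothing was flagged, so no flag can be wrong
    return false_flags / total_flags


if __name__ == "__main__":
    # Hypothetical scenario: 1,000 essays, 10% written by AI,
    # a detector that catches 98% of AI text and wrongly flags 2% of human text.
    wrong = share_of_flags_that_are_wrong(1000, 0.10, 0.98, 0.02)
    print(f"Share of flags that hit innocent students: {wrong:.1%}")
```

Under these assumed numbers, 18 of the 116 flagged essays would belong to students who did nothing wrong. The smaller the share of essays that really are AI-written, the larger that fraction becomes, which is one reason a flag is better treated as a prompt for a conversation than as proof.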

Educating students on ethical AI use

Teaching students about ethical AI use has become a key mission for schools and colleges. As AI technology keeps spreading, clear guidance is more important than ever.

- Run “Traffic Light” Workshops: Many universities use a simple system where red means “No AI,” yellow means “AI with permission,” and green means “Open AI use.”

- Discuss the “Why”: Professors discuss what academic integrity means now that tools like ChatGPT are here. They ensure students know the difference between fair help and misconduct.

- Teach Proper Citation: Instructors show how to cite content generated by artificial intelligence. Both MLA and APA have released specific guidelines for citing AI prompts and outputs.

- Share the Risks: Courses on digital ethics teach students the risks of using AI for plagiarism. This includes the danger of “hallucinations,” where AI invents fake facts.

- Highlight the Statistics: Students learn about recent numbers, such as the UK’s report of nearly 7,000 proven cases of AI-assisted cheating.

- Encourage Open Talks: Schools encourage honest conversations about why students cheat. Research from Stanford asks directly about peer pressure and burnout.

- Update Honor Codes: Institutions update honor codes to clearly mention both old-school and new digital misconduct.

- Debate the Value: Class discussions highlight why academic honesty matters. Media stories warn that too much reliance on AI could harm critical thinking skills.

- Review Real Cases: Teachers include lessons on spotting fake or biased outputs from chatbots. This helps students judge information instead of just repeating it.

The Role of Institutions in Addressing the Crisis

Schools and colleges shape how students use AI in their work by setting the ground rules early. Strong guidance helps students learn to be fair, honest, and thoughtful, even when technology makes cheating tempting.

Developing policies for responsible AI use

Institutions need clear rules for using AI. Some universities have stopped counting all AI use as an academic integrity violation. Instead, they focus on “authorized vs. unauthorized assistance.” Policies should explain which tasks students can complete with AI and where it crosses the line.

With nearly 7,000 proven cases of cheating using AI tools at UK universities in 2023-24, setting these boundaries is urgent. Schools must talk directly to students about AI technology. If a student uses artificial intelligence to write their entire paper, that counts as major misconduct. New policies need simple language so everyone knows what is fair and what breaks trust.

Integrating AI literacy into the curriculum

Teachers now see students using AI to cheat, apologize, and even finish homework. In 2023-24, UK universities recorded almost 7,000 proven cases of cheating with artificial intelligence tools. Yet many students still do not know what counts as fair help.

Adding AI literacy lessons helps clear up this confusion. Programs like the AI Literacy initiatives at the University of Florida are leading the way. Students learn how artificial intelligence works and where it crosses into cheating. Small group tasks can model good ways to use these tools while warning about risks. This gives learners a strong base for ethical decisions before they find themselves stuck between technology and temptation.

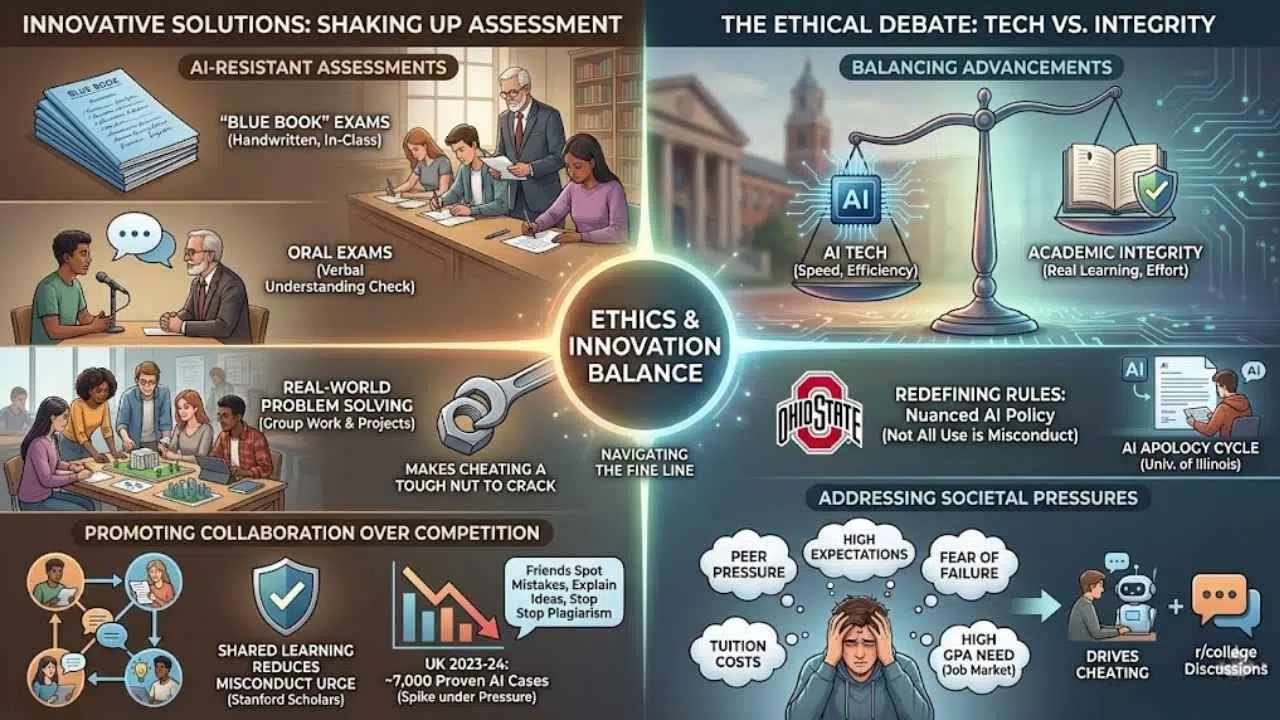

Innovative Solutions to Preserve Academic Integrity

Creative solutions are shaking up how schools protect honest work. Fresh ideas keep students on their toes and make cheating a tough nut to crack.

Creating AI-resistant assessments

Teachers across the country now face students using AI tools like ChatGPT for cheating. To stay ahead, professors are returning to classic methods. The “Blue Book” exam is making a comeback. This involves writing essays by hand in class without any devices.

Other universities are using oral exams. In this format, students must explain their answers verbally to a professor. This ensures they truly understand the material. Tasks focused on real-world problem solving or group work also limit the power of technology-based cheating. These changes nudge learners toward genuine effort while keeping assessments meaningful.

Promoting collaboration over competition in learning

Group projects can help students learn with and from each other. Working together builds skills in problem-solving and teamwork that solo work often misses. Stanford education scholars found that shared learning can reduce the urge for misconduct.

In 2023-24, UK universities confirmed nearly 7,000 cases of cheating using AI tools. This spike tells a clear story: students under pressure may turn to shortcuts when only grades matter. Sharing knowledge helps everyone grow stronger together. Friends who study together spot mistakes faster, explain tough ideas simply, and stop acts of plagiarism before they start.

The Ethical Debate Around AI and Academic Cheating

People often whisper about the fine line between using AI for help and flat-out cheating. These debates can spark heated arguments in classrooms and even family dinners.

Balancing technological advancements with integrity

Stanford scholars are digging into why students cheat with AI. Reports show nearly 7,000 proven cases of AI-assisted cheating at UK universities in 2023-24. Some universities now say using AI does not always count as breaking the rules. For example, Ohio State University stopped treating all use of artificial intelligence as academic misconduct.

Students sometimes ask ChatGPT to write whole papers. While this saves time, it chips away at real learning. Professors from the University of Illinois Urbana-Champaign have even had students use AI to apologize after getting caught. Academic integrity now faces tough questions from both technology and society itself.

Addressing societal pressures that drive cheating

Peer pressure, high expectations, and fear of failure often push students to cheat. In 2023-24, a UK investigation uncovered nearly 7,000 proven cases of cheating with AI tools at universities. These numbers point to real stress on young people in education today.

Discussions on forums like r/college reveal that many students cheat because they feel overwhelmed by tuition costs and the need for a high GPA to get a job. Some schools are changing the rules because so many learners use artificial intelligence for help. A big shift is happening as outside pressures shape policy in universities and colleges across the country.

Final Thoughts

Cheating with AI has shaken up schools and made everyone rethink what academic integrity means. We explored how students use tools like ChatGPT and why some see it as harmless help while others call it misconduct. With simple steps like teaching ethics, updating policies, and using smart assessments, both teachers and students can handle this new tech-driven world without losing trust.

Even small changes bring big results, like restoring real learning and keeping the value of a hard-earned degree alive. As someone who values honest effort, I find hope in watching young people grapple with these challenges, because it suggests honesty can still win out. So, stay curious and keep learning the right way.