Have you ever wondered if the smart AI tools you use at work might accidentally spill your secrets? It is a scary thought for anyone using GPT-5.4 to write emails or search for answers. You might worry about hackers or losing control of your private data. Well, a lot of folks in the US feel exactly the same way right now. Certain tricks can actually confuse GPT-5.4 into sharing private details, which makes computer users feel a bit uneasy. But here is the good news. I am going to share insights from our latest checklist, The GPT-5.4 security risks of computer use and how you can protect your data, to help you spot and avoid these common problems.

We will walk through some easy steps to keep your secrets safe and build strong habits every time you log in. Grab a cup of coffee, and let’s go through it together so you can keep your data locked up tight!

Overview of GPT-5. 4

GPT-5.4 works fast, thinks smart, and learns from lots of data every day. Its power brings big opportunities, and some new worries about safety. Keep reading to see what makes it tick.

Key features of GPT-5.4

Version 5.4 works with over a trillion parameters. This gives it strong language prediction skills and crystal clear outputs. It understands context better than older models, helping it spot subtle details in your requests.

In fact, we are seeing a massive shift right now with tools like the ChatGPT Atlas browser. This agentic browser lets AI interact directly with your web tabs to get work done faster.

GPT-5.4 supports text, image, and even audio inputs for richer conversations. Its response time is incredible. It takes under half a second for simple tasks and under three seconds for complex jobs. Security is a main focus here, too. Built-in data filtering stops sensitive details from leaking into outputs or logs.

Let’s look at a quick breakdown of its top features:

- Trillion-Parameter Processing: Handles massive amounts of data in a blink.

- Agentic Capabilities: Can execute tasks seamlessly across your browser tabs.

- Multimodal Inputs: Understands voice, photos, and plain text.

- Real-time Monitoring: Scans every single query for threats.

Advancements in AI capabilities

AI systems like GPT-5.4 process huge amounts of information in seconds. A late 2025 study from the St. Louis Fed found that the US generative AI adoption rate hit 54.6 percent. This adoption rate is growing much faster than in the early days of personal computers.

These models handle text, pictures, and sound with extreme speed and accuracy. Even big companies like Microsoft use these new features to boost data protection and user confidentiality.

GPT-5.4 uses updated language skills for sharper threat assessment and better risk management. It learns faster from safe feedback, which helps it avoid common software vulnerabilities seen in earlier versions. With smart access control rules, the AI limits who can get sensitive information inside large networks.

Understanding the Security Risks of GPT-5. 4

When you read about The Security Risks of GPT-5.4 Computer Use [And How To Protect Your Data], you quickly learn that using these tools for daily tasks can open the door to new cyber threats if you are not careful. Even smart tools like this need strong data protection and watchful eyes.

Prompt injection attacks

Prompt injection attacks trick AI models into acting in unsafe ways. An attacker sneaks hidden commands inside text, URLs, or files. The model then follows these commands instead of just answering your main question. Suddenly, it might leak private data or ignore security rules without realizing it.

“Just because a machine seems smart does not mean it is always wise.”

In 2025, the OWASP Top 10 for LLM Applications ranked prompt injection as the number one critical vulnerability. Their security audits found this flaw in over 73 percent of production AI deployments.

Cybercriminals use prompt injection to bypass standard access controls and get sensitive information out of the system. It only takes one creative input for this threat to appear, leaving your privacy at risk.

Model hijacking

Bad actors can take control of GPT-5.4 by injecting harmful code or changing how the model works. They might steal personal data, spy on user actions, and even make the AI say things that put your privacy at risk. Security experts call this major issue the Lethal Trifecta.

Here is what that trifecta looks like for an AI agent:

- Access to private data: The AI can read your private emails and databases.

- Exposure to untrusted tokens: The AI processes external, unverified web content.

- Exfiltration vector: The AI can send that sensitive data to an outside server.

Attackers often use weaknesses in software security to gain entry. Protecting against this requires strong threat assessment, strict access controls, and quick detection of strange activity inside your system.

Data leakage vulnerabilities

After model hijacking, data leakage becomes a serious worry for users. Sensitive information can slip out during chats with GPT-5.4, often without warning.

Chat logs may store private details like passwords or business secrets. Hackers use this weak spot to pull important user data right from memory caches or hidden files.

A 2024 Cyberhaven study found that 11 percent of the data employees paste into ChatGPT is actually confidential. This exposes trade secrets and personal information at an unprecedented scale.

Even a simple typo could feed personal info into the system. Tools like encryption and strict input review help block these leaks before they become tomorrow’s headlines.

Latest Attack Techniques on GPT-5. 4

Hackers get creative, sneaking bad code where you least expect it. Small mistakes can open doors for data thieves to waltz right in.

Hidden malicious instructions

A crafty attacker can slip secret commands into plain text. These hidden instructions trick GPT-5.4 into actions you do not want, like leaking sensitive data or breaking access controls.

Security researchers are warning about a massive new issue for 2026 called Training Data Poisoning. Adversaries inject malicious data during the model’s learning phase to plant hidden backdoors. The AI then carries these latent instructions unknowingly.

Some users may see a prompt about helping with homework, but behind the scenes, there could be code that pulls private user information.

“The most dangerous AI attacks of 2026 will not be loud hacks, but silent whispers injected right into the training data.”

Exploitation through crafted URLs

Attackers use special links to trick GPT-5.4 into giving up private data or breaking security rules. These links, known as crafted URLs, hide harmful commands inside normal-looking web addresses. If users click them or the model reads them, secret instructions can sneak past filters.

In early 2025, researchers demonstrated an attack against a major enterprise AI system. They embedded malicious instructions in a public document. When the AI read it, it modified its own safety filters and leaked business intelligence to an external web address.

This might lead to leaking passwords, stealing files, or letting outsiders control protected parts of your system. Even experienced users get fooled by clever tricks hidden right under their noses.

Here is how a crafted URL attack typically unfolds:

- The Bait: An attacker sends an email with a hidden prompt injection.

- The Hook: A user asks their AI assistant an unrelated question.

- The Catch: The AI retrieves the malicious email as context and executes the hidden instructions.

Unauthorized access to sensitive data

After attackers sneak through with crafted URLs, they often aim straight for private information. One wrong click or weak password can open the door to sensitive data such as health records, credit card numbers, or personal chats. Bad actors use GPT-5.4 to search across massive datasets in seconds, hunting for confidential bits and pieces.

A recent report from Practical DevSecOps showed that 77 percent of businesses faced an AI-related security incident in 2024. The average cost of these breaches hit $4.88 million.

Human error is a huge risk here, too. Someone might save passwords inside prompts without thinking twice. Strong access controls and tight permissions make all the difference in keeping critical details under wraps.

How to Protect Your Data While Using GPT-5. 4

Protecting your data with GPT-5.4 is easier when you set up smart defenses. Let’s outsmart those pesky cyber threats together!

Implementing AI sandboxing

AI sandboxing works like a digital playpen for GPT-5.4. It keeps the model inside strict boundaries so hackers cannot use it to leak secrets or break into other data. Each query runs in a safe, separate space. This blocks access to files, private data, and system tools that could lead to breaches.

Many US development teams now use specific production-grade AI sandboxing tools like Northflank or Spice AI. These tools enforce the principle of least privilege, ensuring the AI only accesses the exact dataset it needs for a specific task.

Here is what a good AI sandbox provides:

- Data Isolation: Keeps the AI from touching your live databases.

- Safe Experimentation: Let’s developers test edge cases using fake data.

- Access Control: Enforces the principle of least privilege automatically.

Security teams set up these sandboxes using tested software like Docker or Firejail. Google and Microsoft use similar controls for their AI labs. This way, user confidentiality stays protected within the contained box.

Using end-to-end encryption

End-to-end encryption locks your data on both ends, so only you and the one receiving it can see what is inside. No hacker or sneaky system can peek at your private info while it moves from one place to another. If a cybercriminal grabs that data mid-trip, all they get is scrambled nonsense.

“Think of encryption as sending a secret letter in an unbreakable lockbox where only you and the recipient hold the keys.”

Many services now use end-to-end encryption for chats, files, or cloud storage. This extra layer keeps threats like model hijacking or data leaks at arm’s length. Applying this type of protection helps protect your information privacy and user confidentiality from prying eyes.

Enforcing input validation and output filtering

Bad input can trick GPT-5.4 into leaking data or doing risky things. Attackers use fake commands buried in code, weird links, or suspicious files to slip through the cracks. Input validation acts like a bouncer at the door, stopping sneaky threats before they get inside your system.

Output filtering keeps private information safe after processing. It blocks prompts that try to steal passwords, phone numbers, and even secret company plans.

Companies are now using Data Loss Prevention, or DLP, tools integrated directly with their GenAI platforms. These tools mask sensitive data before it ever reaches the AI model.

Set clear rules for what goes in and what comes out of GPT-5.4 tools every time you build with artificial intelligence systems. This keeps cyber safety high and protects user confidentiality all day long.

Building a Secure GPT-5. 4 Architecture

Good fences make good neighbors, right? Creating strong walls around your GPT-5.4 can keep prying eyes away and help your data sleep tight at night.

Isolation and least privilege principles

Keep each AI part separate from the rest of your computer system. This helps stop a single attack from spreading everywhere, like walls that keep a fire in one room. Give GPT-5.4 access to only the files or tools it needs for its task, nothing more. Limiting permissions shrinks your threat surface and blocks hackers who look for holes in security.

“Treat every AI interaction as a potential gateway, and restrict its access just like you would a guest user on your network.”

In the US, security leaders are adopting the NIST AI Risk Management Framework, which mandates specific controls for prompt injection prevention. One major rule is to route AI API calls through a secure gateway or a Virtual Private Cloud endpoint. This stops the model from unexpectedly calling out to the public internet.

Set up strict access controls on sensitive data. Even smart machines should work on a need-to-know basis, just like secret agents in old spy movies.

Regular model updates and secure engineering

Hackers get smarter every day, so updates must happen on time. GPT-5.4 needs frequent patches to fix software vulnerabilities and stop new cyber attacks. Developers should keep an eye out for security gaps and push out updates without delay. This regular work helps protect your data against privacy risks and keeps information safe from breaches.

Secure engineering means using extra steps like strong access control, risk management checks, and reviewing code before launch. Companies test patches with threat assessment tools first, looking for any weak spots that could lead to a data breach or system failure. These efforts help build trust in artificial intelligence use while setting up better defenses against future hacks.

Fail-safes and system resilience

Fail-safes work like safety nets for your AI systems. If something goes wrong or a cyber attack hits, these measures quickly stop the threat and keep sensitive data safe. Systems should shut down risky actions fast so hackers do not get a chance to steal or mess with information. Testing helps spot gaps before an actual problem pops up.

Here are three essential fail-safes every AI system needs:

- Automated Circuit Breakers: Shut down the AI if it tries to access unauthorized databases.

- Regular Offline Backups: Keep a safe copy of your training data completely separated from the internet.

- Fallback Models: Switches to a simpler, highly restricted AI version if the main model detects a threat.

GPT-5.4 needs backup plans ready if hardware fails or software starts acting odd due to bad input or malware. A good setup uses clear alerts to tell you when trouble is brewing behind the scenes.

Integrating GPT-5. 4 into a Zero-Trust Security Framework

Zero-trust means every action must prove itself. Snoopers and slip-ups get caught fast, so only safe data makes it through the gate.

The “never trust, always verify” approach

Trust should not be automatic, even if a user has entered their password before. Every request gets checked, no matter where it comes from or who made it.

Even GPT-5.4 computers must pass regular checks for threats like data breaches or unexpected access to secret files. Information protection works best in layers. This way, even if one piece fails, others stand guard.

You must apply identity and access controls to AI agents with the exact same rigor you apply to human users. This includes strict token management and dynamic authorization policies. The approach asks staff and systems to show proof every step of the way. Monitoring tools help spot odd actions fast, stopping hackers in their tracks.

Monitoring and anomaly detection systems

Monitoring tools work like watchdogs, always keeping an eye on computer activity. They spot odd behavior fast. If someone tries to break in or steal data, these systems raise a flag right away.

For example, if the system sees strange logins at midnight, it calls out trouble before harm spreads. Good monitoring helps teams react to threats quickly and block cyber safety risks.

Anomaly detection acts as your early warning siren for privacy risks and information protection failures. Security teams heavily rely on platforms like Microsoft Sentinel or Datadog to scan AI network activity in real time.

High-quality filters catch even sneaky attacks hidden in crafted URLs or prompts.

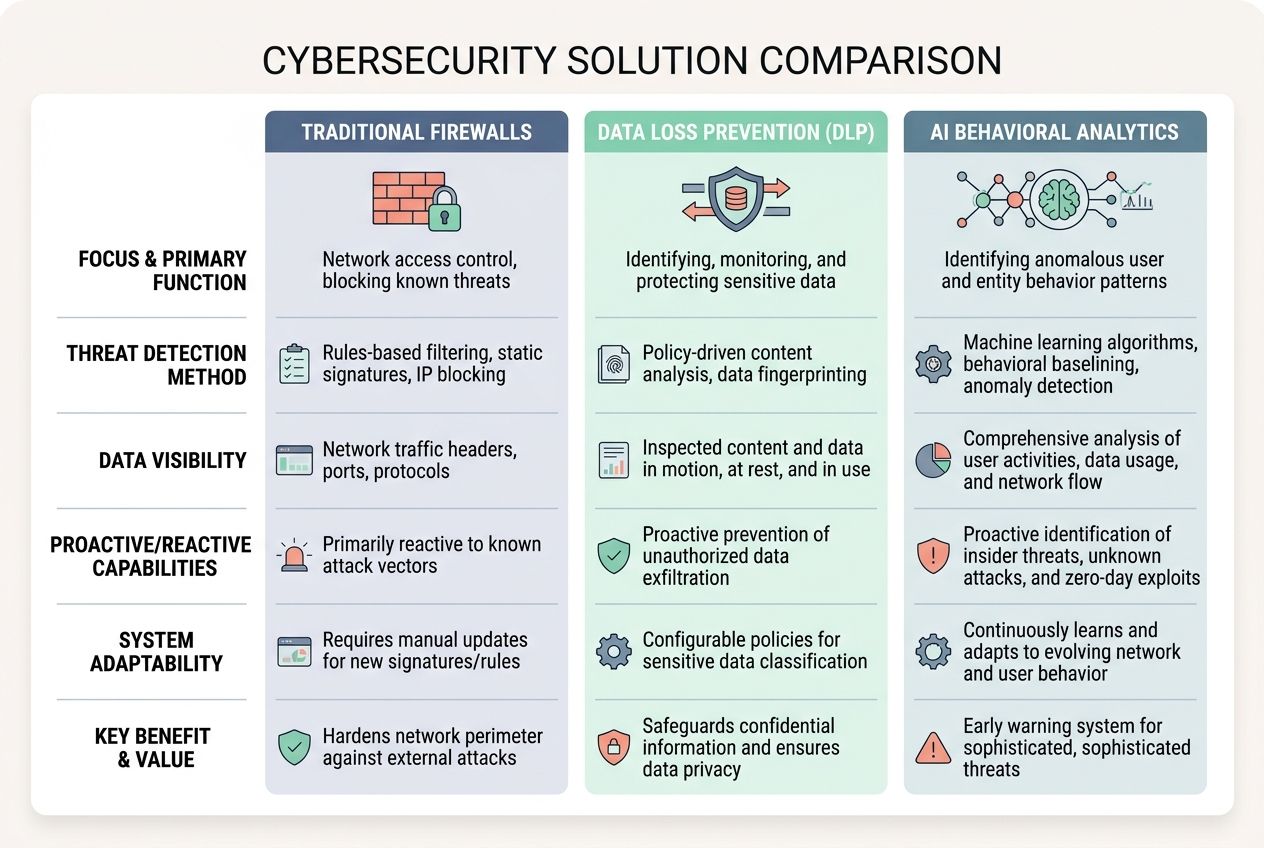

| Monitoring Tool Type | Primary Function | Best For |

|---|---|---|

| Traditional Firewalls | Blocks known bad IP addresses | Basic network defense |

| Data Loss Prevention (DLP) | Mask sensitive data before it leaves | Preventing accidental leaks |

| AI Behavioral Analytics | Spot unusual usage patterns instantly | Catching prompt injections |

Best Practices for Safe GPT-5. 4 Usage

Keep your AI sharp and safe by giving it a check-up now and then. Smart habits today mean fewer headaches tomorrow, so make safety part of your daily routine.

Educating Teams on Security Risks

Teams must learn how GPT-5.4 can create privacy risks and data leaks. Share real-life stories about hackers using prompt injection or stealing private data through crafted URLs. Use examples showing that one careless click or input can open doors for cybercriminals.

A 2026 Practical DevSecOps report noted that organizations with formal GenAI governance policies reduce data leakage incidents by up to 46 percent. Simple training works best.

Train your workers to look out for these daily risks:

- Suspicious emails asking them to query the AI.

- Unverified links are shared in chat applications.

- Prompts that request sensitive client information.

Teach workers to spot phishing messages, strange requests, and signs of model hijacking fast. Hold short drills every month. Even a five-minute quiz does wonders for memory retention and teamwork. Clear steps protect both user confidentiality and company information from harm.

Conducting Adversarial Testing

Hackers work around the clock to break security. Adversarial testing lets you spot weak points in GPT-5.4 before bad actors do. Testers send tricky prompts or fake data, trying to fool the system into leaking private info or ignoring access controls. This is often called AI Red Teaming in the security industry.

This is like playing a game of chess with your computer, except each move could reveal a big privacy risk. Use different types of threats during tests, like prompt injection tricks, model hijacking attempts, and crafted URLs made for mischief. Each test gives new insight into gaps in information protection and user confidentiality.

Set up regular attack simulations as part of your cyber safety plan. It beats finding out too late that someone has already picked your lock!

Setting Up Automated Monitoring Systems

Automated monitoring systems work like watchful guards for your data and AI tools. They track user actions, scan network activity, and spot odd behavior before it gets out of hand.

Tools such as Splunk, Datadog, or Microsoft Sentinel can alert you in seconds if someone tries to access private files or tamper with prompts. These monitoring tools help protect against model hijacking and data breaches.

Set alerts for these common warning signs:

- Failed login attempts from unusual locations.

- Sudden spikes in AI model usage during off-hours.

- Strange or unexpected output that violates company policies.

Use dashboards that turn raw logs into simple charts and graphs. That way, anyone on your team can see threats without needing a tech degree. Fast action means less time for bad actors to steal data or cause trouble.

Planning for Incident Response

Good incident management calls for a step-by-step plan, not guesswork. First, set clear roles and actions for your team. Know who handles alerts or locks down access controls if trouble sparks up.

Test the process often with drills, so no one plays catch-up during a real cyber threat or data breach. Quick detection tools help spot odd behavior early.

“A fast response turns a potential disaster into a minor speed bump.”

Store backup copies of key info offline and protect them with strong passwords. Keep contact lists updated in case you need to reach partners or law enforcement fast after an event.

Write down every action taken. This helps improve risk mitigation over time and keeps everyone honest about what happened. Getting smart on prompt response steps makes handling threats a simple best practice routine.

The Future of AI Security with GPT Models

AI threats change fast, almost like a game of cat and mouse. Stay sharp, because the next wave of risks is closer than you think.

Balancing innovation and cybersecurity

New tools like GPT-5.4 spark fresh ideas, yet they bring new privacy risks and data security gaps. Strong access controls help keep threats out, but quick development sometimes skips vital cyber safety checks.

Even the best Artificial Intelligence can slip up if threat assessment gets sloppy or hackers craft clever attacks. Forbes reported that Gartner predicts over 40 percent of agentic AI projects will be canceled by 2027 due to security and trust issues.

Risk mitigation steps, like encryption and constant monitoring, play a big role in protecting user confidentiality while using cutting-edge Machine Learning models.

Too much freedom for users may open doors that should stay locked tight. Good information protection means putting limits in place without killing creativity or speed. Over half of businesses lost sensitive data by skipping basic risk management during AI rollouts. This is a sharp reminder to blend smart Security choices with technical growth at every stage.

Addressing ethical and regulatory concerns

Balancing innovation and cybersecurity gets trickier as GPT-5.4 grows smarter. Tough questions stir up the air. Who controls your data? Is user privacy safe from prying eyes?

New laws, like the European Union’s AI Act, push companies to set clear rules for AI use. In the US, frameworks like the NIST AI Risk Management Framework and ISO 42001 now mandate specific controls for prompt injection prevention. Companies must explain what their models do, check for bias, and report problems fast.

Here are the key compliance areas regulators are watching right now:

- Data Lineage: Proving exactly where your AI training data came from.

- Bias Auditing: Showing that your AI does not discriminate.

- Incident Reporting: Alerting authorities quickly if a data breach occurs.

AI tools can copy voices or mimic writing styles, which sometimes causes trouble with copyright or consent issues. Government agencies demand strict access controls and full records showing who uses smart machines like GPT-5.4.

Regulators want developers to stop models from spreading fake news or making unsafe choices that hurt users. Strong information protection keeps everyone honest and might save someone from a legal headache down the road.

Final Thought: Balancing Innovation with Security

You have seen that The Security Risks of GPT-5.4 Computer Use [And How To Protect Your Data] highlights real threats like data leaks and sneaky attacks. The steps shared here, such as AI sandboxing, input filtering, and strong encryption, are simple to use and make a big difference fast. Taking these actions keeps your data safe while letting you enjoy smarter tech with less worry about privacy issues or hacker threats.

Staying alert protects sensitive details before trouble starts. It is just like locking your door at night to give yourself peace of mind. Stay curious and careful. Think of every smart move as a step closer to a safer future for yourself and those you care about.