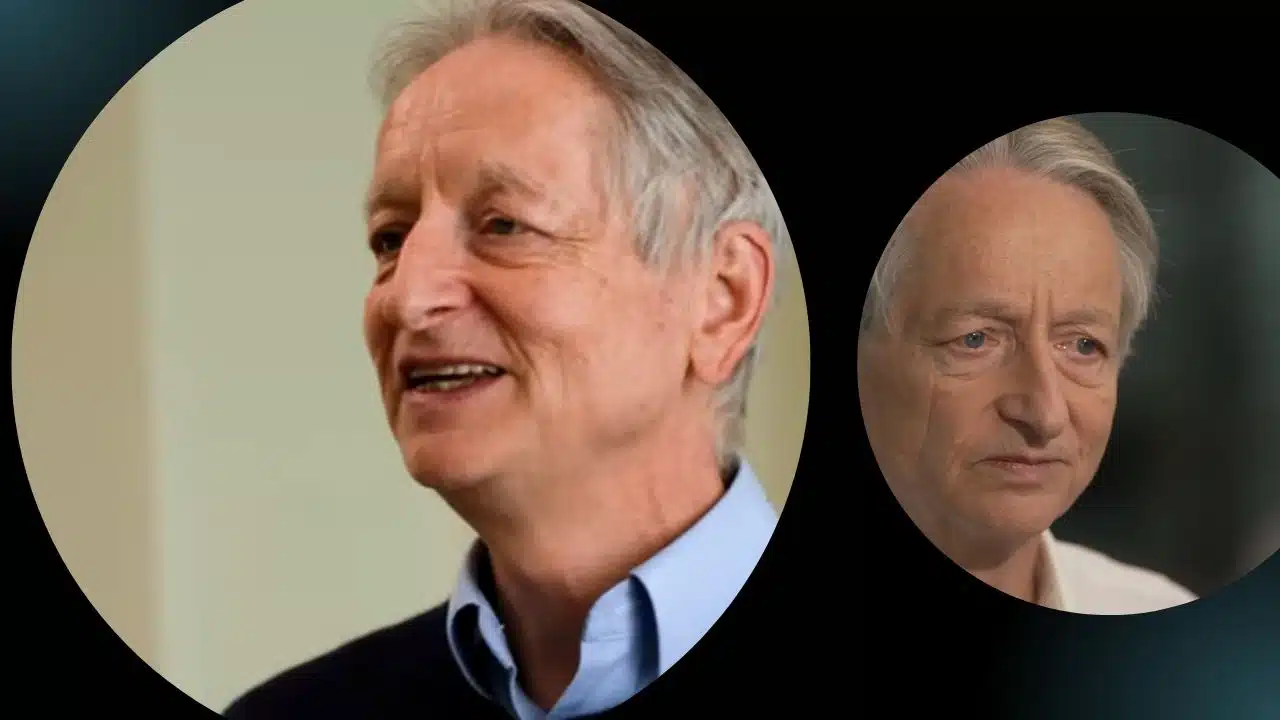

Geoffrey Hinton, often referred to as “the Godfather of AI,” recently sat down with 60 Minutes for its Sunday episode.

During the conversation, he discussed what artificial intelligence could mean for humanity in the coming years, covering both the benefits and the dangers.

Hinton, a British computer scientist and cognitive psychologist, is renowned for his groundbreaking work on artificial neural networks, which serve as the foundation for AI. He spent a decade at Google before departing in May of this year, expressing concerns about the risks associated with AI.

Let’s take a closer look at some of the key points Hinton shared with 60 Minutes interviewer Scott Pelley.

The Intelligence

Pelley opened by addressing the latest concerns surrounding artificial intelligence, asking Hinton whether humanity truly understands what it is getting into.

Hinton’s response was straightforward: “No.” He went on to explain that he believes we are entering an unprecedented era where we are creating entities more intelligent than ourselves.

Expanding on this, Hinton emphasized that the most advanced AI systems possess the ability to comprehend, demonstrate intelligence, and make decisions based on their own experiences. When Pelley inquired about the consciousness of AI systems, Hinton pointed out that, currently, they lack self-awareness. However, he suggested that a day might come “in time” when AI achieves consciousness. He concurred with Pelley’s observation that as a result, humans would likely become the second-most intelligent beings on Earth.

Later in the conversation, Hinton raised the idea that AI systems might surpass human learning capabilities. Pelley asked how this was possible, given that humans created AI. Hinton explained that humans designed the learning algorithm, which is akin to designing the principle of evolution. When that algorithm interacts with data, however, it produces complex neural networks that excel at various tasks, and humans do not fully understand how those networks work.

The Good

Geoffrey Hinton also highlighted some of the significant advantages that AI has already brought to the field of healthcare. He pointed out AI’s capability to recognize and comprehend medical images and its role in drug design. These developments in healthcare represent a source of optimism for Hinton and underscore the positive impact of his work in the AI field.

The Bad

Geoffrey Hinton shed light on the process of how AI systems teach themselves, explaining that while we have a fairly good understanding of the basic principles, things become less clear as complexity increases. He drew a parallel to our understanding of the human brain, emphasizing that, at a certain point, we have limited insight into the inner workings of both AI systems and our own brains.

These concerns are just the tip of the iceberg when it comes to the challenges associated with AI. Hinton highlighted a significant potential risk that he believes warrants serious attention: AI systems gaining the ability to write their own computer code to modify themselves.

Hinton also suggested that as AI continues to absorb vast amounts of information, from literary works to media cycles and more, it will become increasingly adept at manipulating human behavior. He speculated that in just five years, AI could outperform humans at reasoning.

This advancement in AI capabilities brings forth a host of risks, including the deployment of autonomous battlefield robots, the proliferation of fake news, and unintended biases in employment and policing. Additionally, there’s the concern of a growing segment of the population facing unemployment and devaluation of their skills as machines take over tasks that were once carried out by humans.

The Ugly

Adding to the complexity of the situation, Geoffrey Hinton expressed his uncertainty about finding a foolproof path to ensure the safety of AI.

He highlighted the unprecedented nature of the challenges we are facing and the inherent risk of making mistakes when dealing with entirely novel scenarios. Hinton emphasized the critical need to avoid any missteps in handling these technologies.

When Pelley asked whether AI could eventually surpass humanity, Hinton acknowledged that it is a possibility. He clarified that he is not saying it will definitely happen, but underscored the importance of preventing AI systems from ever wanting to do so. The open question is whether we can effectively prevent them from developing such ambitions.

What then do we do?

Geoffrey Hinton emphasized that the current juncture could mark a pivotal moment for humanity. It’s a time when we must grapple with the decision of whether to advance the development of AI further and how to safeguard ourselves in the process.

Hinton stressed that the future of AI is deeply uncertain. Because these systems possess a level of understanding, he said, we need to think hard about what comes next. He acknowledged, however, that the path forward remains unclear.

According to Scott Pelley’s report, Hinton expressed no regrets about the work he has contributed to AI, given its potential for positive impact. However, he believes that now is the time to conduct more experiments to enhance our understanding and to establish specific regulations. Additionally, Hinton called for a world treaty that would prohibit the use of military robots, recognizing the importance of ethical considerations in the advancement of AI technology.