Have you ever wondered why a certain movie pops up on your screen, or why your loan application was denied while a neighbor’s was approved? Every single day, you interact with apps and websites that make split-second decisions about your life. They filter your job applications, suggest products, and even decide if you qualify for a mortgage. These systems do not always treat everyone fairly, and the rise of AI bias is something we need to talk about.

A recent 2025 study found that algorithms often reject qualified applicants from certain groups simply because the training data carries hidden prejudices. I am going to walk you through exactly how this happens. Grab a cup of coffee, and let’s go through it together so you can understand your rights and protect yourself.

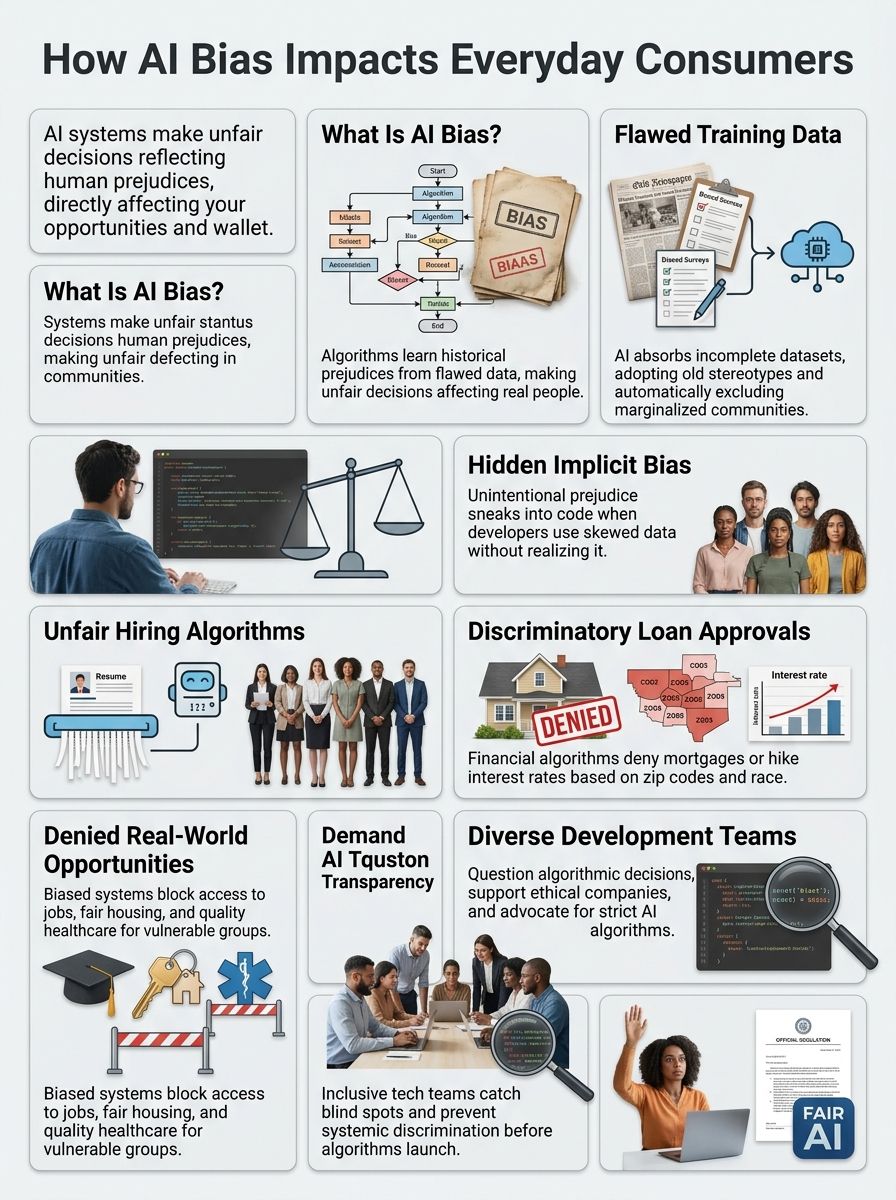

What Is AI Bias?

AI bias happens when artificial intelligence systems make unfair decisions that favor some groups over others. These systems learn from massive amounts of data. When that data reflects old prejudices or leaves out certain people, the AI picks up those same problems.

It then spreads them to millions of users. In 2026, state legislators across the US introduced over 40 bills just to regulate “personalized algorithmic pricing.” This shows how seriously lawmakers are taking the issue of unfair AI practices.

Definition of AI Bias

AI bias occurs when algorithms, the step-by-step instructions that computers follow, learn from data containing human prejudices. Machine learning systems absorb patterns from the information fed into them. If you teach a system using only biased textbooks, it will absorb those same biases.

A 2025 Pew Research survey found that 64% of US adults think AI will lead to fewer jobs. Many respondents expressed deep concern over flawed data making unfair choices.

The system then applies these flawed patterns to real decisions that affect you in hiring, lending, and healthcare. Here is a quick look at how this happens:

- The AI ingests historical data filled with past inequalities.

- The system assumes those past patterns are the correct rules to follow.

- It applies those flawed rules to new, real-world decisions.

- Consumers experience unfair rejections or skewed pricing as a result.
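The four steps above can be sketched in a few lines of Python. This is a toy illustration, not how any real lender works: the records, the neighborhood labels, and the `train` and `decide` functions are all made up for the example.

```python
from collections import defaultdict

# Hypothetical historical decisions: (neighborhood, was_approved).
# Neighborhood "B" was approved far less often in the past.
history = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 30 + [("B", False)] * 70
)

def train(records):
    """Step 1 and 2: ingest past decisions and treat their rates as the rule."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for neighborhood, was_approved in records:
        total[neighborhood] += 1
        approved[neighborhood] += int(was_approved)
    return {n: approved[n] / total[n] for n in total}

def decide(model, neighborhood, threshold=0.5):
    """Step 3: approve a new applicant only if past approvals were common."""
    return model[neighborhood] >= threshold

model = train(history)
for neighborhood in ("A", "B"):
    # Step 4: every new applicant from "B" is rejected, whatever their merits.
    print(neighborhood, model[neighborhood], decide(model, neighborhood))
```

In this sketch the system never looks at the individual applicant. It only repeats what happened to people from the same neighborhood before, which is how a past inequality turns into an automatic rejection.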

How AI Bias Manifests in Everyday Applications

Artificial intelligence systems touch your life more than you might realize. Your phone’s facial recognition feature might struggle to identify your face if you have darker skin tones. Loan companies use machine learning algorithms that reject qualified applicants based on zip codes or names that sound foreign.

Hiring tools scan resumes and automatically filter out candidates from certain schools or backgrounds. The bias is not always intentional, but the impact hits real people in very real ways.

“Data is a reflection of society, and biased data creates biased outcomes.” – Timnit Gebru, AI researcher and founder of DAIR

Social media platforms personalize your feed with machine learning models whose biases can trap you in echo chambers. Healthcare systems deploy AI tools that provide worse treatment recommendations to certain patients. Your user experience changes based on what an algorithm thinks about you.

Common Sources of AI Bias

AI systems pick up bad habits from the people who build them and the information they learn from. Problems start early, when developers feed incomplete or skewed data into machines.

Selection and Sampling Bias

Selection and sampling bias happen when the data used to train artificial intelligence systems does not represent the real world fairly. Imagine a company builds a hiring algorithm using only past employee records from a specific demographic group.

The algorithm learns from this narrow slice of data. It then makes decisions that favor similar candidates in the future. The 2025 Greenhouse AI in Hiring report showed that 35% of US job seekers believe AI just shifted bias from humans to machines because of skewed sampling.

Companies often grab whatever data sits easiest to access. This means real people face real consequences when algorithms make decisions about loan approvals or job applications.

Training Data Limitations

AI systems learn from data, much like how students learn from textbooks. If those textbooks contain errors or skewed information, the students will absorb those mistakes too. Training data limitations create exactly this problem in artificial intelligence.

The MIT “Gender Shades” project found that commercial gender-classification systems from major tech companies misclassified darker-skinned women far more often than lighter-skinned men, with error rates of up to roughly 35% versus under 1%, in part because the training data lacked diversity. These gaps mean the AI system learns to replicate old biases rather than create fair outcomes.

| Good Training Data | Biased Training Data |
|---|---|
| Includes diverse ages, races, and genders. | Relies heavily on a single demographic group. |
| Reflects current societal goals and fairness. | Repeats historical inequalities and past discrimination. |
| Results in accurate outputs for all users. | Causes high error rates for underrepresented groups. |

If a healthcare AI system trains on data from wealthy hospitals only, it may not work well for patients in rural US areas. Developers must actively seek out data from underrepresented populations to fix these imbalances.
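One way to see why a single accuracy number can hide this problem is to break the error rate down by group. The sketch below uses invented test results and hypothetical group labels; it only illustrates the arithmetic, not any real system.

```python
from collections import Counter

# Hypothetical test results: (group, model_was_correct).
results = (
    [("well_represented", True)] * 95 + [("well_represented", False)] * 5
    + [("underrepresented", True)] * 14 + [("underrepresented", False)] * 6
)

def error_rate_by_group(rows):
    """Share of wrong answers within each group."""
    totals = Counter(group for group, _ in rows)
    errors = Counter(group for group, correct in rows if not correct)
    return {group: errors[group] / totals[group] for group in totals}

def overall_error_rate(rows):
    """Share of wrong answers across everyone, ignoring groups."""
    return sum(1 for _, correct in rows if not correct) / len(rows)

rates = error_rate_by_group(results)
print(f"overall: {overall_error_rate(results):.1%}")
for group, rate in rates.items():
    print(f"{group}: {rate:.1%}")
```

With these made-up numbers the overall error rate is about 9%, which sounds acceptable, while the smaller group sees errors 30% of the time. The headline figure is dominated by the group that supplied most of the data.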

Algorithmic Bias

Algorithms make decisions every single day, and they often carry hidden prejudices. Algorithmic bias happens when mathematical formulas favor certain groups over others, usually without anyone noticing.

A hiring algorithm might reject qualified women because it was trained on historical data showing more men in certain jobs. Algorithms used for rent-setting are being heavily scrutinized, with 33 US state bills in 2025 alone targeting algorithmic price-fixing in the housing market.

Machine learning bias spreads these unfair patterns across millions of decisions, making discrimination feel automatic. Transparency in AI systems becomes critical here because you deserve to know why you got rejected for a job or denied a loan.

Human Bias Embedded in AI Development

People who build AI systems carry their own beliefs, experiences, and prejudices into their work. A developer might unconsciously favor certain groups over others while writing code. Teams that lack diversity tend to miss blind spots that could hurt consumers.

If your development team looks the same and comes from similar backgrounds, you will probably build systems that reflect those narrow viewpoints. Discrimination in AI often starts long before any algorithm runs.

To prevent this, tech companies should follow these steps:

- Hire diverse development teams to catch blind spots early.

- Include ethicists and sociologists in the design process.

- Run internal audits before launching a new feature.

- Listen to community feedback when testing new software.

Types of AI Bias

AI bias shows up in different forms. Each one affects how systems treat people in distinct ways.

Implicit Bias

Implicit bias sneaks into AI systems like a shadow you do not notice until the light hits it just right. It operates beneath the surface because the people who build these systems do not realize they are putting it there.

A machine learning model might automatically favor certain groups over others because the training data itself carries hidden prejudices. A 2026 Resume.org survey found that 57% of US companies already use AI in hiring, raising massive implicit bias concerns for everyday applicants.

If a healthcare AI system is trained on patient records from predominantly white populations, it will perform worse for patients of other races. This happens automatically, without anyone making a conscious choice to exclude you.

Explicit Bias

Explicit bias in AI systems occurs when developers or programmers intentionally build discrimination into algorithms. This type of bias reflects deliberate choices, not accidental mistakes. A company might program an AI hiring tool to reject applicants from certain zip codes.

Some early AI models explicitly filtered out ZIP codes in redlined US neighborhoods. This prompted Representative Yvette D. Clarke to introduce the Algorithmic Accountability Act of 2025. This legislation aims to hold companies accountable for intentional discrimination.

“Innovation should not have to be stifled to ensure safety, inclusion, and equity are truly priorities in the decisions that affect Americans’ lives the most.” – Rep. Yvette D. Clarke

Marketing algorithms can deliberately target ads differently based on race or gender, affecting consumer trust. Companies that practice explicit bias face lawsuits, damaged reputations, and a loss of customer loyalty.

Societal Bias Reflected in AI Outputs

Beyond intentional prejudices, society’s existing biases seep into the technology in sneakier ways. If society treated certain groups unfairly for decades, the AI absorbs those patterns like a sponge.

Banks denied loans to people in specific neighborhoods for years. Now, loan approval algorithms repeat that same discrimination.

A March 2026 study in the International Journal of Research and Scientific Innovation noted that Hispanic and Black borrowers in the US face higher mortgage denial rates by AI even when controlling for income. The real problem is that AI amplifies these societal biases at a massive scale.

Here are a few ways societal biases creep into data:

- Historic bank lending practices are digitized as correct data.

- Past hiring preferences for specific demographics are treated as success metrics.

- Unequal access to quality healthcare creates skewed patient records.

- Historical arrest records lead to over-policing in specific neighborhoods.

Real-World Examples of AI Bias

AI bias shows up in real situations that affect your life right now. Companies use biased algorithms for hiring, loans, and healthcare.

Discrimination in Hiring Algorithms

Hiring algorithms promise to find the best candidates fast. The problem is that these algorithms often carry hidden discrimination that favors certain groups over others.

According to a 2025 Greenhouse report, only 8% of US candidates believe AI makes hiring more fair. In fact, a 2024 University of Washington resume screening study found that AI screeners preferred white-associated names 85% of the time.

| Human Bias in Hiring | AI Bias in Hiring |
|---|---|
| One manager might reject a great resume based on a name. | The system automatically rejects thousands of similar resumes. |
| Bias is localized to a single department or company. | Bias is scaled across entire industries using the same software. |
| Can be addressed through personal feedback and training. | Requires complex algorithmic audits and data retraining. |

Consumer rights matter here, since job seekers deserve transparency about how machines judge their qualifications.
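A simple way auditors can probe for name-based preference is a swap test: score the same resume twice, changing only the name, and compare. The sketch below is hypothetical throughout. `biased_score` is a deliberately unfair stand-in for a black-box screener, and the placeholder names are invented for the example.

```python
def biased_score(resume):
    """Stand-in for a black-box screener; deliberately biased for the demo."""
    score = 10 * resume["years_experience"]
    if resume["name"] == "Applicant X":  # hidden preference for one name
        score += 15
    return score

def name_swap_gap(score_fn, resume, name_a, name_b):
    """Score an otherwise identical resume under two names; return the gap."""
    score_a = score_fn({**resume, "name": name_a})
    score_b = score_fn({**resume, "name": name_b})
    return score_a - score_b

resume = {"name": "", "years_experience": 5, "degree": "BSc"}
gap = name_swap_gap(biased_score, resume, "Applicant X", "Applicant Y")
print(gap)  # any nonzero gap means the name alone changed the score
```

Because nothing but the name differs between the two runs, a nonzero gap points at the name itself. A real audit would repeat this over many resumes and many names before drawing conclusions.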

Racial Bias in Facial Recognition

Facial recognition systems struggle with accuracy when they encounter people with darker skin tones. Studies show that these systems misidentify Black faces at much higher rates than white faces.

A May 2025 UPenn study confirmed that facial recognition performance degrades drastically with poor image conditions. This degradation disproportionately affects marginalized groups and creates real dangers in law enforcement.

Because of these risks, the New York State Senate advanced the Facial Recognition Technology Study Act (S3699) in March 2026. The act calls for a study of the specific privacy and civil liberties concerns these technologies raise, so that you can receive fair treatment from them.

Healthcare Inequalities Caused by AI Systems

AI systems in healthcare can amplify existing inequalities, leaving some patients behind. Algorithms trained on data from predominantly white populations often perform poorly for other racial groups.

In January 2025, the US FDA issued draft guidance for AI-enabled medical devices. This guidance calls for a Predetermined Change Control Plan (PCCP) to monitor bias over time, which is a significant step toward protecting patients.

Here is why flawed algorithms directly harm real people:

- They miss critical diagnoses in underrepresented groups.

- They delay essential treatments based on faulty risk scores.

- They allocate hospital resources unfairly.

- They provide worse care recommendations to rural populations.

Biased Loan Approvals and Financial Services

Financial services face the same discrimination problems that plague healthcare systems. Banks rely on machine learning algorithms to decide who gets loans, credit cards, and mortgages.

The US Consumer Financial Protection Bureau (CFPB) has been actively monitoring AI in lending to ensure algorithms do not violate fair lending laws. A person’s zip code or employment history might trigger biased outcomes that have nothing to do with their actual ability to repay.

Approved applicants from marginalized communities often receive worse loan terms or higher interest rates than equally qualified borrowers. You deserve to know how algorithms evaluate your financial responsibility.

Why AI Bias Matters to Consumers

AI bias shapes your daily life, affecting everything from job opportunities to loan approvals. Here is why this directly impacts you.

Ethical Concerns and Social Inequity

Ethical concerns about artificial intelligence systems run deep because these tools make major decisions that affect real people’s lives. Algorithms approve loans and hire workers, yet many people never know they exist.

“The Algorithmic Accountability Act provides the American people with the ability to ensure that not only do these systems work as intended but their functionality is in compliance with US law.” – Mutale Nkonde, AI For The People

This lack of transparency creates a serious problem where you cannot challenge decisions that impact your future. Consumer rights demand that companies explain their decision-making processes and take responsibility for harmful outcomes.

Potential for Misinformation and Harmful Outcomes

Biased AI systems spread false information faster than truth can catch up. A hiring algorithm trained on flawed data might reject qualified candidates from certain groups, costing them real paychecks.

A 2025 Pew Research survey found that 66% of US adults and 70% of AI experts are highly concerned about getting inaccurate information from AI. These outcomes are not just numbers on a screen.

AI systems that misidentify faces in criminal investigations can lead police to the wrong suspects. Healthcare algorithms that underestimate disease risk leave patients without proper treatment.

The harm compounds when you do not even know that bias shaped the decision affecting you. Machine learning bias hides inside recommendations and search results.

Impacts on Access to Services and Opportunities

AI bias creates real barriers that shut people out of critical services. Loan companies use algorithms that reject qualified applicants from certain neighborhoods.

States are fighting back. California’s AB446 and Colorado’s HB1264 bills from 2025 aim to restrict “surveillance pricing,” where algorithms charge you more based on your data.

Here is how AI limits your opportunities:

- Job platforms steer you away from high-paying positions.

- Rental platforms deny housing applications using biased data.

- Insurance companies charge you higher premiums unfairly.

- Educational technology limits advanced learning opportunities.

Strategies to Mitigate AI Bias

Companies and developers can fight AI bias through practical steps that catch problems before they hurt real people. Smart fixes work best when teams act fast and stay honest.

Diversifying AI Development Teams

Diverse teams bring different perspectives to the table, which helps catch bias before it harms consumers. Organizations like AI4All are working hard to increase representation in the tech industry.

- Hire people from underrepresented groups in tech to create immediate benefits for algorithmic fairness.

- Recruit developers with varied life experiences to help identify discrimination in AI early.

- Partner with universities in diverse communities to build a larger talent pool.

- Involve diverse developers in testing phases to ensure customization works fairly for everyone.

Using Fair and Inclusive Training Data

Training data shapes how AI systems make decisions about you every single day. Companies must collect information that represents all people fairly.

The NIST Facial Recognition Technology Evaluation now actively tracks demographic differentials to push for fairer datasets.

- Collect data from many different sources and populations to avoid leaving out communities.

- Include people of different races, ages, and genders in your training datasets.

- Check your data for gaps and missing information, particularly in underrepresented groups.

- Remove outdated information that reflects old stereotypes and harmful assumptions.

- Audit your training data regularly for signs of skew or imbalance.
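Checking a dataset for gaps can start with something as basic as comparing each group’s share of the data against its share of the people the system will serve. The sketch below is a minimal illustration: the age bands, the counts, the reference shares, and the 5-point tolerance are all assumptions chosen for the example.

```python
from collections import Counter

def representation_gaps(samples, reference_shares, tolerance=0.05):
    """Return groups whose share of the data falls short of the
    reference share by more than the tolerance."""
    counts = Counter(samples)
    total = len(samples)
    gaps = {}
    for group, expected in reference_shares.items():
        actual = counts.get(group, 0) / total
        if expected - actual > tolerance:
            gaps[group] = round(expected - actual, 3)
    return gaps

# Hypothetical age bands for 1,000 training records.
training_ages = ["18-34"] * 600 + ["35-64"] * 370 + ["65+"] * 30
# Hypothetical shares in the population the system will serve.
population = {"18-34": 0.30, "35-64": 0.50, "65+": 0.20}

print(representation_gaps(training_ages, population))
```

Here the two older age bands come back as underrepresented, by 13 and 17 percentage points. A check like this does not prove the resulting model is unfair, but it tells a team where to collect more data before training.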

Conducting Regular Audits and Bias Impact Assessments

Companies need to check their AI systems regularly to catch bias. New York enacted a law in 2025 requiring state agencies to publish detailed information about their automated decision-making tools on public websites.

- Establish a formal audit schedule that examines AI systems at least quarterly.

- Test algorithms against diverse demographic groups to see if bias shows up differently.

- Collect data on outcomes for different user groups and compare results side by side.

- Document how AI recommendations affect real people, including tracking denied applications.

- Bring in outside experts who are not part of the original development team for a fresh perspective.
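Comparing results side by side often comes down to one simple calculation: the approval rate for each group, and how each rate compares to the best-treated group. The sketch below uses invented audit counts and hypothetical group names. The 0.8 cutoff borrows the “four-fifths” rule of thumb from US employment guidelines and is used here only as an assumed threshold, not a legal test.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (number approved, number of applicants)."""
    return {
        group: approved / applicants
        for group, (approved, applicants) in outcomes.items()
    }

def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    best = max(rates.values())
    return {group: rate / best for group, rate in rates.items()}

# Hypothetical quarterly audit counts.
outcomes = {"group_a": (120, 200), "group_b": (45, 150)}

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
# Assumed threshold: flag any group below four-fifths of the top rate.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]

print(rates)
print(ratios)
print(flagged)
```

In this example group_b is approved at half the rate of group_a, so it is flagged for review. A flag is a prompt to investigate why the gap exists, not proof of discrimination on its own.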

Implementing Transparent and Explainable AI Systems

Transparent AI systems show you exactly how machines make decisions that affect your life. In 2026, Illinois introduced the Algorithmic Pricing Transparency Act (HB 4248) to give consumers a non-personalized baseline price and an explanation of the algorithm.

- Document every step of how algorithms work so people understand the reasoning behind outcomes.

- Allow users to ask questions about decisions and receive clear answers in plain language.

- Create dashboards that display how machine learning processes information about individual consumers.

- Publish regular reports showing whether algorithms treat different groups fairly.

- Help consumers spot errors, challenge wrong decisions, and request corrections.

The Role of Consumers in Addressing AI Bias

You hold real power to fight AI bias. Your actions matter, and you can make a huge difference in how companies operate.

Raising Awareness of Bias in AI Systems

Consumers need to understand how artificial intelligence shapes their daily lives. Consumer Reports has been actively tracking state-level algorithmic pricing legislation to keep the public informed.

- Share stories about discrimination in AI systems with friends and family.

- Learn how facial recognition technology has failed people of color and educate others.

- Read reports from organizations tracking bias in automation and machine learning.

- Ask companies directly about their data representation practices.

- Challenge marketing claims that promise perfect personalization without proof.

Advocating for Ethical AI Practices

Raising awareness opens doors to real change, but advocacy transforms that knowledge into action. For example, US citizens can contact their representatives to advocate for the Algorithmic Accountability Act.

- Contact tech companies directly and ask them about their bias testing procedures.

- Support legislation that holds AI developers accountable for discrimination.

- Join consumer advocacy groups focused on algorithmic fairness.

- Vote with your wallet by choosing companies that prioritize ethical AI development.

- Demand transparency from apps and services you use daily.

Supporting Organizations That Prioritize Fair AI Development

Your choices matter when you support companies committed to fair artificial intelligence practices. Groups like the Algorithmic Justice League provide excellent resources for finding ethical tech providers.

- Research companies that publish transparency reports about their bias detection efforts.

- Look for organizations that hire diverse teams across engineering and leadership roles.

- Choose services from businesses that conduct regular algorithmic fairness audits.

- Favor companies offering explainable artificial intelligence features.

- Support brands that invest in inclusive training data collection.

The Future of AI and Bias Mitigation

Tech companies and governments are building new tools right now to catch AI bias before it harms people. These tools will make systems more trustworthy for everyone.

Advancements in Bias Detection Technologies

Researchers and companies now build smarter tools to catch bias before it harms people. These detection systems scan machine learning models and flag problems in real time.

Major tech players have released open-source bias detection platforms that anyone can access, like IBM’s AI Fairness 360 toolkit and Google’s What-If Tool. These tools work like a safety net, catching unfair outcomes before they reach consumers in hiring decisions or loan approvals.

Artificial intelligence companies also employ data scientists who specialize in algorithmic fairness. They test facial recognition systems on people with different skin tones, ensuring the technology works equally well for everyone.

Policy and Regulation for Ethical AI Development

Technology companies alone cannot fix AI bias. Governments must step in and create clear rules. The National Conference of State Legislatures reported that US states introduced over 100 AI-related measures in 2025 alone. This wave of legislation pushes organizations to prioritize ethical AI development and consumer rights.

Without rules, companies cut corners to save money. With rules, they invest in better data representation and algorithmic fairness. Transparency in AI becomes mandatory under new policies.

Building Trust in AI Through Accountability

Accountability acts as the foundation that holds AI systems responsible for their actions. Companies must open their doors to external audits and share how their algorithms make decisions.

A 2025 Greenhouse study revealed that 87% of US job seekers say it is extremely important for employers to be transparent about their AI use. This openness builds consumer trust because people know someone is checking for problems.

Accountability also requires real consequences for bad outcomes. If an AI system denies housing to people based on hidden racial patterns, that company must face penalties and fix the problem.

Final Words

AI bias shapes your daily life in ways you might not see coming. It affects your job applications, your loan decisions, and even your medical diagnoses. You have learned that bias sneaks into AI systems through flawed training data and homogeneous development teams.

Fixing this problem requires practical action from the companies building these tools. Support companies committed to fairness, and spread awareness about why this matters to everyone around you.

FAQs on AI Bias

1. What is AI bias, and how does it affect everyday consumers?

AI bias happens when computer programs make unfair choices because they learn from flawed historical data, which can directly hurt your wallet. For example, a National Bureau of Economic Research study found that AI mortgage algorithms often charge minority borrowers higher interest rates than white borrowers with the exact same credit scores. This means regular folks are unfairly penalized by the very technology designed to make decisions faster.

2. Why should regular shoppers care about the rise of AI bias?

You should care because retailers are increasingly using a tactic called surveillance pricing to charge you more based on your personal data. A 2025 Federal Trade Commission report warned that smart systems analyze your browsing history and location to quietly raise the price of simple items just for you. If these systems are biased, you could easily miss out on fair offers and pay inflated prices for a pair of shoes without ever realizing it.

3. Can AI bias show up in places other than shopping?

Yes, it pops up everywhere from loan approvals to job searches, often picking favorites without meaning to. A 2024 University of Washington study actually revealed that popular AI resume screeners preferred candidates with white-associated names 85 percent of the time. This hidden bias means someone else might get picked over you for reasons that are completely unfair.

4. How can I spot if an app or website uses biased AI?

You can spot hidden AI bias by watching for suspicious patterns, like a travel website suddenly raising flight prices after your third search. You can test this by checking the exact same route on a friend’s phone. If the price differs, the system may be targeting your specific profile.

5. What are the three sources of bias in AI?

Three common sources of bias in AI are the training data itself, errors in how the algorithm processes that data, and human bias introduced by the people who build the system.