
The AI race may have only just started, but AI and machine learning have been around for longer than most people think. Today, AI systems play an important role in many fields.

They speed up research and development across a wide range of industries, including healthcare, national security, logistics, banking, retail, and more.

AI has a long and interesting history. Here are some of the key milestones that led to the advanced AI models we have today.

1300-1900: Tracing the Roots of AI

AI has been discussed since the late Middle Ages, but electronic computers didn’t appear until the mid-20th century. Scholars often speculated about what thinking machines might do. Of course, they didn’t have the tools or skills to turn their ideas into reality.

- 1305: Ramon Llull, a Catalan philosopher and theologian, began Ars Generalis Ultima, the final version of his Ars Magna. The work describes mechanical methods for conducting logical interreligious dialogues, using diagrams and rotating paper disks to derive new propositions from existing knowledge. This process loosely resembles how AI systems draw new outputs from the knowledge they are trained on.

- 1666: Gottfried Leibniz’s Dissertatio de arte combinatoria draws inspiration from Ars Magna. It is not a machine but a treatise proposing that complex ideas be broken down into their simplest parts so they can be analyzed and recombined systematically. These broken-down elements are somewhat like the datasets that AI writing tools learn from.

- 1726: Jonathan Swift’s Gulliver’s Travels describes the Engine, a fictional machine that produces random combinations of words. With it, even “the most ignorant person” could write books on philosophy, poetry, politics, and other subjects. The idea anticipates, in a rough way, what generative AI does today.

- 1854: George Boole, an English mathematician, argues in The Laws of Thought that logical reasoning can be expressed algebraically, much like arithmetic. In his view, mathematics could be used to derive ideas and solve problems systematically. Boolean logic later became the foundation of digital circuits, and therefore of the computers that run today’s generative AI.

Although this first period spans six centuries, only a few key events stand out.

1900-1950: The Dawn of Modern AI

During this time, technology progressed quickly. Researchers could finally test their theories, ideas, and speculations with working machines, laying the groundwork for the science of cybernetics.

- 1914: Spanish civil engineer Leonardo Torres y Quevedo built El Ajedrecista (“The Chess Player”), an early example of automation. It played a king-and-rook endgame, using its own king and rook to checkmate a human opponent’s lone king.

- 1943: Walter Pitts and Warren McCulloch created a mathematical model of the biological neuron that could compute simple logical functions. Researchers built on this model for decades, and it helped pave the way for the neural networks and deep learning tools we use today.

- 1950: Alan Turing published Computing Machinery and Intelligence, one of the first research papers to examine artificial intelligence, although he didn’t coin the term AI. Instead, he wrote about “machines” and “computing machinery.” The paper mainly asks whether machines can think and reason logically.

- 1950: In the same paper, Turing introduced the Turing Test, which he called the imitation game. It remains one of the oldest and best-known ways to judge, through questioning, whether a machine can exhibit human-like intelligence.

Alan Turing’s work and the Turing Test, which tries to answer the question “Can machines think?”, mark the beginning of modern AI.

1951-2000: Exploring the Applications of AI Technologies

During this time, the term “artificial intelligence” was coined. With the foundations in place, researchers began exploring how AI could be applied. Several industries experimented with it, and researchers developed medical, industrial, and logistics applications before the technology reached the public.

- 1956: Alan Turing and John von Neumann had already explored ways to build machines that could reason logically, but the term “artificial intelligence” did not exist yet. John McCarthy coined it in a 1955 proposal, written with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, for the Dartmouth Summer Research Project on Artificial Intelligence, which took place in 1956.

- 1966: At the Stanford Research Institute, a team led by Charles Rosen began building Shakey the robot. It is often described as the first “intelligent” mobile robot: it could carry out simple tasks, recognize patterns, and plan its own route.

- 1997: IBM’s chess-playing computer Deep Blue defeated reigning world champion Garry Kasparov in a six-game match. It was the first time a computer had beaten a world champion in a match under standard tournament conditions.

The coining of the term “artificial intelligence” was one of the most important milestones of this middle period of AI’s development.

2001-2010: Integrating AI Into Modern Technologies

Consumers gained access to new, cutting-edge tools that made their lives easier, and they adopted them gradually. The iPod replaced the Sony Walkman, home gaming consoles pushed out arcades, and Wikipedia overtook the Encyclopedia Britannica.

- 2001: Honda developed ASIMO, a two-legged humanoid robot controlled by AI that could walk, climb stairs, and move around its surroundings. ASIMO was never sold to the public; Honda mainly used it as a research platform for mobility, machine learning, and robotics.

- 2002: iRobot released the Roomba, a robotic floor-cleaning vacuum. Although its purpose is simple, it relies on sensors and navigation algorithms far more sophisticated than those of earlier household appliances.

- 2006: Oren Etzioni, Michele Banko, and Michael Cafarella of the University of Washington’s Turing Center wrote a seminal paper on machine reading. It explores how a system can understand text on its own, without human supervision.

- 2008: Google released a speech-recognition app for iOS. It was accurate 92 percent of the time, compared with about 80 percent for earlier systems.

- 2009: Google began developing its self-driving car. After several years of work, the car passed a state-level test of its ability to drive itself. Competitors would later use AI to build self-driving cars of their own.

Even though this period produced some of the most famous technology of recent decades, AI wasn’t yet on most people’s minds. Personal and home assistants like Siri and Alexa didn’t arrive until the next period.

2011-2020: The Spread and Development of AI-Driven Applications

During this time, companies began building AI-driven products that worked well. They added AI to virtual assistants, grammar checkers, computers, smartphones, and augmented reality apps.

- 2011: IBM built Watson, a question-answering computer system. To show what it could do, the company pitted it against two former Jeopardy champions, and Watson won.

- 2011: Apple launched Siri, an AI-powered virtual assistant that iPhone users still rely on today.

- 2012: Researchers at the University of Toronto built AlexNet, a large-scale image recognition system that was accurate about 84 percent of the time. For comparison, earlier approaches made mistakes roughly 25 percent of the time.

- 2016: Google DeepMind’s AlphaGo, a computer system trained to play Go, faced Lee Sedol, one of the world’s top Go players, in a five-game match. AlphaGo won four of the five games, showing that well-trained AI systems can outperform even highly skilled experts in their own domains.

- 2018: OpenAI released GPT-1, the first model in its GPT family of language models. It was pretrained on the BookCorpus dataset. The model could generate human-like text and, after fine-tuning, answer questions.

During this time, people probably used AI apps without even realizing it, although image and voice recognition tools were still new to most people. AI research accelerated toward the end of the decade, but not nearly as much as it would in the years that followed.

2021-Present: Global Tech Leaders Kick off the Great AI Race

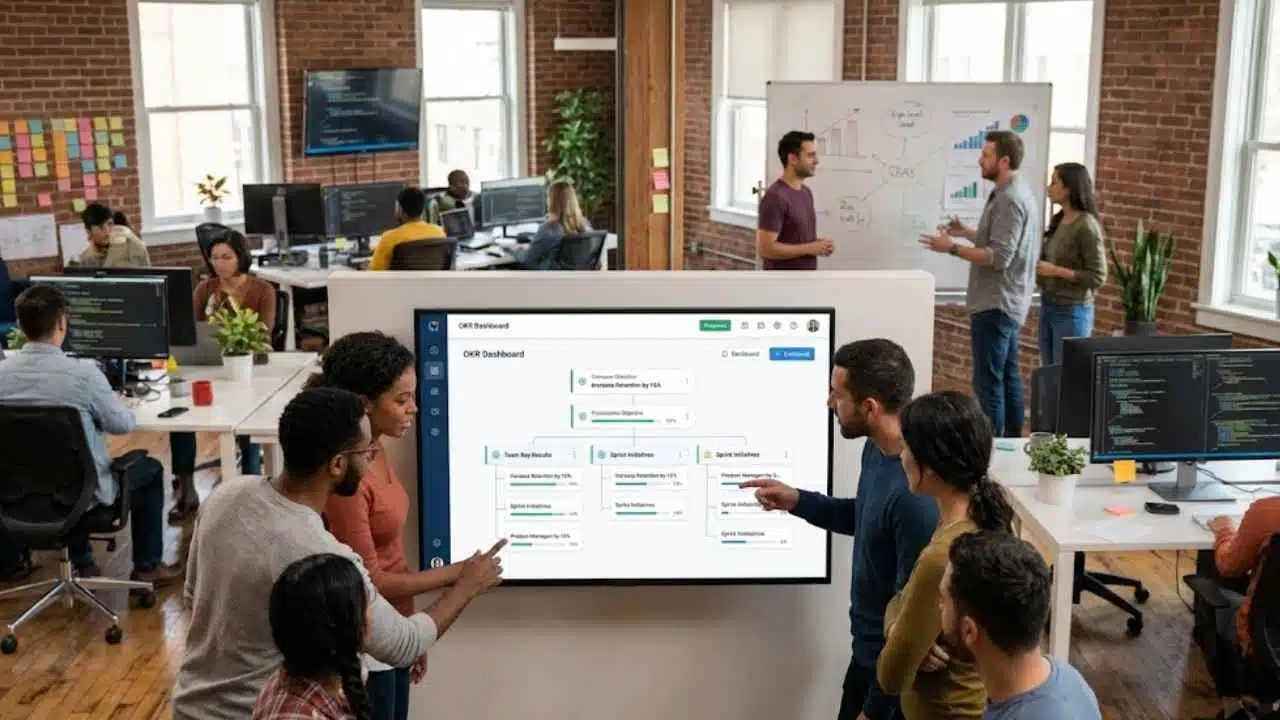

The great AI race has begun. Developers are releasing language models, and companies are exploring ways to add AI to their products. At this rate, most of the products people buy may soon include some form of AI.

- 2022: OpenAI made headlines with ChatGPT, an AI-driven chatbot that runs on GPT-3.5, a successor to the GPT model the company released in 2018. The GPT-3 model it builds on was trained on about 300 billion tokens (words and word fragments) of text.

- 2023: Other tech companies around the world followed suit. Google released Bard; Microsoft launched Bing Chat; Meta released LLaMA, a language model it made available to researchers; and OpenAI released GPT-4, the next version of its model.

Many AI web apps and AI-based health apps are already available or in development, and many more are on the way.

How Will AI Shape the Future?

AI is about more than chatting and generating images. It helps drive progress in many areas, such as global security and marketing technology, and it can help you in more ways than you might think. So, instead of rejecting publicly available AI systems, learn how to use them yourself.

Simple AI tools like ChatGPT and Bing Chat can help you speed up your research, so consider making them part of your everyday routine. Powerful language models can draft difficult letters, suggest SEO keywords, help with math problems, and answer general-knowledge questions.