Have you ever stopped mid-meeting and thought, “Wait, should we really be using AI to make this decision?” You’re not the only one asking that question right now.

Business leaders across the US are adopting generative AI at a record pace. According to a 2025 study by the University of Melbourne and KPMG, 66% of people globally use AI regularly. Yet trust in these systems remains low, with only 46% of people willing to trust AI systems. That gap between adoption and trust is where ethics lives.

The good news? Getting this right is more straightforward than it sounds.

This guide walks you through what the ethics of generative AI actually mean for your business, what risks to watch, and the practical steps you can take to use AI with integrity every single day.

Understanding Generative AI

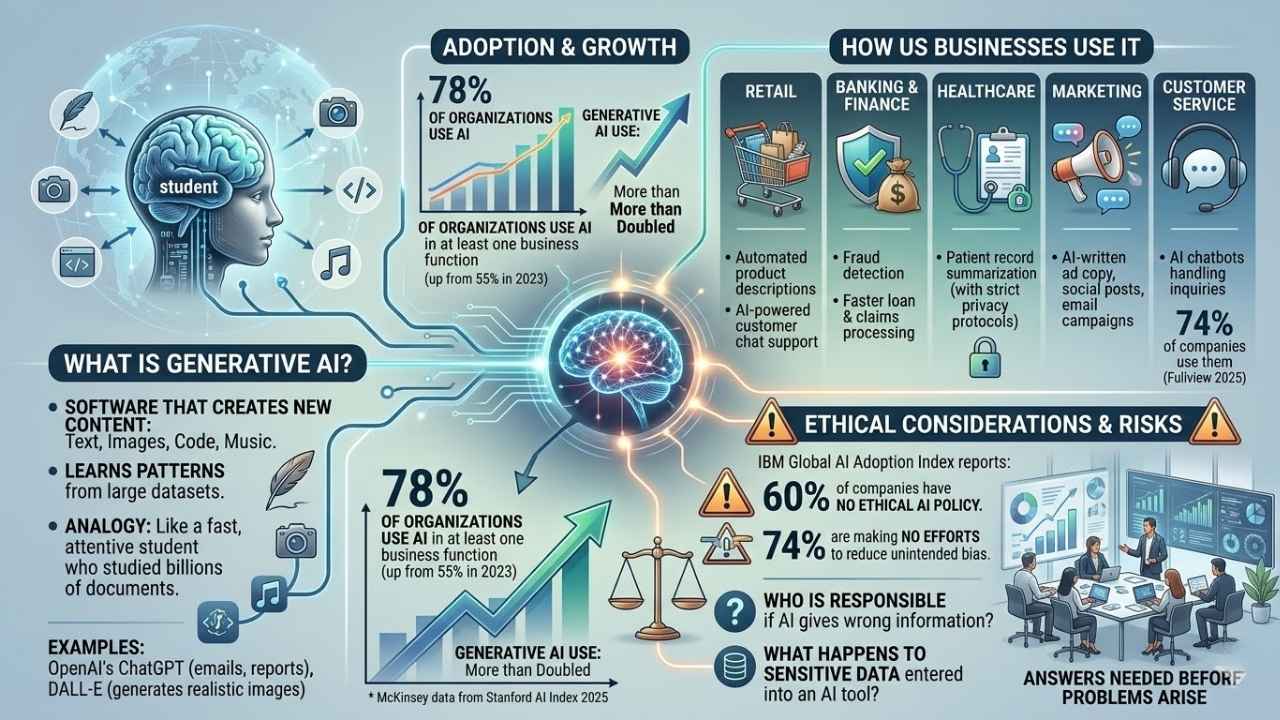

Generative AI is software that creates new content, including text, images, code, and music, by learning patterns from large datasets. Think of it as a very fast, very attentive student that studied billions of documents and can now produce original-looking work on demand.

What Is Generative AI?

Tools like OpenAI’s ChatGPT can write emails, answer customer questions, and draft reports in seconds. DALL-E, also from OpenAI, generates realistic images from simple text prompts. These are the types of tools many US businesses are already using daily.

The adoption numbers are striking. According to McKinsey data highlighted in the 2025 Stanford AI Index Report, 78% of organizations now use AI in at least one business function, up from just 55% in 2023. Generative AI, specifically, more than doubled in that same period.

That kind of growth is exciting. It also means the ethical considerations can’t wait.

According to an IBM Global AI Adoption Index report, 60% of companies using AI have no ethical AI policy, and 74% are making no efforts to reduce unintended bias in their systems.

How Businesses Use It

The applications are wide-ranging. Here’s how different industries in the US are already putting generative AI to work:

- Retail: Automated product descriptions and AI-powered customer chat support.

- Banking and finance: Fraud detection and faster loan or claims processing.

- Healthcare: Patient record summarization, with strict privacy protocols governing how models are trained.

- Marketing: AI-written ad copy, social posts, and email campaigns.

- Customer service: AI chatbots handling inquiries, with 74% of companies now using them according to Fullview’s 2025 AI statistics report.

Every single one of these use cases comes with ethical questions. Who is responsible if the AI gives a customer wrong information? What happens to sensitive data entered into an AI tool? These aren’t hypothetical worries. They are questions your team needs answers to before problems arise.

Key Ethical Principles for Generative AI

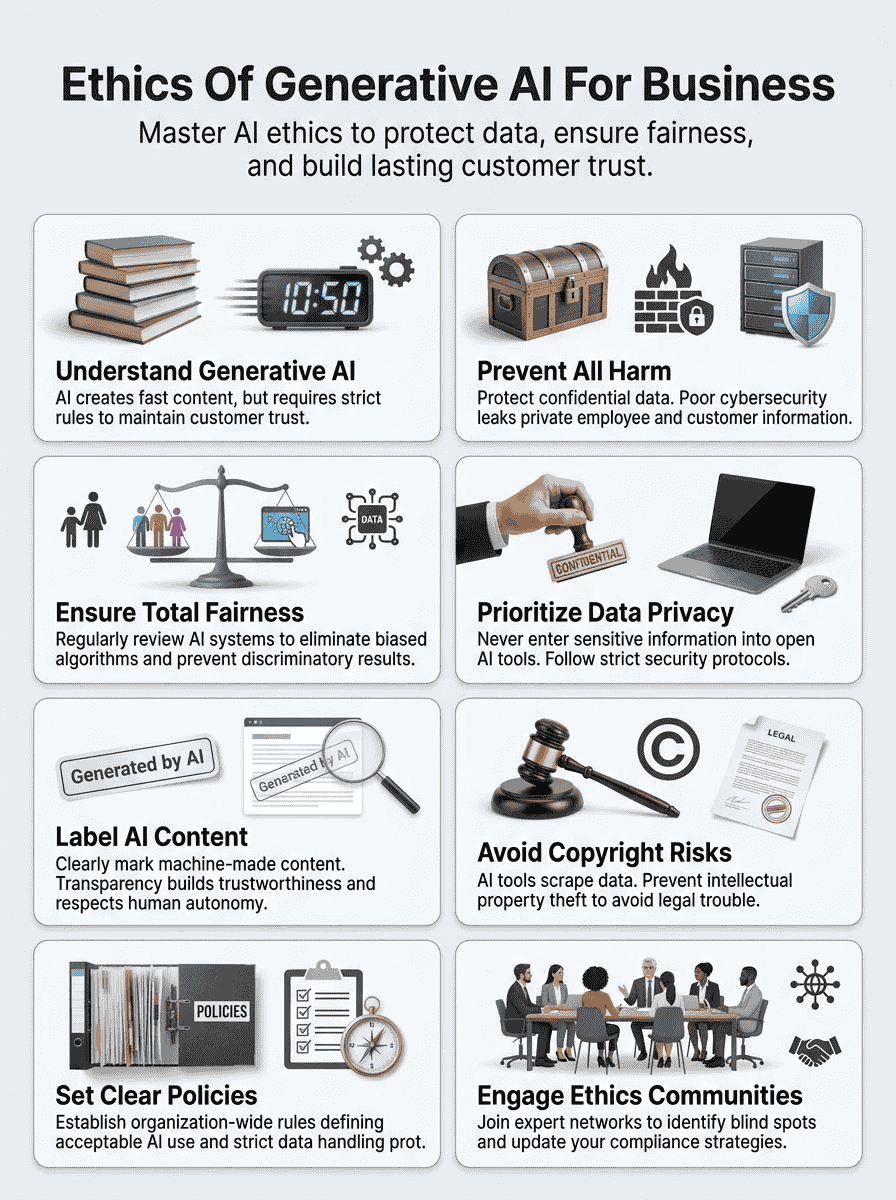

Ethical guidelines shape how artificial intelligence affects people’s lives, work, and privacy every day. With responsible actions and clear policies, businesses can build trust, promote fairness, and protect both data privacy and integrity in everything they do.

Do No Harm

The first and most fundamental principle is simple: AI must not create risks or cause harm to people. In practice, this means thinking through the consequences of every AI deployment before it goes live.

Consider a business entering confidential employee files into a public AI tool. If that tool stores your data or uses it for model training, sensitive information can be exposed. This isn’t a scare tactic. It’s a documented pattern of what happens when companies move fast without a plan.

Cybersecurity is a core part of this principle. The 2025 Stanford AI Index Report found that AI-related incidents jumped by 56.4% in a single year, with 233 reported cases in 2024 alone. The incidents range from data breaches to algorithmic failures that expose sensitive information.

Strong security practices, clear labeling of AI-generated content, and regular checks on how your AI tools handle data are the practical ways to live this principle every day.

Be Fair and Unbiased

Bias is one of the sneakiest problems in generative AI. An algorithm learns from historical data. If that data reflects past patterns of unfairness, the AI copies those patterns, and often amplifies them.

A 2025 study published through VoxDev found that AI hiring tools systematically favored some applicants over others with identical qualifications. The issue has moved beyond research and into real courtrooms. In May 2025, a federal court in California conditionally certified a collective action in Mobley v. Workday, Inc., allowing the age-discrimination claims to proceed on behalf of similarly situated job applicants. The lawsuit alleges that Workday’s AI screening tool discriminated against applicants based on age, race, and disability.

The legal exposure here is very real. In New York City, a local law already requires annual bias audits for any automated employment decision tool. Colorado’s AI Act, effective in 2026, requires developers and deployers of AI hiring tools to use “reasonable care to prevent algorithmic discrimination.”

What can you do right now? Three practical actions stand out:

- Audit regularly: Run bias checks on your AI tools, especially those used in hiring, lending, or customer decisions.

- Use diverse training data: Work with vendors to understand what data their models were trained on.

- Keep humans in the loop: For high-stakes decisions, a human should review AI recommendations before action is taken.
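The third action can be made concrete in software. Here is a minimal sketch, assuming a hypothetical `Recommendation` record and an illustrative set of high-stakes categories (neither comes from any particular product). Low-stakes recommendations pass through, while anything touching hiring, lending, or similar decisions is held until a named person signs off.

```python
from dataclasses import dataclass

# Illustrative categories only; each business would define its own list.
HIGH_STAKES = {"hiring", "lending", "termination"}


@dataclass
class Recommendation:
    category: str        # e.g. "hiring" or "faq_reply"
    ai_output: str       # what the AI tool suggested
    reviewer: str = ""   # human who approved it; empty means nobody yet


def can_act_on(rec: Recommendation) -> bool:
    """Return True only if it is acceptable to act on the recommendation.

    High-stakes categories require a named human reviewer; everything
    else may proceed without one.
    """
    if rec.category in HIGH_STAKES:
        return bool(rec.reviewer)
    return True


pending = Recommendation("hiring", "Advance candidate to interview")
print(can_act_on(pending))     # False: no human has reviewed it yet

pending.reviewer = "j.rivera"  # hypothetical reviewer ID
print(can_act_on(pending))     # True: a named person signed off
```

The point of the design is that the check fails closed: a high-stakes recommendation with no reviewer recorded is never acted on.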

Ensure Data Privacy

Data privacy and generative AI are closely linked, and the stakes are high. According to a 2025 KPMG report, 69% of business leaders cited concerns about AI data privacy, a significant jump from just 43% who raised the same concern in late 2024.

In the US, the regulatory picture is getting more complex. As of 2026, 20 states are now enforcing consumer privacy statutes, according to law firm White & Case. California’s CCPA, Virginia’s CDPA, and growing state-level AI laws all create obligations around how AI systems collect and use personal data.

The practical rule is straightforward: never enter confidential employee or customer information into a public AI tool unless you have confirmed how that tool stores and uses your data. Many popular generative AI platforms use conversation data for model improvement by default, so check the settings and terms carefully.
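For teams that want a technical backstop behind that rule, one option is to scrub obvious identifiers from text before it ever reaches an AI tool. The sketch below is only a starting point: the three patterns are illustrative assumptions, they will miss many real-world formats, and they are no substitute for a dedicated data loss prevention product.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Summarize the complaint from jane.doe@example.com, SSN 123-45-6789."
print(redact(prompt))
# prints: Summarize the complaint from [EMAIL], SSN [SSN].
```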

Protecting privacy isn’t just about avoiding fines. According to Deloitte’s 2025 Connected Consumer survey of 3,524 US consumers, 70% worry about data privacy when using digital services. Companies that handle data responsibly build lasting loyalty. Those that don’t can lose customer trust overnight.

Honor Human Autonomy

People have a right to know when AI is being used to make decisions that affect them. This is especially important in the workplace, where AI might influence performance reviews, scheduling, or even hiring and firing decisions.

According to the 2025 KPMG and University of Melbourne global AI study, 70% of people globally believe AI regulation is needed. That public mandate exists because many people feel they have lost some control over decisions that affect their lives.

Honoring human autonomy means giving people clear, honest information. Tell your customers when a chatbot is not a human. Tell employees when an AI tool is assessing their work. These conversations build trust and respect, and they protect you from legal exposure as disclosure laws expand across US states.

Promote Transparency and Accountability

Clear labeling of AI-generated content builds trust with customers. When a client receives a proposal, an email, or a report, they deserve to know if it was created by a machine.

Accountability means having a named human responsible for each AI system your business uses. Not a committee, not a vague policy. A person who is accountable for what that tool does and for fixing it when something goes wrong.

PwC’s 2025 US Responsible AI Survey found that organizations actively embedding governance into their AI operations are far more effective at communicating their AI priorities. Their research shows that 78% of respondents at the most mature governance stage rate themselves as “very effective” at responsible AI, compared to just 35% at earlier stages.

Posting your AI guidelines publicly, labeling AI content clearly, and holding leadership accountable are not optional extras. They are the foundation of a trustworthy AI strategy.

Risks and Ethical Challenges of Generative AI

Generative AI can introduce risks that quietly undermine trust, data privacy, and fairness. Knowing what to watch for is the first step to staying ahead of these challenges.

Bias in AI Algorithms

AI algorithms do not always act fairly. They learn from data collected by people, and if that data carries bias, the AI will copy and often amplify it. A Stanford study published in October 2025 found that large language models like ChatGPT carry deep-seated biases against older women in the workplace. A separate test from August 2025 found that AI image evaluation tools gave lower “professionalism” scores to images of people with natural Black hairstyles.

These aren’t edge cases. They represent a systemic pattern that can affect hiring, customer service quality, and credit decisions.

Companies must review their AI models regularly. IBM’s AI Fairness 360, an open-source toolkit under The Linux Foundation, is one practical resource that provides over 70 fairness metrics and 10 bias-mitigation algorithms to help businesses assess and reduce bias in machine learning models.
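To give a sense of what a fairness metric measures, here is a minimal sketch of one widely used check, the impact ratio: each group's selection rate divided by the selection rate of the most-selected group. It is written in plain Python and does not use the AI Fairness 360 API. The applicant counts are made up for illustration, and the 0.8 cutoff follows the common "four-fifths" rule of thumb, which is a screening heuristic and not a legal determination.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate relative to the top group.

    `outcomes` maps a group name to (selected, total_applicants).
    Assumes every group has applicants and at least one group has selections.
    """
    rates = {g: sel / total for g, (sel, total) in outcomes.items()}
    best = max(rates.values())
    return {g: rate / best for g, rate in rates.items()}


# Made-up numbers for illustration only.
screening = {"under_40": (60, 100), "40_and_over": (30, 100)}

for group, ratio in impact_ratios(screening).items():
    flag = "review" if ratio < 0.8 else "ok"
    print(f"{group}: {ratio:.2f} ({flag})")
# prints:
# under_40: 1.00 (ok)
# 40_and_over: 0.50 (review)
```

A low ratio does not prove discrimination on its own, but it tells you where a human should look more closely.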

The Bentley University-Gallup Business in Society 2025 survey found that concerns about ethics, accountability, and the unintended consequences of AI are “top of mind for many Americans.” Businesses that ignore these concerns will face growing resistance from customers and employees alike.

Intellectual Property Concerns

Copyright questions swirl around generative AI, and the legal situation is moving fast. As of late 2025, there are more than 50 active copyright lawsuits against AI developers in US federal courts, according to law firm Debevoise. The plaintiffs include major news organizations, book authors, music publishers, artists, and software companies.

The core concern is that many AI tools were trained on copyrighted material scraped from the internet, sometimes without permission or compensation to the original creators. In a landmark February 2025 decision, a Delaware federal court sided with Thomson Reuters against legal AI startup ROSS Intelligence, ruling that using copyrighted content to train a competing product was not fair use.

For your business, the practical risk is two-sided. First, your company might unknowingly produce AI-generated content that closely resembles someone else’s copyrighted work. Second, if your employees enter proprietary business information or trade secrets into an AI tool, you may be inadvertently handing that data to a third party.

The US Copyright Office clarified in January 2025 that content entirely generated by AI cannot be copyrighted. Only work with meaningful human creative input qualifies. This means labeling and documenting who did what in your AI-assisted content creation process has become both an ethical and a legal priority.

Data Security and Privacy Risks

Generative AI systems collect, store, and use huge amounts of employee and customer data. This creates serious cybersecurity exposure. According to Stanford’s 2025 AI Index Report, AI-related incidents jumped by 56.4% in one year, with 233 reported cases in 2024.

The financial stakes are steep. Research cited by Thunderbit found that organizations with unmonitored AI use, or “shadow AI,” meaning employees using AI tools outside official channels, faced data breach costs averaging $670,000 higher than companies with stricter controls. In the US, half of the workforce reported using AI tools at work without knowing whether it was allowed, according to the 2025 KPMG Trust and AI study.

Here are the key security safeguards every business should have in place:

- A clear, written policy on which AI tools employees are authorized to use.

- A list of data types that are never to be entered into any AI tool, such as payroll data, medical records, and customer account details.

- Regular cybersecurity training that covers AI-specific threats like prompt injection attacks.

- Periodic audits to detect unauthorized AI tool usage within the organization.

Misrepresentation and Misinformation

Generative AI makes it easier and cheaper to produce convincing fake content at scale. AI-generated images, audio, and text, if not labeled, can trick customers and damage trust quickly.

According to a 2025 BBC Research and Development report on AI and disinformation, most people now cannot reliably distinguish between authentic media and AI-generated content. This creates a serious responsibility for businesses. If your company publishes AI-generated content without labeling it as such, you risk misleading customers, even if that was never your intent.
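Labeling is easiest to enforce when it happens automatically at publishing time and does not rely on memory. A minimal sketch of that idea follows, assuming a hypothetical `Draft` record that tracks whether AI was involved and who reviewed the result. The field names and the disclosure wording are placeholders, not a legal or industry standard.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    body: str
    ai_generated: bool
    human_editor: str = ""   # who reviewed or edited it, if anyone


def with_disclosure(draft: Draft) -> str:
    """Return the text to publish, adding a disclosure line when needed."""
    if not draft.ai_generated:
        return draft.body
    note = "Disclosure: drafted with generative AI"
    if draft.human_editor:
        note += f" and reviewed by {draft.human_editor}"
    return f"{draft.body}\n\n[{note}.]"


memo = Draft("Q3 results summary.", ai_generated=True, human_editor="A. Chen")
print(with_disclosure(memo))
```

Recording the human editor alongside the AI flag also leaves a simple trail of who did what on each piece of content.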

The risk extends to internal decisions too. A 2025 industry survey found that 47% of enterprise AI users made at least one major business decision based on hallucinated or inaccurate AI content in 2024. AI tools can sound very confident while being completely wrong. Human review of AI outputs before any significant action is taken is not optional. It’s essential.

Best Practices for Ethical Use of Generative AI in Business

The good news is that using generative AI ethically doesn’t have to be complicated. These practical steps can help any business build a solid foundation for responsible AI use.

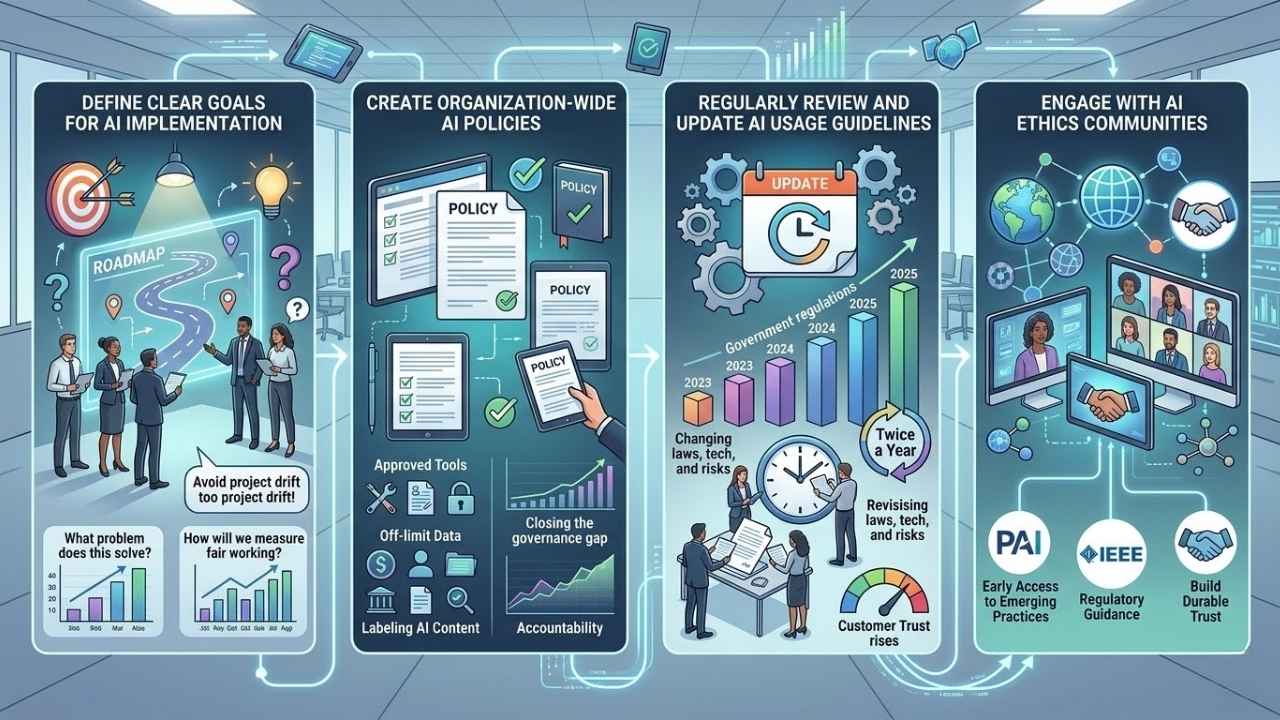

Define Clear Goals for AI Implementation

Clear goals keep AI projects focused and ethical. Before your company adopts any new AI tool, your team should be able to answer two questions clearly: What problem does this solve? And how will we measure whether it’s working fairly?

Without defined goals, AI projects drift. They start by solving the original problem and then gradually expand into areas where no one has thought through the ethical implications. A customer service chatbot approved for answering FAQs shouldn’t start making credit decisions without a fresh ethical review.

Careful goal-setting also protects data privacy. Defining why you are collecting data, and what you will do with it, helps you stay compliant with state privacy laws from California to Colorado. It also makes it much easier to explain your AI practices to customers who ask.

Create Organization-Wide AI Policies

Strong, clear AI policies shape how employees use these tools every day. According to the 2025 KPMG global AI study, half of US workers admitted to using AI tools at work without knowing whether it was officially allowed. That is a significant governance gap, and it’s one that policies can close.

A good AI policy covers:

- Which AI tools are approved for which tasks.

- What types of data are off-limits for AI input, including customer records, financial data, and trade secrets.

- How AI-generated content must be labeled before it’s shared internally or externally.

- Who is accountable when something goes wrong.

- The consequences for misuse.
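A policy like the one outlined above becomes easier to enforce when it also exists in a machine-readable form that internal tools can check. The sketch below shows one possible shape. The tool names, tasks, data categories, and owner are all placeholders invented for illustration, and a real policy would live in a reviewed configuration file, not in code.

```python
# Hypothetical policy; tool names, tasks, and data categories are placeholders.
POLICY = {
    "approved_tools": {
        "chat_assistant": {"drafting_emails", "summarizing_public_docs"},
        "code_helper": {"writing_unit_tests"},
    },
    "blocked_data": {"customer_records", "financial_data", "trade_secrets"},
    "owner": "ai-governance-lead",  # the named accountable person
}


def check_request(tool: str, task: str, data_types: set[str]) -> list[str]:
    """Return a list of policy violations; an empty list means allowed."""
    problems = []
    allowed_tasks = POLICY["approved_tools"].get(tool)
    if allowed_tasks is None:
        problems.append(f"tool '{tool}' is not approved")
    elif task not in allowed_tasks:
        problems.append(f"tool '{tool}' is not approved for '{task}'")
    blocked = sorted(data_types & POLICY["blocked_data"])
    if blocked:
        problems.append("blocked data types: " + ", ".join(blocked))
    return problems


print(check_request("chat_assistant", "drafting_emails", set()))
print(check_request("chat_assistant", "credit_decisions", {"financial_data"}))
```

Returning every violation at once, instead of stopping at the first, gives the employee a complete picture of what needs to change.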

IMD’s 2025 AI Maturity Index, which assessed the top 300 companies in Forbes’ 2025 Global 2000 list, found that firms with publicly disclosed AI ethics principles and dedicated governance roles consistently ranked among the most mature AI adopters in every sector. Policy isn’t paperwork. It’s a competitive advantage.

Regularly Review and Update AI Usage Guidelines

Laws, technology, and risks shift fast. An AI policy written 12 months ago may already be out of date. The US federal government issued 59 AI-related regulations in 2024, more than double the number from 2023, according to a 2025 data privacy statistics report from Thunderbit. State-level laws are moving even faster.

Schedule a formal review of your AI guidelines at least twice a year. When new tools are adopted, new laws take effect, or a high-profile AI incident hits the news, treat it as a signal to review your policies sooner. The companies that stay ahead of compliance changes are the ones that build durable customer trust.

Keeping guidelines current also signals to your employees that leadership takes this seriously. That message matters.

Engage with AI Ethics Communities

You don’t have to figure this out alone. There are active communities, organizations, and resources designed specifically to help businesses use AI responsibly.

Some of the most valuable resources for US businesses include:

- Partnership on AI (PAI): A nonprofit that brings together industry, civil society, and academia to shape responsible AI practices. Members include Amazon, Apple, Google, IBM, Meta, and Microsoft.

- The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems: Produces detailed technical and ethical frameworks that businesses can apply directly.

- AI Ethics Lab: Provides consulting and research support for organizations building ethical AI programs.

- The Artificial Intelligence Ethics Council (AIEC): Co-chaired by OpenAI CEO Sam Altman, this initiative powered by Operation HOPE focuses on ethical AI for underserved communities and provides accessible guidelines for business leaders.

Participating in these groups gives your team early access to emerging best practices and regulatory guidance. It also shows customers and employees that your commitment to responsible AI goes beyond a policy document on a shared drive.

Benefits of Ethical AI Adoption for Businesses

People notice when a business stands up for integrity, transparency, and responsibility. Good AI ethics can set you apart from the crowd and open doors to lasting trust.

Enhanced Trust and Credibility

Companies that handle AI responsibly earn something money can’t directly buy: genuine customer confidence. And the data shows that confidence translates into real revenue.

Deloitte’s 2025 Connected Consumer survey of 3,524 US consumers found that customers who view their tech providers as excelling in both innovation and data responsibility spend 62% more annually on technology compared to customers who view their providers as lagging on both. That is not a small difference.

On the other side of the coin, 82% of surveyed US consumers said generative AI could be misused, up from 74% in 2024. Public concern is rising. Companies that address those concerns proactively, through clear labeling, honest disclosure, and strong data practices, will build the kind of credibility that competitors can’t easily copy.

Improved Decision-Making Processes

Ethical use of AI helps leaders make better choices. Transparent AI systems are easier to audit, which means mistakes and biases get caught earlier. Teams can spot problems faster when they know what tools are being used and how those tools reach their conclusions.

The “black box” problem, where complex AI models make decisions no one can explain, is one of the biggest sources of distrust inside organizations. According to a 2025 report from ThoughtSpot, 47% of enterprise AI users in 2024 made at least one major decision based on hallucinated AI content. Clear policies, human review processes, and explainable AI tools directly reduce that risk.

When leaders focus on fairness in their AI systems, the resulting decisions are more accurate and far less likely to trigger discrimination complaints or compliance violations. That’s a real operational benefit, not just a feel-good outcome.

Long-Term Sustainability

Long-term sustainability in AI starts with strong governance, transparent practices, and a genuine commitment to responsible innovation. Companies that build these foundations are not just avoiding problems. They are building something competitors will struggle to match.

IMD’s 2025 AI Maturity Index found that firms with embedded ethical oversight are consistently the top performers in their industries. General Motors took a concrete step in May 2025 by appointing a dedicated legal and ethical AI oversight lead, one of the first such roles in the automotive industry. That kind of institutional commitment is becoming a real competitive signal.

The regulatory environment is also pushing in this direction. As of 2026, 20 US states enforce consumer privacy statutes, and AI-specific legislation is expanding. Companies that have already built ethical governance frameworks will find compliance much less painful and expensive than those scrambling to catch up.

Final Thoughts

The ethics of generative AI isn’t just a compliance checkbox. It’s a business strategy that builds real trust with real people. Simple, consistent actions make a big difference: label your AI-generated content, audit for bias, protect private data, and keep your guidelines current. These steps build accountability, reduce risk, and show customers that your company takes integrity seriously.

Plenty of resources on AI governance are only a click away, from the Partnership on AI to IBM’s AI Fairness 360 toolkit. The tools and communities are there. Each step you take toward ethical AI practice makes your business one that people can count on.