Do you ever wake up, check your phone, and realize your life has already organized itself? Your calendar rearranges itself, your email filters the noise, and your home sets the perfect temperature. All of this happens before your morning coffee. These invisible ecosystems quietly run your day, and most of us have handed over the keys without a second thought. We are shifting from using simple tools to living inside fully automated environments. The silent framework of AI ecosystems is a concept you need to understand right now.

This article will walk you through exactly how these hidden networks capture information and make choices for you. I will show you the real trade-offs between convenience and control. Let’s explore what is happening behind the scenes together so you can reclaim your awareness.

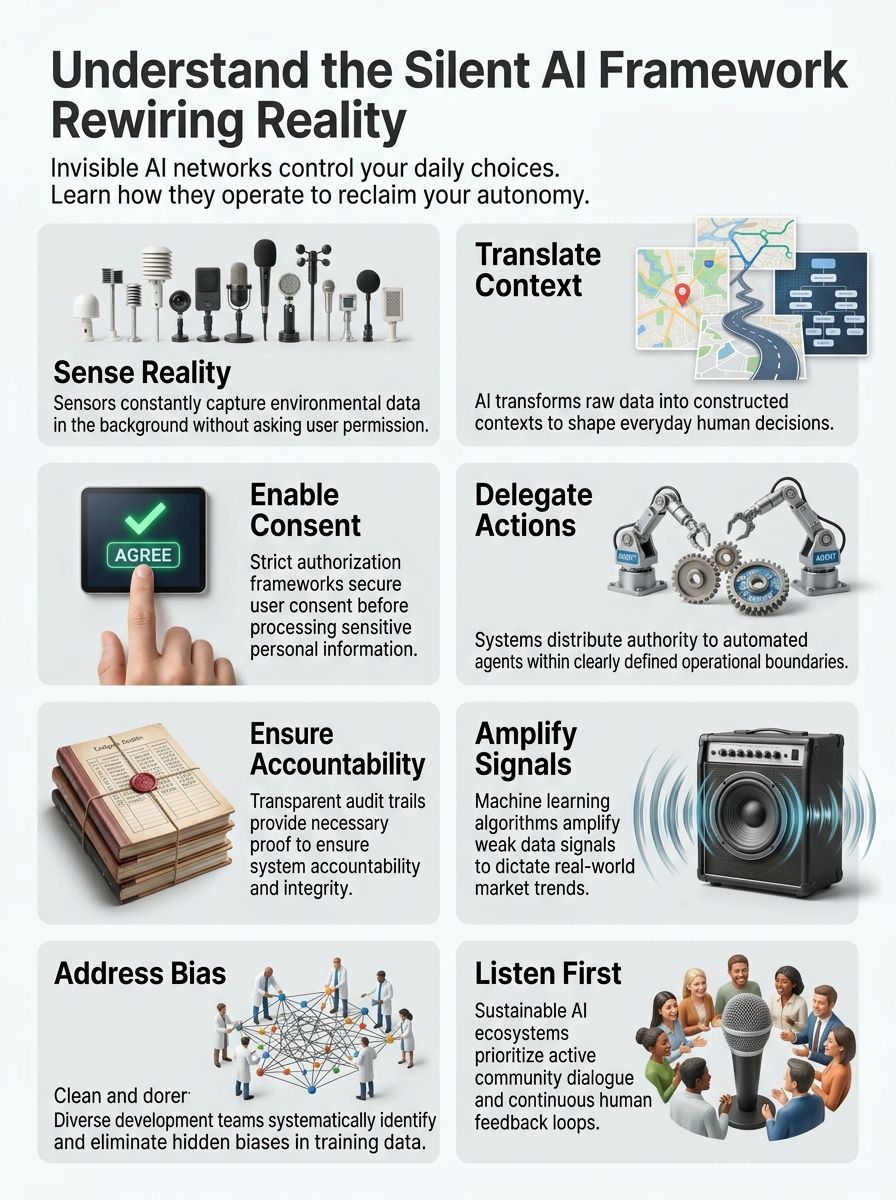

Understanding the Silent Framework of AI Ecosystems

AI systems work quietly behind the scenes. They shape what we see and how we interpret it, usually without our noticing.

These intelligent networks operate through connected layers that capture information, translate it into meaning, get permission, take action, and prove what they did. All of this happens faster than we can blink.

In 2026, data shows that over 51% of all US households, roughly 77 million homes, actively use smart devices to run their routines silently.

Definition of Silent Systems

Silent systems run in the background without drawing attention to themselves, yet they shape how we interact with technology every single day.

They make decisions and process information without demanding our focus. Think of them as an invisible hand guiding traffic flow. Nobody sees it happening, yet everything moves smoothly.

Here are a few ways silent systems operate today:

- They collect data passively, so you never have to fill out a form.

- They adjust your smart home temperature automatically, with no prompt on your screen.

- They filter out spam emails before you even see them.

Most people never notice them, and that is where their power lies: they function without interruption or fanfare.

These networks process massive amounts of information every second, and they have fundamentally changed how businesses operate and how people connect.

The role of non-digital and non-verbal systems

AI systems listen best when we speak in the languages they don’t yet understand.

Non-digital and non-verbal signals form the backbone of how AI ecosystems actually work in real life. They carry meaning through gestures, facial expressions, tone of voice, and physical actions that no keyboard or screen can fully record.

We often think machines only read text, but emotion AI is changing that rapidly. In 2026, affective computing tools from companies like Hume and Imentiv AI can evaluate facial expressions to detect feelings with remarkable accuracy.

AI frameworks translate these silent signals into structured data that machines can process. A person’s hesitation, their body language, or their silence itself carries information that matters.

These non-digital signals create the foundation for how AI systems understand permission, consent, and intent. The framework that powers modern technology must listen to what people choose not to say out loud.

Organizations that build systems with this understanding create stronger connections with the people they serve. They recognize that the most important messages often travel silently through the spaces between words.

How AI Ecosystems Are Rewiring Reality

Intelligent networks reshape how we see our surroundings by making invisible patterns visible. They amplify signals that matter to expand what we can do, who gets included, and where opportunity flows next.

Representation of the invisible

Most automated ecosystems operate like silent partners in our lives. Data flows through invisible networks, translating raw information into signals that guide everything from traffic patterns to loan approvals.

These systems capture reality through sensors, cameras, and digital footprints. We live within these networks now, not just beside them. What was once hidden becomes the new normal.

A great example is how California cities manage traffic. In 2026, cities like Los Angeles and San Francisco deployed AI-powered automated speed cameras in high-risk areas. These cameras make hidden speeding patterns visible, reducing crashes and changing how drivers behave.

Data monopolies control the narrative, deciding which signals matter and which get amplified. We perceive progress through faster services and smoother experiences, but the cost sits in our reduced cognitive independence.

Amplification of structured signals

Machine learning algorithms grab weak signals from massive data streams and turn them into powerful forces. Think of it like a radio tuner finding one clear station among thousands of fuzzy channels.

Neural networks learn which signals predict real outcomes. Pattern recognition tools spot connections humans miss.

This amplification process unfolds in three steps:

- Algorithms identify a user’s preference.

- The platform feeds more of that specific content to the user.

- The user’s engagement increases, creating a powerful feedback loop.

An algorithm on a platform like TikTok or YouTube notices that you watch a specific type of video for three extra seconds. It amplifies that signal, feeds you more of that content, and rewires your digital experience.
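The loop described above can be sketched in a few lines of Python. This is a toy model, not how any real platform ranks content: the function name `simulate_feedback_loop`, the 10% `boost`, and the four topics are all assumptions chosen for illustration.

```python
def simulate_feedback_loop(weights, watched_topic, rounds, boost=0.1):
    """Return topic shares after repeatedly boosting the watched topic."""
    weights = dict(weights)  # copy so the caller's data is untouched
    for _ in range(rounds):
        # Steps 1 and 2: the platform notices engagement with one topic
        # and raises that topic's weight in the feed (hypothetical rule).
        weights[watched_topic] *= 1 + boost
        # Step 3: renormalize so the shares still add up to 1.
        total = sum(weights.values())
        weights = {topic: w / total for topic, w in weights.items()}
    return weights


start = {"cooking": 0.25, "news": 0.25, "sports": 0.25, "music": 0.25}
after = simulate_feedback_loop(start, "cooking", rounds=20)
print(after)
```

Even with a small boost per round, the watched topic grows from a quarter of the feed to well over half after 20 rounds, while every other topic shrinks.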

Systems tend to amplify what they measure most carefully. Companies build products around these amplified signals, and governments craft policies based on them. The results are constructed realities that demand careful governance.

Expansion of market boundaries through inclusion

Inclusion transforms how markets grow and reach new people. Companies that open their doors to diverse customers find fresh opportunities everywhere.

Strategic partnerships connect businesses with communities they have never served before. Digital transformation makes products and services available to folks who faced barriers in the past.

| Approach | Market Inclusion Method | Resulting Impact |
|---|---|---|
| Traditional | Relies on a limited financial history | Excludes underbanked individuals |
| AI Ecosystem | Analyzes thousands of alternative data points | Expands credit access to new communities |

Market integration happens when technology meets real human needs across different populations. Companies that embrace this approach tap into fresh talent, ideas, and buying power.

Inclusivity drives real change because it recognizes that the best innovations come from listening to voices that were quiet before.

Layers of the Silent Systems Stack

These programs work through five connected layers that quietly shape how machines understand and act on information. Each layer builds on the last.
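As a rough illustration, the five layers can be sketched as one small pipeline. The thermostat scenario, the `run_pipeline` name, and the 25-degree limit are assumptions made up for this example, not part of any real product.

```python
def run_pipeline(reading, consented, limit=25.0):
    """Walk one sensor reading through the five layers."""
    proof = []  # Proof: every step is recorded here

    # Sensing: capture the raw reading (degrees Celsius, assumed).
    proof.append(("sensed", reading))

    # Translation: turn the raw number into context.
    context = "too_warm" if reading > limit else "ok"
    proof.append(("translated", context))

    # Permission: stop here unless the user has consented.
    if not consented:
        proof.append(("blocked", "no consent"))
        return None, proof

    # Delegation: take a bounded action based on the context.
    action = "cool_down" if context == "too_warm" else "no_action"
    proof.append(("acted", action))
    return action, proof


action, proof = run_pipeline(27.5, consented=True)
print(action, proof)
```

The point of the sketch is the ordering: nothing acts before permission is checked, and the proof record exists whether or not an action happens.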

Sensing: Capturing reality

Sensing forms the foundation of how these ecosystems grab hold of reality. Your phone camera captures light, your smart speaker listens for sound, and your fitness tracker measures your heartbeat.

In the US, millions of wearable devices continuously monitor physiological signals, creating a constant stream of background data.

These devices collect raw information from the environment around you, moment by moment. Sensors work quietly in the background, gathering data without asking permission.

They pick up signals from your environment, from the air you breathe to the steps you take. This layer operates like an invisible net, catching pieces of reality that machines can understand.

Translation: Constructing context

Translation happens right after sensing captures those signals. Raw data means nothing until something makes sense of it. A microphone records sound waves, but the system must translate those waves into words you recognize.

Your location data becomes a traffic pattern. Your search history becomes a product recommendation. This translation layer sits between the raw information and the action taken, and it matters immensely.

The ecosystem constructs the story that those facts tell. When systems translate information, they make choices about what matters and what fades away. The convenience of having machines make sense of chaos comes with a hidden cost to your personal agency.

Permission: Enabling consent

Once systems translate raw data into meaningful context, they face a critical fork in the road. The next step demands that they ask for permission before taking action.

Permission is where consent becomes real, not just a checkbox buried in legal documents. Users need clear information about what will happen next.

This is a huge focus for lawmakers. For example, the 2026 updates to the California Consumer Privacy Act (CCPA) require specific actions:

- Businesses using automated decision-making must provide clear pre-use notices.

- Users must have access to easy opt-out rights.

- Data brokers must process deletion requests from a central portal, a requirement that comes from the related California Delete Act.

Compliance with these standards is not optional. It is the foundation that keeps ecosystems from becoming surveillance machines.
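In code, the first two requirements amount to a gate that runs before any automated decision. The sketch below is a simplified illustration only, not legal guidance: the `ConsentRegistry` class and its method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentRegistry:
    """Tracks who received a pre-use notice and who opted out (hypothetical)."""
    notified: set = field(default_factory=set)
    opted_out: set = field(default_factory=set)

    def send_notice(self, user_id):
        # Record that the user saw a notice before any automated decision.
        self.notified.add(user_id)

    def opt_out(self, user_id):
        # Opting out must always be possible, even after a notice.
        self.opted_out.add(user_id)

    def may_automate(self, user_id):
        # Automation is allowed only with a notice and no opt-out on file.
        return user_id in self.notified and user_id not in self.opted_out


registry = ConsentRegistry()
registry.send_notice("user-1")
print(registry.may_automate("user-1"))  # notice sent, no opt-out
registry.opt_out("user-1")
print(registry.may_automate("user-1"))  # opt-out wins
```

The default matters here: a user the system has never notified is treated as not having given permission.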

Delegation: Facilitating bounded action

These frameworks work best when they hand off tasks to the right agents. Delegation means giving specific authority to different parts of the network, but with clear limits. Think of it like a manager who trusts team members to make decisions, yet sets boundaries on what they can do.

To enforce those limits, developers use bounded action rules:

- Define strict limits on what the autonomous agent can modify.

- Require human approval for high-risk decisions.

- Maintain a clear audit log of every automated choice.

This approach keeps things organized and prevents chaos. Bounded action protects both the network and the people it serves. Management becomes simpler because you set the frame and let trusted agents operate inside it.
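The three rules above can be sketched as a small guard around an agent's changes. This is a minimal example under stated assumptions: the `BoundedAgent` class, the field names, and the smart-home settings are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class BoundedAgent:
    allowed_fields: set        # rule 1: what the agent may modify
    high_risk_fields: set      # rule 2: changes that need a human
    audit_log: list = field(default_factory=list)  # rule 3: full record

    def request_change(self, settings, name, value, approved_by=None):
        if name not in self.allowed_fields:
            outcome = "denied: outside limits"
        elif name in self.high_risk_fields and approved_by is None:
            outcome = "pending: needs human approval"
        else:
            settings[name] = value
            outcome = "applied"
        # Log every request, including the ones that were refused.
        self.audit_log.append(
            {"field": name, "value": value,
             "approved_by": approved_by, "outcome": outcome}
        )
        return outcome


agent = BoundedAgent(allowed_fields={"thermostat", "door_lock"},
                     high_risk_fields={"door_lock"})
home = {"thermostat": 20, "door_lock": "locked"}
print(agent.request_change(home, "thermostat", 22))
print(agent.request_change(home, "door_lock", "unlocked"))
print(agent.request_change(home, "door_lock", "unlocked", approved_by="alice"))
```

Refused and pending requests are logged alongside applied ones, so the audit log shows what the agent tried to do as well as what it did.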

Proof: Ensuring accountability

Once we delegate power to these systems, we need proof that they work correctly. Accountability matters because silent systems operate behind the scenes. Oversight keeps these structures honest, and transparency shows how decisions get made.

Audit trails track what happened, step by step. Integrity demands that platforms tell the truth about their actions. Monitoring catches problems before they spiral out of control. Trust builds when people can see proof that systems do what they claim and that mistakes get caught and fixed fast.
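One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that editing an old entry breaks every entry after it. The sketch below uses Python's standard `hashlib` and `json` modules. The function names `append_entry` and `verify` and the sample events are assumptions for illustration, and a production system would also need secure storage and access control.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(event, prev_hash):
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append_entry(log, event):
    """Add an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash,
             "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify(log):
    """Recompute the chain; return False if any entry was altered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, "thermostat set to 22")
append_entry(trail, "door unlock approved by alice")
print(verify(trail))
```

If someone later rewrites the first event, `verify` returns `False`, because the stored hash no longer matches the recomputed one.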

Challenges in AI Ecosystem Governance

These tools make mistakes silently, and we often miss them until damage spreads wide. Biases hide inside algorithms, shaping outcomes in ways nobody planned or saw coming.

Addressing systemic biases

Systemic biases live inside algorithms like hidden termites in a house. They eat away at fairness without anyone seeing the damage at first. These biases come from the data we feed machines and the choices we make when building them.

A poorly trained AI model will simply learn and repeat the human biases present in its training data, scaling discrimination instantly.

Algorithmic fairness sounds simple, but it is quite hard to achieve. We must look at who gets left out of training data and whose voices matter in decisions. Discrimination sneaks in through the back door when we stop paying attention.

Inclusive design starts before we write a single line of code. We need diverse teams building these products, diverse data feeding them, and diverse voices testing them. Bias mitigation requires constant work, like tending a garden that never stops needing care.
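One simple starting check is to compare how often each group receives a favorable decision. The sketch below computes per-group selection rates and their ratio. The group labels and decisions are made-up sample data, and this single metric is only one of many fairness measures, not a complete audit.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Hypothetical sample: group "a" is approved 8 of 10 times, group "b" 4 of 10.
sample = ([("a", True)] * 8 + [("a", False)] * 2
          + [("b", True)] * 4 + [("b", False)] * 6)
rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A widely used rule of thumb treats a ratio below 0.8 as a signal worth investigating. A ratio like this one flags a gap but does not explain its cause.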

Managing silent failures and unintended outcomes

These frameworks fail quietly all the time, and nobody notices until something breaks. A recommendation algorithm can steer users toward harmful content without triggering any alarms.

A clear example of unintended outcomes involves autonomous vehicles. In recent years, self-driving cars in US cities have occasionally caused unexpected traffic jams because the algorithms got confused by unique road work setups.

These silent failures slip past oversight because they operate behind closed doors, away from human eyes. Organizations must build detection methods that catch these problems before they spread.
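A basic detection method compares a recent window of some health metric against a known-good baseline and raises a flag when the two drift apart. The sketch below is deliberately simple: the `is_anomalous` name, the threshold of 3, and the complaint-rate numbers are assumptions, and real monitoring would account for seasonality and sample size.

```python
from statistics import mean, stdev


def is_anomalous(baseline, recent, z_threshold=3.0):
    """Flag `recent` when its mean drifts far from the baseline mean.

    Drift is measured in baseline standard deviations (a rough z-score).
    `baseline` needs at least two values.
    """
    spread = stdev(baseline)
    if spread == 0:
        return mean(recent) != mean(baseline)
    z = abs(mean(recent) - mean(baseline)) / spread
    return z > z_threshold


# Hypothetical daily share of recommendations that users reported.
baseline = [0.02, 0.03, 0.025, 0.028, 0.022]
print(is_anomalous(baseline, [0.09, 0.10, 0.11]))  # sharp rise
print(is_anomalous(baseline, [0.024, 0.026]))      # normal variation
```

The check only works if someone chooses the metric in advance, which is why silent failures so often go unmeasured.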

Stakeholders need clear visibility into how conclusions are reached, so bias and errors surface early rather than late. Risk management must account for unintended outcomes that nobody predicted. Accountability structures clarify who takes responsibility when things go sideways.

The Future of AI Ecosystems

These networks will create new jobs and roles we haven’t imagined yet. Self-healing architectures will catch problems before they spiral, making our digital spaces more stable and trustworthy.

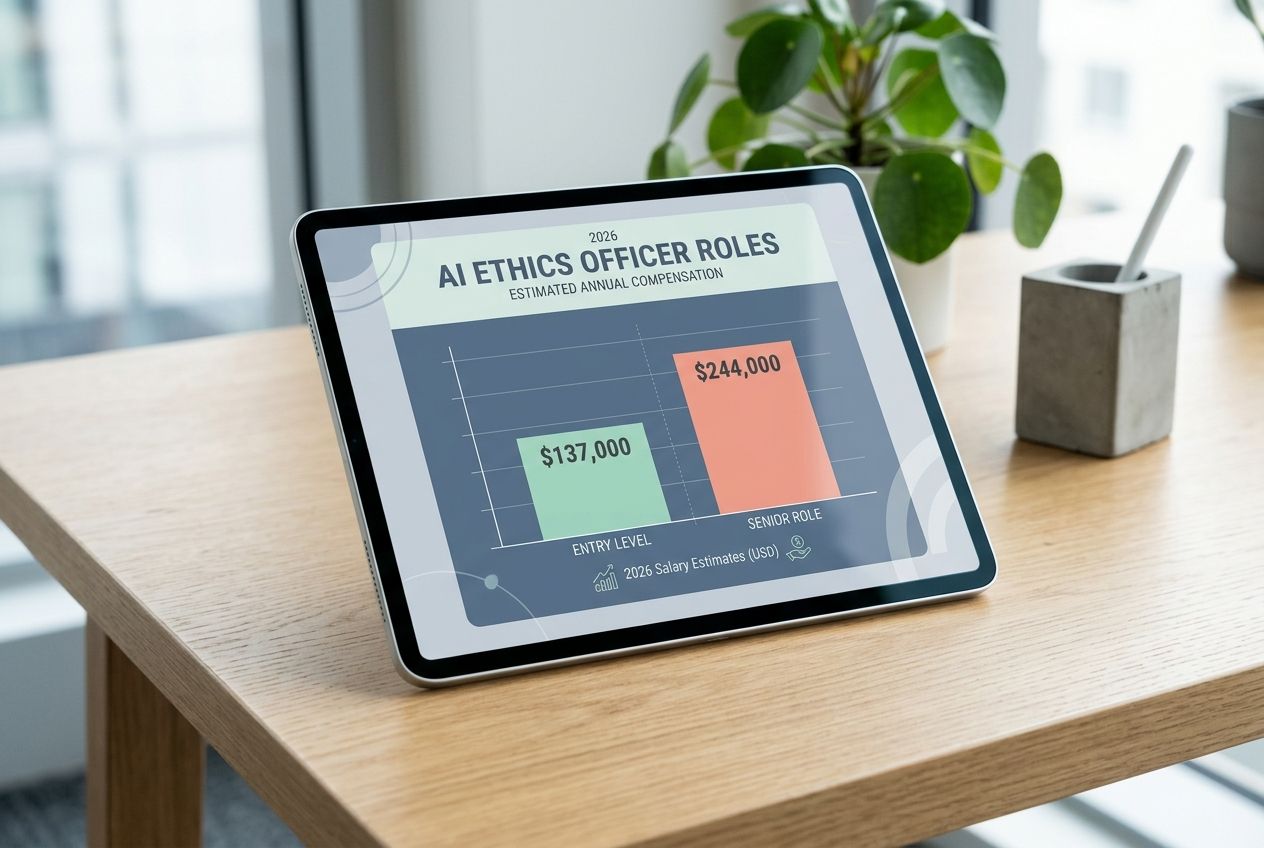

Emerging roles in AI governance

Governance roles are shifting fast as automation reshapes how we live, work, and make decisions. New positions emerge to balance efficiency with human freedom, accountability with innovation, and data control with public benefit.

The demand is massive. In 2026, the average salary for an AI Ethics Officer in the US falls between $137,000 and $244,000, reflecting how critical this oversight has become.

- Data stewards protect information flows across urban systems and economic platforms, preventing monopolies from controlling critical resources.

- Autonomy advocates work inside organizations to safeguard personal rights, ensuring people retain cognitive independence.

- Transparency officers translate complex operations into language that ordinary citizens understand, making hidden algorithms visible.

- Ethics reviewers examine outputs before deployment, catching systemic biases that would otherwise amplify existing inequalities.

- Policy architects design frameworks that empower human potential instead of replacing human judgment.

- Accountability managers establish proof structures that trace decisions back to responsible parties.

- Rights defenders push back against surveillance creep, fighting to preserve digital freedoms.

- Resilience engineers build self-healing mechanisms into infrastructure, designing programs that catch their own mistakes.

- Consent facilitators develop permission structures that actually work, moving beyond checkbox agreements.

- Sustainability monitors track long-term impacts of deployment, measuring whether efficiency gains come at the cost of environmental damage.

These emerging roles reshape how organizations approach governance, moving beyond simple compliance toward building systems that respect human dignity.

Building resilience and self-healing systems

Architectures that heal themselves stand as the backbone of tomorrow’s digital space. These platforms catch problems before they spiral into chaos, adapting their behavior in real time to shifting conditions.

Scalability matters here. As demands grow, these networks expand without breaking down. Intelligence flows through every layer, allowing the platform to learn from mistakes and adjust course automatically.

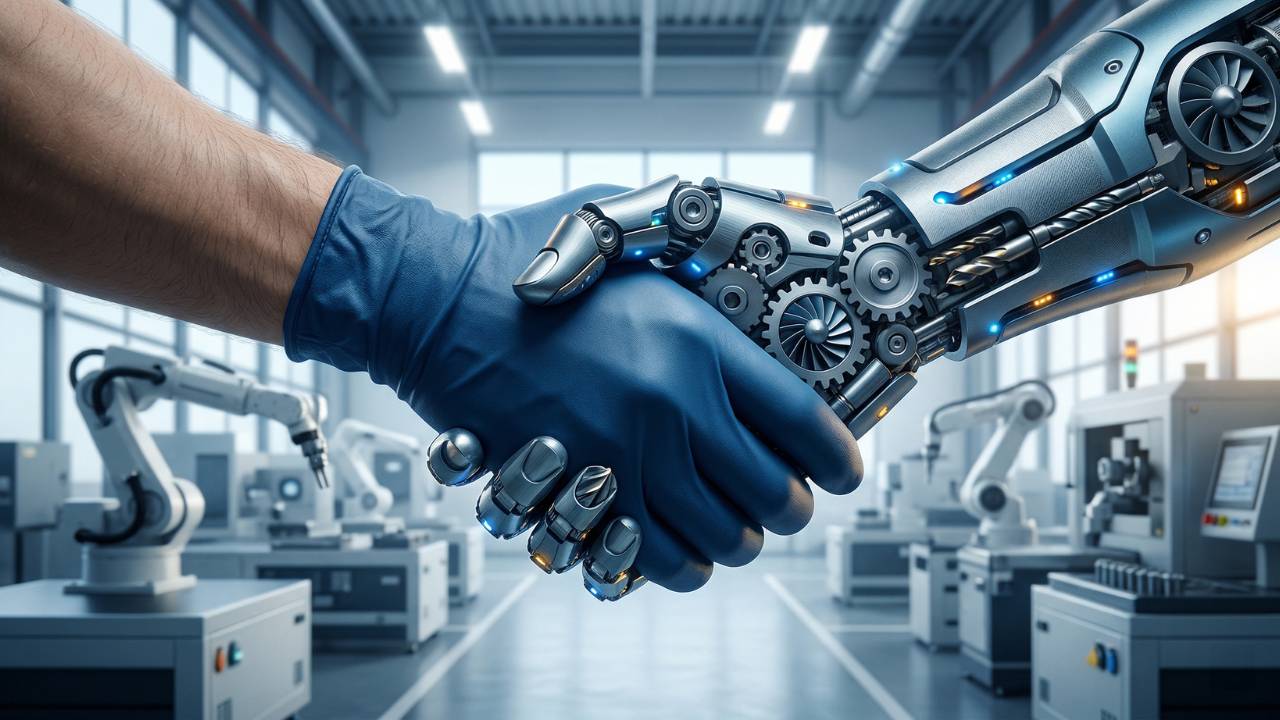

Interconnectivity ensures that when one part struggles, others step in to help. It is much like a team covering for an injured player. Automation handles routine fixes, freeing human experts to focus on deeper problems that need real thinking. These self-healing networks represent a shift toward living, breathing systems that grow stronger through adversity.
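The "others step in" idea is often implemented as failover: try the primary component, and if it fails, route the request to a backup. The sketch below shows the pattern in miniature. The `call_with_failover` name and the two stand-in handlers are hypothetical, and real systems add health checks, timeouts, and retries with backoff.

```python
def call_with_failover(replicas, request):
    """Try each (name, handler) in order; return the first success."""
    errors = []
    for name, handler in replicas:
        try:
            return name, handler(request)
        except Exception as exc:
            # Record the failure and let the next replica step in.
            errors.append((name, repr(exc)))
    raise RuntimeError(f"all replicas failed: {errors}")


def broken_primary(request):
    raise ConnectionError("primary is down")


def healthy_backup(request):
    return request.upper()


served_by, result = call_with_failover(
    [("primary", broken_primary), ("backup", healthy_backup)], "ping"
)
print(served_by, result)
```

The routine fix happens automatically, and the collected errors remain available for a human to investigate the deeper cause.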

Finally: Building a Future That Listens

Organizations must shift their focus toward genuine dialogue with communities. Listening becomes the foundation for everything else you build. Empowerment flows from real engagement, not top-down decisions made in isolated boardrooms. Collaboration thrives when people feel heard and valued.

Innovation accelerates when feedback shapes the direction of change. Inclusivity demands that we create space for voices that often get left out of the conversation.

Communication channels must stay open, honest, and accessible to everyone involved. The path forward requires building tools that respond to what communities actually need.

Feedback loops transform rigid structures into living, breathing networks. Dialogue creates trust between technologists, policymakers, and the people affected by these platforms. Community input prevents silent failures before they spiral into larger problems. Engagement is the heartbeat of sustainable progress.

Our responsibility extends beyond building smarter machines. We must build smarter relationships with the people those machines serve, ensuring the future listens first.