The accepted mainstream narrative of Everyday AI Dependence 2026 is that artificial intelligence has finally democratised convenience, acting as a benevolent friction-remover in our smartphones and classrooms. This is a comforting, heavily engineered illusion. What the general public is missing is that we are not simply adopting a new suite of digital tools; we are participating in the largest unconsented cognitive outsourcing experiment in human history. By trading daily decision-making for algorithmic efficiency, populations are quietly transferring the architecture of human thought—how we deduce, how we choose, and what we value—to a handful of closed-source tech monopolies.

To truly comprehend the depth of this shift, we must first dismantle the polished consumer narratives and look at the underlying telemetry data.

The Convenience Narrative vs. The Data Reality

The front-stage politics of everyday AI present a utopia of reclaimed time. Tech executives routinely testify before regulatory bodies, pointing to the hours saved when a generative model drafts a parent’s email to a teacher, summarises a dense financial contract, or auto-generates a weekly meal plan based on biometric data. The surface illusion is one of unprecedented empowerment: AI handles the mundane so human beings can focus on the profound.

The back-stage reality, however, is documented in the dark data of intelligence agencies and macroeconomic analysts. Every time a user offloads a minor cognitive task, they feed a machine learning model with highly specific behavioural training data. The system learns the user’s specific risk tolerance, vocabulary limits, and emotional biases. More alarmingly, neuro-behavioural data from early 2026 indicates a rapid atrophy in users’ independent problem-solving capabilities. When people rely on an algorithm to constantly mediate their reality, they are being slowly trained to accept machine-generated consensus as objective truth.

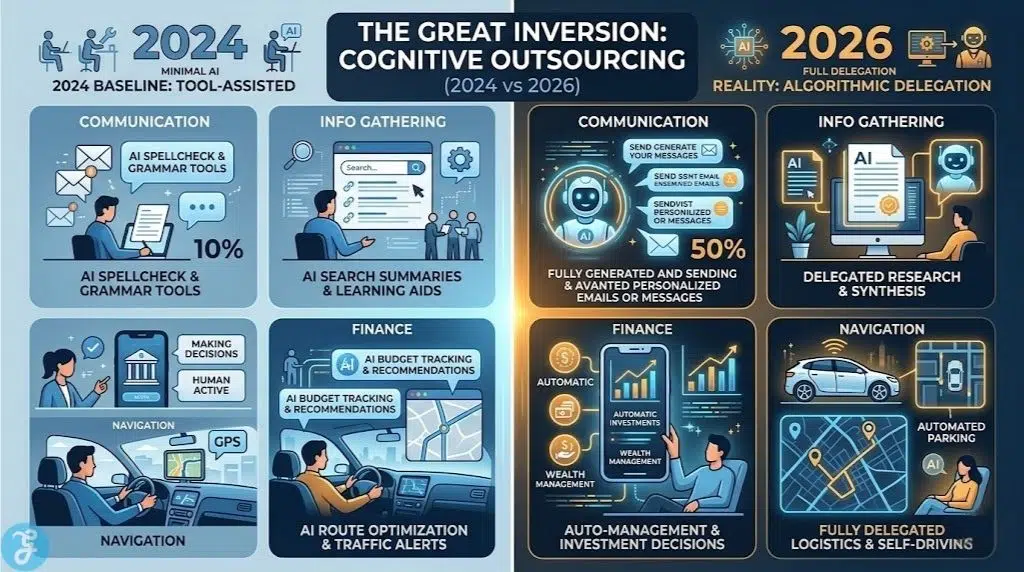

To illustrate how rapidly this cognitive outsourcing has accelerated, consider the shift in daily human reliance over the past two years.

| Cognitive Task Category | The 2024 Baseline (Tool-Assisted) | The 2026 Reality (Algorithmic Delegation) |
| --- | --- | --- |
| Interpersonal Communication | Grammar and spell-check assistance. | Full-context generation of personal and professional emails. |
| Information Gathering | Keyword searching with user-led synthesis. | Accepting zero-click, AI-generated summaries without source verification. |
| Financial Navigation | Algorithmic trading for institutional investors. | Consumer micro-decisions and budget approvals fully automated by personal agents. |
| Spatial Navigation | GPS route suggestions with manual overrides. | Fully predictive routing, dictating physical movement based on sponsored commercial waypoints. |

The reality is not human empowerment; it is cognitive pacification. To understand the true scale of this dependency, we must decode the underlying strategic vectors driving the proliferation of consumer AI across three distinct arenas.

The Hidden Mechanics

The infrastructure of AI dependence is not accidental; it is a highly coordinated effort that intersects with national security, global finance, and international law. We must break down the analysis using these three strategic lenses.

The Security and Intelligence Angle: The Surveillance of Thought Processing

Intelligence agencies are no longer just monitoring intercepted communications; they are actively analysing cognitive dependencies. When a population relies on a centralised AI for daily tasks, that AI becomes a single point of failure and a prime vector for psychological operations. If a foreign adversary or a domestic intelligence apparatus can subtly tweak the semantic weights of a widely used consumer AI, they can quietly shift public sentiment on a mass scale without deploying a single piece of obvious propaganda. This technique is sometimes called “semantic nudging.”

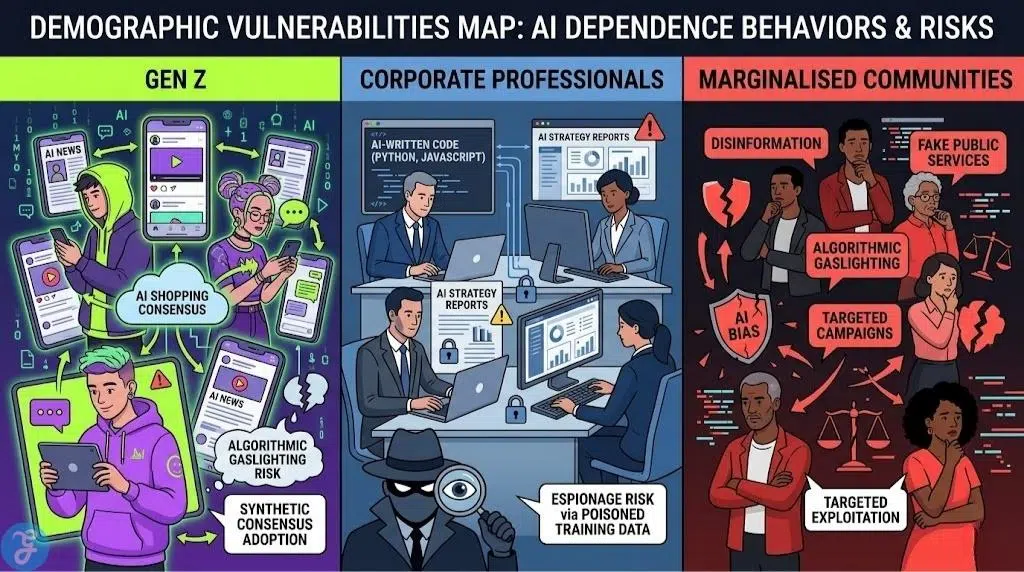

Furthermore, the trust metrics driving this reliance are heavily segmented and quietly studied by intelligence sectors. Recent 2026 data reveals significant fault lines across different populations, creating distinct, categorised vulnerabilities. The intelligence community uses these metrics to model how easily specific demographics could be manipulated through compromised algorithms.

The following table breaks down how different demographic dependencies translate into direct security vulnerabilities.

| Demographic Vector | 2026 Adoption Behaviour | Intelligence Exploitation Vulnerability |
| --- | --- | --- |
| Gen Z (18-29) | Near-total integration; AI acts as the primary mediator for news, social interaction, and purchasing. | Highly susceptible to algorithmic gaslighting; prone to adopting synthetic consensus without independent verification. |
| Corporate Professionals | Heavy reliance on AI for document synthesis, strategy generation, and code deployment. | High risk of industrial espionage via poisoned training data or subtly altered generative outputs. |
| Marginalised Communities | High scepticism due to historical algorithmic bias, leading to fragmented or defensive usage. | Vulnerable to targeted disinformation campaigns exploiting existing distrust in automated public sector services. |

Security vulnerabilities are only one side of the coin; the financial incentives driving this technology are equally aggressive.

The Economic Undercurrent: Monetising Cognitive Surrender

Follow the money, and the endpoint of consumer AI becomes clear: the total monopolisation of human intent. The current economic model of Silicon Valley relies entirely on reducing “friction.” However, in human psychology, friction is the exact space where critical thinking occurs. By eliminating the friction of choosing a brand, drafting a message, or summarising a document, tech giants effectively eliminate the space where consumers might change their minds or discover an un-sponsored alternative.

The actual product being sold in 2026 is not the $20 monthly AI subscription; it is the predictive certainty of the user’s behaviour. Once a user depends entirely on an ecosystem to process their daily life, their cognitive loyalty is locked. They become an asset that can be predictably monetised, their choices steered invisibly toward partner integrations or premium tiers. The advertising industry has fundamentally shifted from convincing humans to bidding for algorithmic preference.

This structural economic shift has violently altered how businesses acquire and retain customers.

| Economic Metric | The Human-Mediated Economy | The Algorithmic-Mediated Economy (2026) |
| --- | --- | --- |
| Point of Conversion | The consumer’s conscious decision after reviewing marketing materials. | The AI assistant’s automated selection based on hidden preference weights. |
| Brand Loyalty | Built through emotional connection, product quality, and direct trust. | Non-existent; replaced by “system inertia” where users simply accept the default AI choice. |
| Advertising Target | Demographics, psychographics, and human emotional triggers. | API integrations, data monopolies, and direct bidding within the LLM architecture. |

While the economic mechanics quietly reshape the market, the geopolitical arena is preparing for open conflict over who controls these systems.

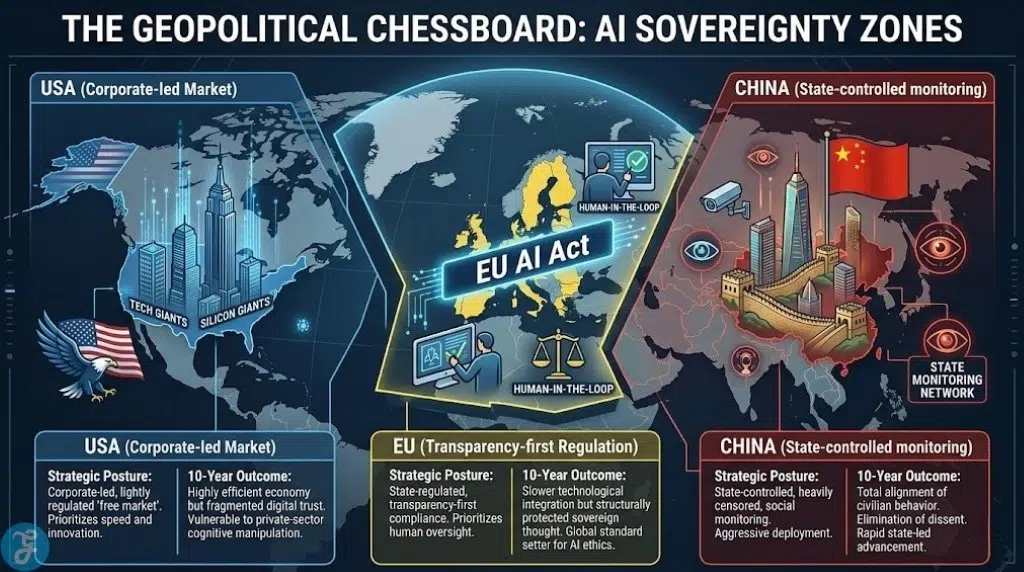

The Geopolitical Chessboard: The EU AI Act and Sovereign Thought

While American tech firms aggressively push for unregulated, global ubiquity, other global powers are manoeuvring defensively. The European Union has recognised that cognitive independence is a matter of profound collective security. As the EU AI Act’s obligations phase in through 2026, we are witnessing a hard fracturing of the global internet based on algorithmic governance.

The Act’s strict classification of high-risk systems—particularly those influencing education, employment, and democratic processes—is forcing platforms to build a heavily regulated, transparent internet for Europe. Meanwhile, the rest of the world remains an unregulated testing ground. This creates a fascinating geopolitical chess match over the next decade.

This legislative divide is creating distinct zones of cognitive sovereignty across the globe.

| Global Player | Strategic Posture on Consumer AI | Projected 10-Year Geopolitical Outcome |
| --- | --- | --- |
| United States | Corporate-led, lightly regulated “free market” expansion. Prioritises speed and innovation. | Highly efficient economy, but a population highly vulnerable to private-sector cognitive manipulation. |
| European Union | State-regulated, transparency-first compliance (The EU AI Act). Prioritises human oversight. | Slower technological integration, but structurally protected sovereign thought and high institutional trust. |
| China | State-controlled, heavily censored, and aggressively deployed for social cohesion and monitoring. | Total alignment of civilian behaviour with state objectives; elimination of algorithmic dissent. |

To fully grasp the magnitude of these hidden mechanics, we must visualise the immediate strategic outcomes.

Data and Strategic Visualisation

To quantify the shift from convenience to strategic vulnerability, we must examine the actual distribution of power and project the risks over the next thirty-six months. The current distribution of power looks vastly different from what the marketing brochures suggest.

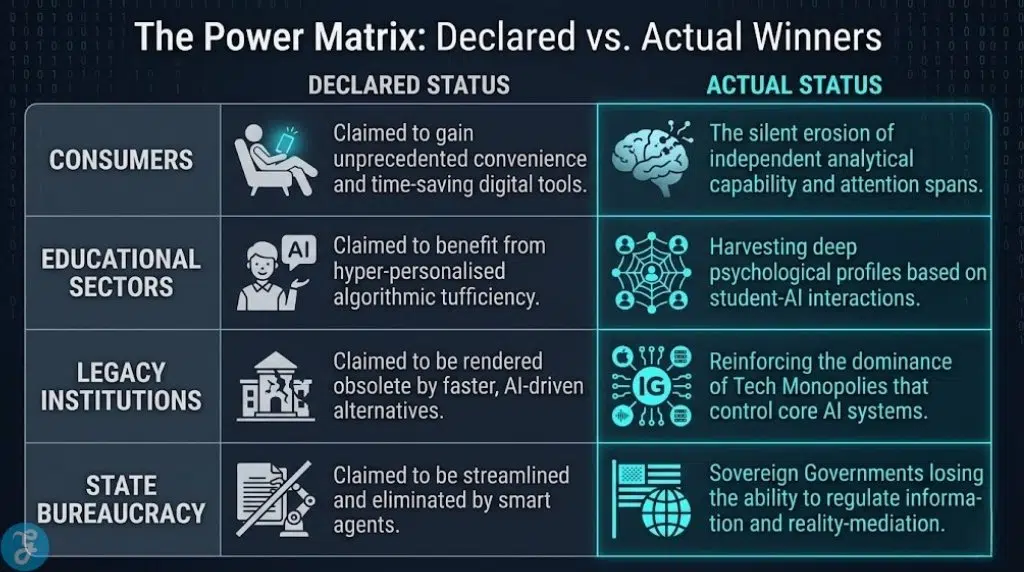

| The Power Matrix | Declared Status | Actual Status |
| --- | --- | --- |
| Winners | Consumers: Claimed to gain unprecedented convenience and time-saving digital tools. | Tech Monopolies: Securing a monopoly on human intent and global decision-making architectures. |
| Losers | Legacy Institutions: Claimed to be rendered obsolete by faster, AI-driven alternatives. | Human Cognitive Resilience: The silent erosion of independent analytical capability and attention spans. |
| Winners | Educational Sectors: Claimed to benefit from hyper-personalised algorithmic tutoring. | Data Brokers: Harvesting deep psychological profiles based on how children interact with learning models. |
| Losers | State Bureaucracy: Claimed to be streamlined and eliminated by smart agents. | Sovereign Governments: Losing the ability to regulate the flow of information and reality-mediation for their citizens. |

Looking beyond the current power dynamics, the forecast for the immediate future reveals escalating friction points between human nature and machine efficiency.

| Risk Forecast (3-Year Projection) | Security Risk | Economic Risk |
| --- | --- | --- |
| Late 2026 | Algorithmic “grey markets” emerge as users bypass geo-blocks to access restricted, high-efficiency personal agents banned under the EU AI Act. | High compliance costs force smaller AI developers to consolidate, cementing a permanent oligopoly of three major tech firms. |
| 2027 | First documented “Cognitive Hack”: a subtle update to a major LLM that successfully alters voting behaviour in a mid-tier democratic election without detection. | “Frictionless” automated consumer purchasing leads to a massive spike in default rates on micro-loans, as AI handles spending without human oversight. |
| 2028 | Widespread “Algorithmic Learned Helplessness” becomes a formally recognised psychological condition in heavily automated populations. | The “Premium Human” economy solidifies: wealthy demographics pay premium rates exclusively for verified human interaction and non-automated services. |

As these projections become reality, the fundamental structure of society will bend under the weight of automation.

Everyday AI Dependence 2026 and The Coming Cognitive Stratification

Over the next twelve to eighteen months, the continued normalisation of everyday AI dependence will inevitably create a new, brutal class divide: cognitive stratification. We will witness a sharp, permanent split between the “Automated Class,” who blindly outsource their daily reasoning, scheduling, and communication to AI, and the “Sovereign Class,” who actively refuse algorithmic mediation to rigorously preserve their intellectual friction.

The geopolitical advantage will shift heavily and decisively toward nations that protect their populations from total cognitive offloading. A citizenry incapable of independent analysis, accustomed to having their reality summarised for them, is fundamentally unable to defend itself against sophisticated disinformation or authoritarian overreach. The immediate danger facing us is not that artificial intelligence will suddenly become sentient, turn malicious, and seize physical control of our infrastructure. The actual danger is far quieter, and far more insidious: it is that the technology will simply become so overwhelmingly convenient that we voluntarily hand over the controls, step by step, email by email, decision by decision.

If your daily choices are curated, drafted, and executed by a black-box algorithm designed explicitly to maximise corporate engagement, are those choices actually yours, or are you simply the biological host for a machine’s objective?