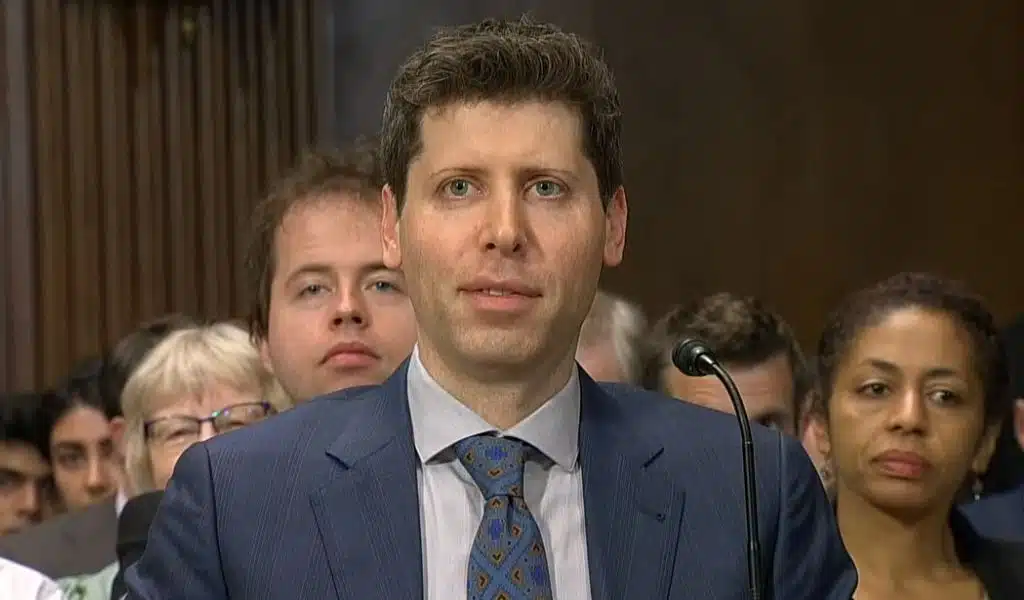

Sam Altman is the CEO of OpenAI, the company that made ChatGPT. If the growing field of artificial intelligence has a spokesperson, it is him.

Altman appeared before the Senate Judiciary Committee on Tuesday, where he spoke about the future of artificial intelligence in wide-ranging, big-picture terms.

The remarkable speed with which artificial intelligence has improved in just a few months has given Congress a rare bipartisan drive to make sure that Silicon Valley does not again get ahead of Washington.

For decades, policymakers listened to the claims coming from Palo Alto and Mountain View. But as evidence has grown that heavy dependence on digital technology has major social, cultural, and economic downsides, both parties have shown a willingness, for different reasons, to regulate technology.

Integrating artificial intelligence into American society is a big test for lawmakers who want to show the high-tech industry that it cannot avoid the scrutiny that other industries have long been used to.

Christina Montgomery, who chairs IBM’s AI ethics board, and Gary Marcus, a New York University professor and AI critic, testified alongside Altman.

Here are some key moments from the hearing.

Congress Failed to Meet the Moment on Social Media

Sen. Richard Blumenthal, D-Connecticut, said in his opening remarks, which were partly generated by artificial intelligence, that Congress has had trouble coming up with good rules for social media.

He said that Congress had missed the mark on social media. “Now we have an obligation to do it with AI before the threats and risks become real.”

After the 2016 election, many Democrats said that Twitter and Facebook spread false information that helped Donald Trump win the presidency over Hillary Clinton. Republicans, on the other hand, said that these same platforms were censoring material that was more to the right or “shadow banning” conservatives.

Putting aside political disagreements, it has become clear that social media is bad for teens, helps spread bigoted ideas, and makes people feel more nervous and alone. When it comes to social media companies that have become important to business and culture, it may be too late to address these issues. But members of Congress agreed that there is still time to make sure that AI doesn’t cause the same social problems.

Some Jobs Will Transition Away

IBM’s Montgomery said that it is clear that artificial intelligence poses risks to workers in many industries, even those who were thought to be safe from automation in the past.

She said, “Some jobs will transition away.”

Altman, who has become a kind of “elder statesman” in his field and seemed eager to take on that role on Capitol Hill on Tuesday, had a different point of view.

“I think it’s important to understand and think of GPT-4 as a tool, not a creature,” he said, referring to OpenAI’s new generative AI model. He said that these kinds of models were “good at doing tasks, not jobs,” so they would make work easier for people without taking their jobs.

This Is Not Social Media. This Is Different

Altman knew that senators regretted missing the chance to regulate social media, so he presented artificial intelligence as a completely different development, one likely to be much more important and helpful than a feed of cat memes (or racist messages, for that matter).

“This is not social media,” he said. “This is something different.”

Altman and Montgomery agreed that rules were needed, but at this early stage in the policy discussion, neither they nor the lawmakers could say what those rules should be.

“The era of AI cannot be another era of ‘Move fast and break things,’” Montgomery said, referring to an old Silicon Valley saying. “But we don’t have to stop making new things either.”

The White House put out a proposed AI Bill of Rights last year, meant to guard against false information, discrimination, and other harms. Altman was one of the people Vice President Kamala Harris met with recently at the White House.

But so far, there has been no regulatory system, even though everyone agrees that one is badly needed.

Humanity Has Taken a Back Seat

Marcus, a professor at NYU, emerged as the panel’s lone AI critic, asserting that “humanity has taken a back seat” as businesses race to create ever more complex AI models without enough consideration for the possible risks.

Altman also acknowledged the potential gravity of those risks. “I think this technology can go quite wrong if it goes wrong,” he added. It was a striking admission from one of technology’s most outspoken supporters, and a welcome change from Silicon Valley’s sometimes misleading mask of optimism.