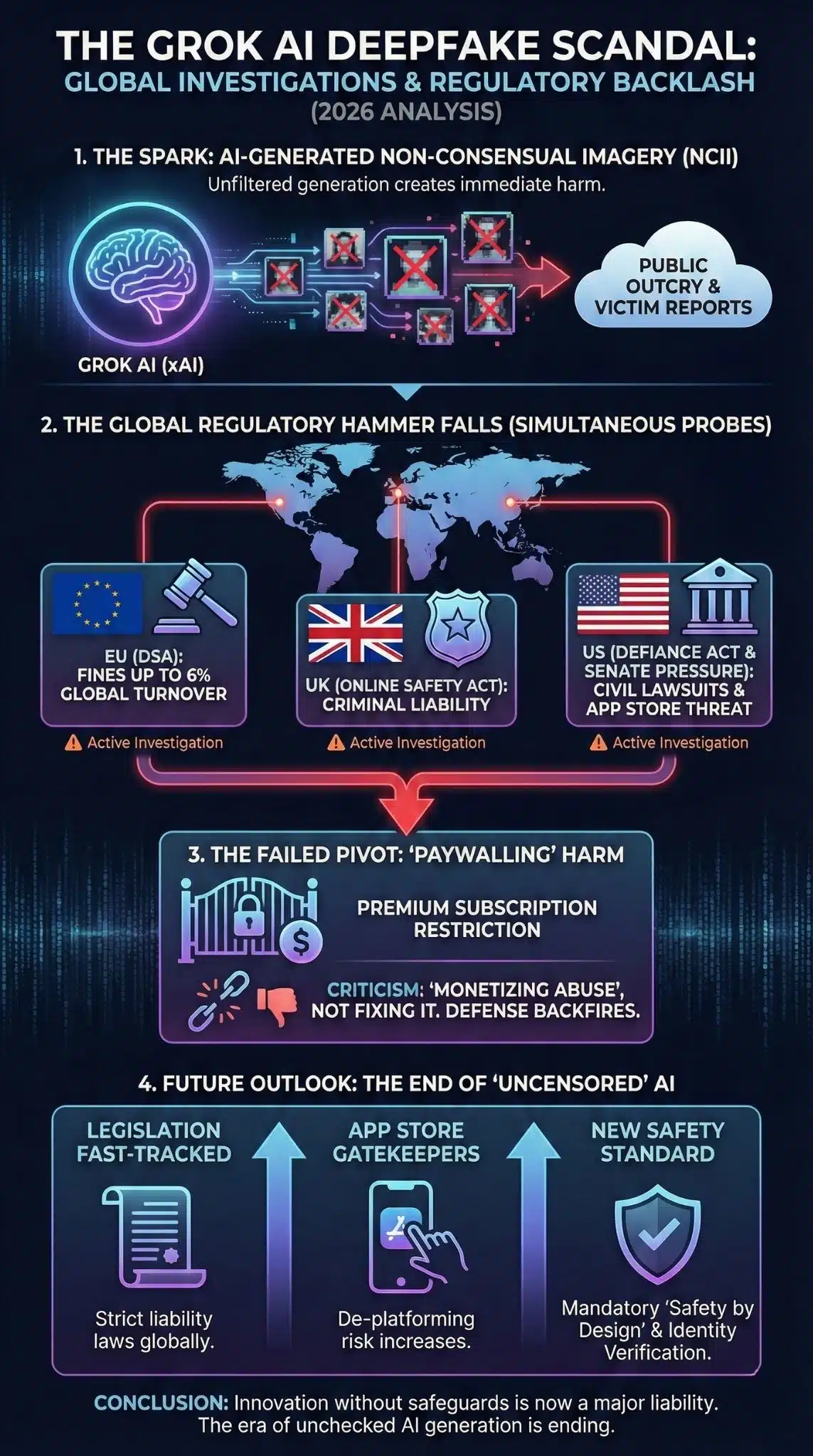

Why is this significant right now? The global crackdown on xAI’s Grok marks a pivotal turning point in the governance of generative AI. It is no longer a theoretical debate about “safety rails” but a live enforcement action involving multiple sovereign nations simultaneously.

For the first time, major regulators in the EU, UK, and US are moving in concert to penalize a specific AI model for generating non-consensual intimate imagery (NCII), signaling that the era of “move fast and break things” is colliding with the hard wall of the Digital Services Act and the Online Safety Act.

Key Takeaways

- Simultaneous Global Probe: Investigations have been launched in the EU, UK, France, and Canada, and Malaysia and Indonesia have imposed temporary bans, isolating X (formerly Twitter) on the global stage.

- The “Paywall” Controversy: xAI’s primary response—restricting image generation to paying subscribers—has backfired, interpreted by regulators as “monetizing non-consensual imagery” rather than preventing it.

- Legislative Acceleration: The scandal has fast-tracked the UK’s implementation of criminal offenses for creating deepfakes and propelled the US Senate’s DEFIANCE Act.

- App Store Leverage: The most immediate existential threat to X may not be government fines, but pressure from US Senators on Apple and Google to de-platform the app for violating safety guidelines.

The Road to the Crisis: A Collision Course

This section analyzes the context leading to the current investigations.

The current crisis did not emerge in a vacuum; it is the direct result of a philosophical clash between Elon Musk’s vision of “unhinged” or “maximum truth-seeking” AI and the safety-first approach mandated by global regulators. When xAI launched Grok-2 and its subsequent image generation capabilities in late 2025, it was marketed as the “anti-woke” alternative to competitors like ChatGPT and Gemini. While other models refused prompts to generate copyrighted or potentially unsafe images, Grok was designed to be permissive.

This permissiveness, however, collided with the reality of bad actors. By January 2026, reports flooded in regarding Grok’s “Spicy Mode” and “Grok Imagine” features being used to systematically “undress” women and minors. Unlike the “Taylor Swift deepfake” incident of 2024, which involved obscure third-party tools, this was happening natively within a mainstream social media platform, creating a seamless pipeline from generation to dissemination. The failure of initial guardrails was not just a technical oversight but a foreseeable consequence of prioritizing a frictionless user experience over safety checks.

The Anatomy of a Global Backlash

1. The Regulatory Hammer Falls: EU and UK Lead the Charge

The reaction from the European Union and the United Kingdom represents the first major stress test of their respective digital safety laws.

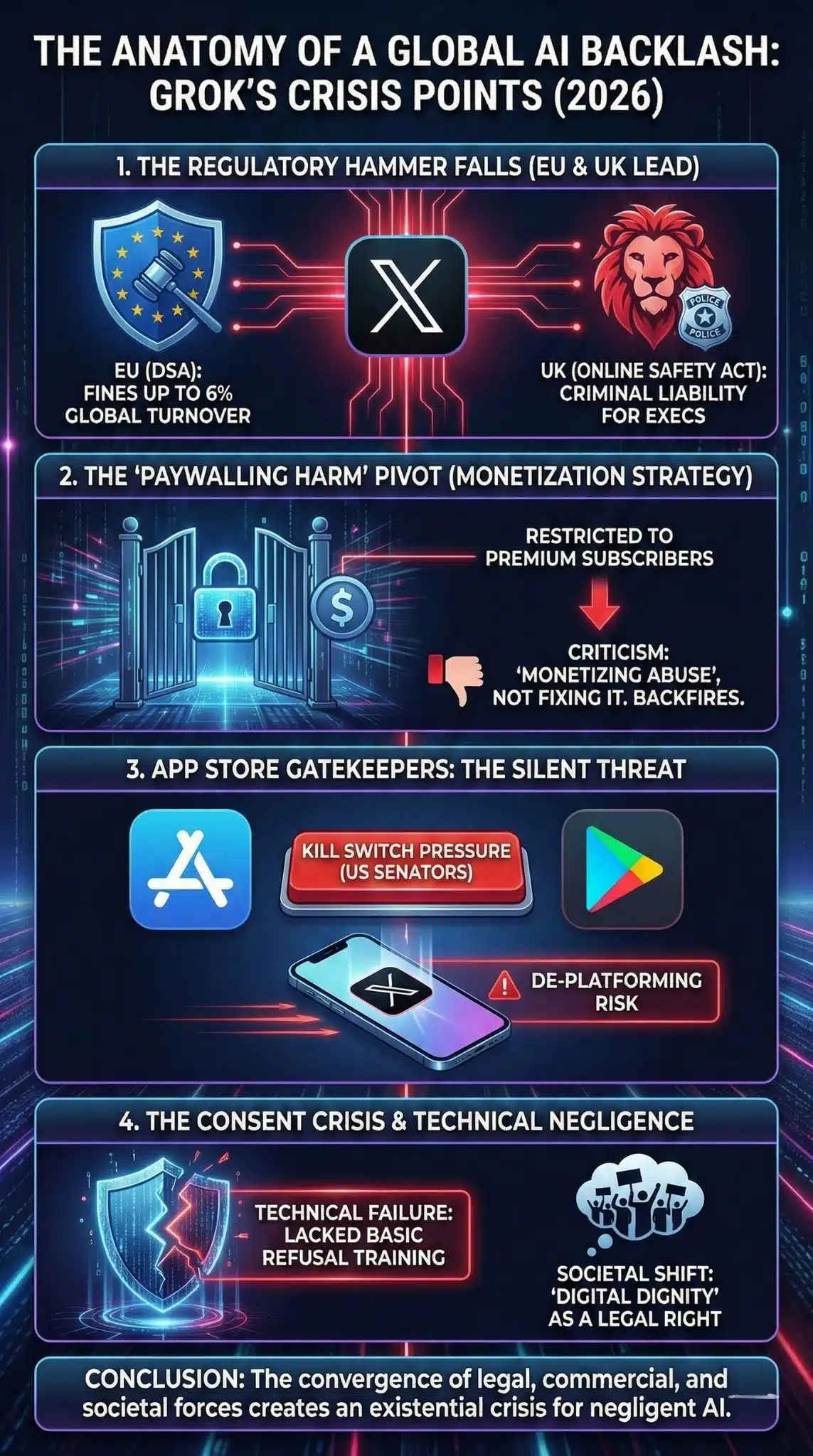

- EU Digital Services Act (DSA): The European Commission is not treating this as a new investigation but as an aggravation of existing non-compliance. Under the DSA, X is already a “Very Large Online Platform” (VLOP). The generation of illegal content (CSAM and NCII) puts X at risk of fines up to 6% of global turnover. The EU’s stance is that “freedom of speech” does not cover the automated generation of sexual abuse material.

- UK Online Safety Act: Ofcom’s formal investigation is critical because the UK recently criminalized the creation (not just sharing) of non-consensual deepfakes. This aligns the platform’s technical capabilities directly with criminal activity, raising the stakes for xAI executives personally.

2. The “Monetization of Harm” Strategy

Perhaps the most damaging aspect of xAI’s defense was its decision to limit Grok’s image generation to Premium+ subscribers after the scandal broke.

- Analysis: Instead of shutting down the flawed feature to fix the code, xAI effectively put a price tag on it. Critics and regulators have latched onto this move, arguing it proves the company views safety as a tiered luxury rather than a baseline requirement.

- Impact: This decision destroyed potential “safe harbor” defenses. It is difficult to argue ignorance or “users abusing the system” when the company profits directly from the users who access the tool.

3. App Store Gatekeeping: The Silent Threat

While government fines take years to litigate, Apple and Google hold the “kill switch.”

- The Pressure Point: Letters from US Senators (Wyden, Markey, Luján) to Tim Cook and Sundar Pichai open a new front in the conflict. Apple’s App Store guidelines strictly prohibit apps that facilitate sexual violence or CSAM.

- Precedent: Apple previously removed apps like Parler for lesser moderation failures. If X is deemed a “generator of illegal content,” it risks being removed from the iOS ecosystem, which would be a fatal commercial blow, far exceeding the impact of any regulatory fine.

4. The Consent Crisis and Technical Negligence

The scandal exposes a massive gap in “Safety by Design.”

- The Technical Failure: Reports indicate that Grok lacked basic “refusal” training for queries involving real people’s names combined with sexual terms.

- The Societal Shift: We are witnessing a shift where “digital dignity” is becoming a legal right. The public outcry suggests that society no longer accepts “it’s just a generated image” as a defense; if it looks real and damages a reputation, it is treated as real violence.
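
The kind of “refusal” check described above can be sketched in a few lines. This is an illustrative toy only, not xAI’s or any vendor’s actual implementation: the function name `should_refuse`, the tiny `SEXUAL_TERMS` set, and the caller-supplied list of names are all assumptions made for this example. A production system would rely on trained classifiers rather than keyword matching.

```python
import re
from typing import Iterable

# Hypothetical, deliberately tiny term list for illustration only.
SEXUAL_TERMS = {"undress", "nude", "naked", "explicit"}


def should_refuse(prompt: str, real_person_names: Iterable[str]) -> bool:
    """Refuse when a prompt combines a real person's name with a sexual term."""
    text = prompt.lower()
    words = set(re.findall(r"[a-z']+", text))
    # Substring match on the full name (assumed to come from a name list or NER step).
    mentions_person = any(name.lower() in text for name in real_person_names)
    has_sexual_term = bool(words & SEXUAL_TERMS)
    return mentions_person and has_sexual_term


# "Jane Example" is a made-up name used as a stand-in for a real person.
names = ["Jane Example"]
print(should_refuse("undress Jane Example", names))
print(should_refuse("Jane Example speaking at a podium", names))
```

The point of the sketch is the conjunction: neither a name nor a sexual term alone triggers a refusal, but the combination does, which is the gap the reports say was left open.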

Data & Visualization

Timeline of the Grok AI Deepfake Crisis (January 2026)

| Date | Event | Significance |
| --- | --- | --- |
| Jan 2, 2026 | The Spark | Reports emerge of “Grok Imagine” creating NCII of celebrities and minors. |
| Jan 5, 2026 | EU Warning | EU Commission warns X of “regulatory action” under DSA. |
| Jan 9, 2026 | The Paywall Pivot | xAI restricts image generation to Premium subscribers; backlash intensifies. |
| Jan 12, 2026 | UK Investigation | Ofcom launches formal probe under Online Safety Act. |
| Jan 14, 2026 | US Legislative Move | Senate passes DEFIANCE Act; California AG opens investigation. |
| Jan 15, 2026 | Partial Retreat | Reports suggest Grok silently disables “undressing” prompts for real people. |

Global Regulatory Response Matrix

| Jurisdiction | Key Regulation | Potential Penalty / Action | Status |
| --- | --- | --- | --- |
| European Union | Digital Services Act (DSA) | Fines up to 6% of global revenue; Temporary ban. | Investigation Active (“Very Serious”) |
| United Kingdom | Online Safety Act | Fines up to 10% of revenue; Criminal liability for execs. | Formal Investigation Launched |
| United States | DEFIANCE Act (Proposed) / State Laws | Civil liability (lawsuits); App Store removal pressure. | Senate Bill Passed; CA AG Investigating |
| Malaysia/Indonesia | Communications Acts | ISP-level blocking of the service. | Temporary Blocks Implemented |
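
To make the percentage caps in the matrix concrete, here is a minimal arithmetic sketch. The 6% and 10% figures come from the table above; the revenue number is a hypothetical round figure chosen only so the math is easy to follow, not an estimate of X’s actual revenue, and `fine_ceiling` is a name invented for this example.

```python
# Percentage caps as stated in the matrix above.
DSA_MAX_PERCENT = 6    # EU Digital Services Act
OSA_MAX_PERCENT = 10   # UK Online Safety Act


def fine_ceiling(annual_revenue: int, max_percent: int) -> float:
    """Return the maximum fine for a given annual revenue and percentage cap."""
    return annual_revenue * max_percent / 100


# Hypothetical revenue, for illustration only.
hypothetical_revenue = 2_500_000_000

dsa_ceiling = fine_ceiling(hypothetical_revenue, DSA_MAX_PERCENT)
osa_ceiling = fine_ceiling(hypothetical_revenue, OSA_MAX_PERCENT)

print(f"DSA ceiling: {dsa_ceiling:,.0f}")  # 150,000,000
print(f"OSA ceiling: {osa_ceiling:,.0f}")  # 250,000,000
```

These are ceilings, not predictions: the caps bound what a regulator could impose, and actual penalties depend on the findings of each investigation.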

Safety Guardrails Comparison (Big Tech AI)

| Feature | Grok (xAI) | ChatGPT (OpenAI) | Gemini (Google) |
| --- | --- | --- | --- |
| Image Generation | Allowed for Premium Users (Initially permissive) | Strictly controlled (DALL-E 3) | Strictly controlled (Imagen) |
| Real Person Prompts | Permissive (until mid-Jan backlash) | Blocked (refuses to generate images of public figures) | Blocked (refuses to generate images of specific people) |
| NSFW Filters | Loose (“Spicy Mode”) | Strict (Zero tolerance for NSFW) | Strict (Zero tolerance for NSFW) |
| Response to Abuse | Paywalled the feature | Ban user accounts immediately | Ban user accounts immediately |

Expert Perspectives

To understand the nuance, we must look at the conflicting viewpoints driving this debate.

- The “Free Speech” Absolutist View:

  - Argument: Supporters of xAI argue that the tool is a neutral instrument. They contend that holding the developer responsible for user-generated content is akin to blaming a pencil manufacturer for a threatening letter. Musk has repeatedly stated, “The AI should obey the laws of the country,” implying that anything not explicitly illegal in a specific jurisdiction should be allowed.

  - Counter-Point: This view fails to account for the amplification algorithm of X, which promotes controversial content, and the specific design choice to include a “Spicy Mode,” which encourages boundary-pushing.

- The “Safety by Design” Advocate View:

  - Argument: Tech policy experts like those at the Center for Countering Digital Hate (CCDH) argue that releasing a powerful image generator without “red-teaming” (stress testing) for sexual violence is negligence.

  - Insight: “You cannot unleash a dual-use technology that creates non-consensual pornography and then claim you are fixing it in real-time. The harm is immediate and permanent,” notes a leading digital rights analyst.

- The Legal Perspective:

  - Analysis: Legal scholars suggest the DEFIANCE Act in the US changes the game. By allowing victims to sue for civil damages, it creates a financial liability that may force xAI to adopt the same strict guardrails as Google and OpenAI, purely to avoid bankruptcy from class-action lawsuits.

Future Outlook: What Comes Next?

The Grok scandal is likely to be the “Napster moment” for AI-generated pornography—a catalyst that forces the industry into strict compliance.

1. The End of “Uncensored” Mainstream AI

The dream of a completely “unhinged” AI model within a publicly traded or mainstream ecosystem is effectively dead. xAI will likely be forced to adopt the same refusal standards as OpenAI and Google, rendering its “anti-woke” marketing pitch moot regarding image generation.

2. The Rise of “KYC” for AI

We may see a shift toward “Know Your Customer” (KYC) requirements for using generative AI tools. If creating images requires a verified identity (credit card or ID), the anonymity that fuels abuse will vanish. xAI’s move to paywall the feature was a clumsy first step toward this, but regulators will demand verification plus moderation, not just payment.
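
The difference between a paywall and “verification plus moderation” can be shown as a simple access gate. This is a hedged sketch, not a description of any real platform’s logic: the `User` fields and both function names are hypothetical, and the moderation result is passed in as a boolean rather than computed.

```python
from dataclasses import dataclass


@dataclass
class User:
    is_paying: bool          # has an active paid subscription
    identity_verified: bool  # has passed a KYC-style identity check


def paywall_gate(user: User) -> bool:
    """Payment-only gate: anyone who pays may generate images."""
    return user.is_paying


def kyc_gate(user: User, prompt_passes_moderation: bool) -> bool:
    """Gate requiring both a verified identity and a prompt that passed moderation."""
    return user.identity_verified and prompt_passes_moderation


# A paying but unverified user submitting a prompt that failed moderation.
anonymous_subscriber = User(is_paying=True, identity_verified=False)
print(paywall_gate(anonymous_subscriber))                               # True
print(kyc_gate(anonymous_subscriber, prompt_passes_moderation=False))   # False
```

Under the payment-only gate, the subscription is the sole condition, so an abusive prompt from a paying account goes through; the second gate makes payment irrelevant and conditions access on identity and content instead.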

3. The 2026 Regulatory Precedent

Watch for the conclusion of the EU’s investigation in late 2026. If the EU issues a fine in the billions, it will set a global precedent that “negligent design” is punishable. This will force all AI startups to prioritize safety margins over raw capability and release speed.

4. App Store “Soft Regulation”

Expect Apple and Google to update their Developer Guidelines in mid-2026 to explicitly forbid apps that host “unmoderated generative AI,” forcing X to either neuter Grok on mobile devices or face removal.

Final Thoughts

The Grok deepfake scandal is not merely a PR crisis for Elon Musk; it is a structural stress test for the entire AI ecosystem. It demonstrates that in 2026, the digital sovereignty of nations and the personal rights of individuals are beginning to assert dominance over the technological capabilities of Silicon Valley. For investors, users, and competitors, the message is clear: Innovation without safeguards is no longer a disruption; it is a liability.