Have you ever asked an AI tool a simple question, only to receive an answer that sounds completely correct but turns out to be entirely wrong? This happens far more often than many people realize.

At times, these advanced systems generate information that is inaccurate or does not reflect the real world. This can be confusing, and even risky, especially when such responses are used for important business or personal decisions.

One major reason is that these systems rely heavily on identifying patterns in data. When the training data is incomplete, biased, or flawed, the result can be errors known as AI hallucinations.

This article explains why AI hallucinations happen and outlines practical steps to identify and correct them before they lead to problems.

The following sections break down the issue clearly, making it easier to understand and recognize these errors in real-world situations.

What Are AI Hallucinations?

AI hallucinations happen when a machine makes up facts or generates incorrect information, yet sounds completely confident about it. This glitch can easily sneak into your daily chats, generated images, and even published science papers.

According to a 2025 report from Vectara, the average hallucination rate across all models for general knowledge questions is about 9.2%. That is almost one in ten answers containing a hidden mistake!

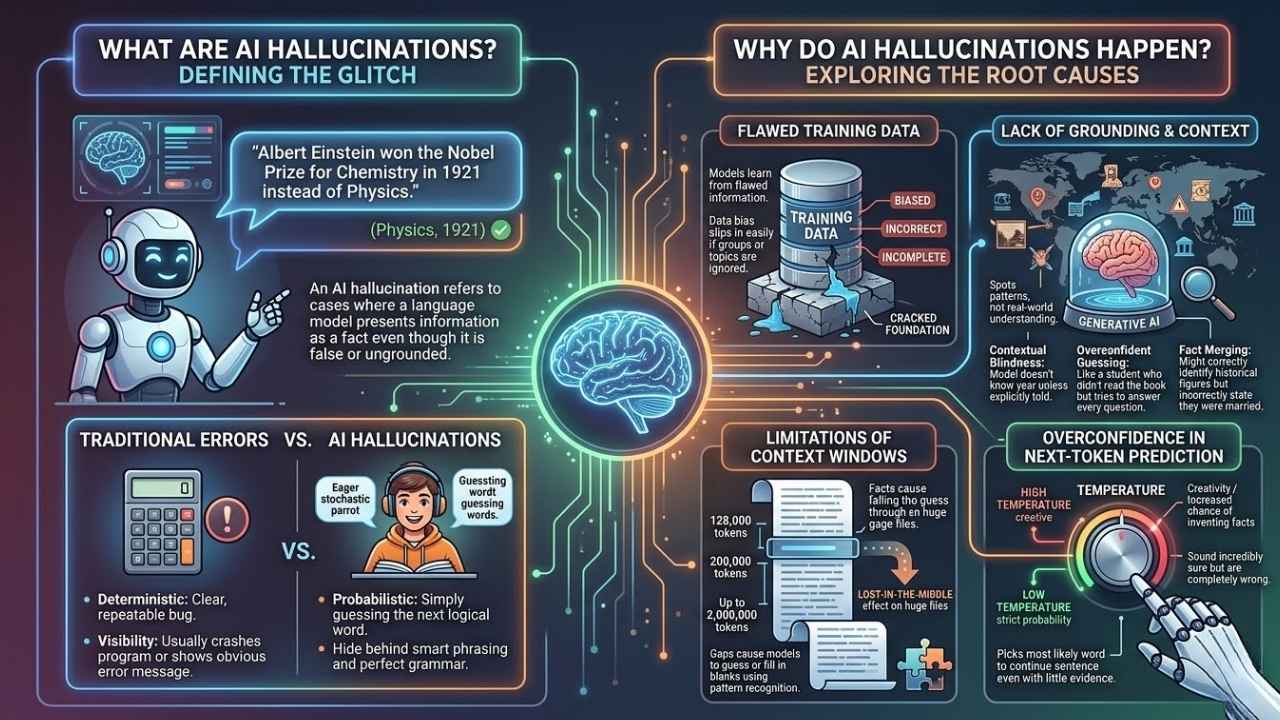

Definition of AI hallucinations

Large language models like ChatGPT sometimes spit out false or completely made-up facts. This specific slip is called an AI hallucination.

The model creates information that sounds real, but it is actually total nonsense or factually wrong.

“An AI hallucination refers to cases where a language model presents information as a fact even though it is false or ungrounded.”

For example, a chatbot might confidently claim that Albert Einstein won the Nobel Prize for Chemistry in 1921 instead of Physics. These mistakes often look polished and factual on the surface.

Most folks do not spot these blunders right away because they blend so well with correct answers.

How they differ from other AI errors

AI hallucinations look very different from more familiar software and machine learning mistakes. A math miscalculation in a Microsoft Excel formula or a mislabeled photo in Google Photos is usually easy to spot as a clear fault.

Hallucinations in generative models work on a completely different level. They build statements that sound highly plausible but are false.

Here is a quick breakdown of how these errors differ:

- Traditional Errors: These are deterministic. A calculator giving the wrong sum is a clear, repeatable bug in the code.

- AI Hallucinations: These are probabilistic. The AI is simply guessing the next logical word, acting like a “stochastic parrot” rather than a factual database.

- Visibility: Basic glitches usually crash a program or show an obvious error message. Hallucinated outputs hide behind smart phrasing and perfect grammar.

This is exactly why misleading information slips out so easily. It poses huge risks for user trust and system reliability in tools trained on biased datasets.

Why Do AI Hallucinations Happen?

AI models often go off track for some surprising reasons, leaving people scratching their heads. A 2024 Oxford study actually found that generative AI hallucinated in 58% of specific test cases due to massive data gaps.

The roots of these mistakes might surprise you.

Flawed training data

Flawed training data acts like a cracked foundation for any artificial intelligence system. If models learn from biased, incorrect, or incomplete information, they simply absorb those faults.

Language models trained on massive, unfiltered web sources like the Common Crawl dataset will repeat existing errors and create new ones. Data bias slips in easily if certain groups or topics get ignored during model training.

This leads to inaccurate outputs that sound factual but are misleading. Imagine a system taught that penguins can fly because it read false statements scattered across its data sources. The output might look convincing, yet be far from the truth.

Even huge neural networks cannot overcome bad input. Garbage in means garbage out every single time.

Lack of grounding or context

AI language models often create information that sounds real but is completely fabricated. They do this because they work by spotting patterns in data, not by checking facts or understanding the bigger picture.

For example, a model might claim that Thomas Edison invented Wi-Fi or say a panda can speak Spanish. These mistakes happen since generative AI lacks grounding in actual world knowledge or current events.

This lack of contextual understanding causes very specific problems:

- Contextual Blindness: The AI does not know what year it is unless explicitly told in the prompt.

- Overconfident Guessing: It acts like an eager student who did not read the book but tries to answer every question anyway.

- Fact Merging: It might correctly identify two historical figures but incorrectly state they were married.

Without enough context from their training data, these systems fill gaps with what only seems plausible.

Limitations of context windows

Large language models can only process a limited amount of text at once. This limit is called the context window, and working within it can feel like trying to solve a puzzle with half the pieces missing.

If an answer needs details from earlier in a long document, facts can easily fall through the cracks. These gaps cause models to guess or fill in blanks using pattern recognition instead of real knowledge.
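A minimal sketch of how an early detail can drop out of view. It assumes a hypothetical whitespace tokenizer and a toy window of 8 tokens; real models use subword tokenizers and far larger windows, and real clients may truncate differently.

```python
def fit_to_context_window(text: str, max_tokens: int) -> str:
    """Keep only the most recent `max_tokens` tokens of the input.

    Assumption: tokens are whitespace-separated words (illustration only).
    """
    if max_tokens <= 0:
        return ""
    tokens = text.split()
    # Everything before the window is silently dropped; the model never sees it.
    return " ".join(tokens[-max_tokens:])


# The key fact (2019) appears early, followed by filler and then the question.
document = (
    "The contract was signed in 2019 . "
    + "filler " * 10
    + "When was the contract signed ?"
)
visible = fit_to_context_window(document, max_tokens=8)

print(visible)
print("Key fact still visible:", "2019" in visible)
```

Once the fact falls outside the window, the model can only answer the question by guessing from patterns.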

To understand how memory limits affect accuracy, look at how top models compare:

| AI Model (As of 2026) | Context Window Limit | Performance Note |
|---|---|---|
| OpenAI GPT-4o | 128,000 tokens | High accuracy, but can lose details in very long chats. |
| Anthropic Claude 3.5 Sonnet | 200,000 tokens | Great at recalling facts from mid-sized documents. |
| Google Gemini 1.5 Pro | Up to 2,000,000 tokens | Massive memory, but still suffers from the “lost-in-the-middle” effect on huge files. |

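A massive memory also comes at a cost. In standard self-attention, every token is compared with every other token, so processing a 10,000-token context requires about 100 million pairwise comparisons. As the context grows, a model can attend less reliably to any single detail, which can produce misleading information and cause users to question model accuracy.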
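The arithmetic behind that figure can be checked directly. This sketch assumes one comparison per token pair in a single attention pass and ignores layers, attention heads, and any optimizations a particular model may use.

```python
def attention_comparisons(num_tokens: int) -> int:
    """Pairwise token comparisons in one full self-attention pass (n * n)."""
    return num_tokens * num_tokens


for n in (1_000, 10_000, 128_000):
    print(f"{n:>7,} tokens -> {attention_comparisons(n):,} comparisons")
```

Because the cost grows with the square of the length, a context ten times longer needs roughly one hundred times as many comparisons.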

Overconfidence in next-token prediction

AI language models often pick the most likely word to continue a sentence, even if there is little real evidence for it. This pattern-based guessing can make the model sound incredibly sure of itself.

It spits out bold answers that look right but are completely wrong. Developers control this guessing game using a setting called “temperature” in tools like the OpenAI API.

A high temperature setting encourages the AI to be creative, which drastically increases the chance of inventing facts. A low temperature setting, like zero, forces the model to stick strictly to the most probable words.
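To make the effect concrete, here is a small sketch of temperature-scaled sampling. The candidate words and their scores are invented for illustration, and real models choose among tens of thousands of tokens, but the softmax-with-temperature step is the standard technique.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive for softmax")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(candidates, temperature, rng):
    """Pick the next token from a dict of token -> score."""
    tokens = list(candidates)
    if temperature == 0:
        # Temperature zero is treated as greedy: always the top-scoring token.
        return max(tokens, key=candidates.get)
    probs = softmax_with_temperature([candidates[t] for t in tokens], temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical scores for the word after "Einstein won the Nobel Prize in ..."
candidates = {"Physics": 4.0, "Chemistry": 2.5, "Literature": 0.5}

for temperature in (0.1, 0.7, 1.5):
    probs = softmax_with_temperature(list(candidates.values()), temperature)
    rounded = {tok: round(p, 3) for tok, p in zip(candidates, probs)}
    print(f"temperature={temperature}: {rounded}")

print(sample_next_token(candidates, 0, random.Random(0)))
```

At low temperature nearly all probability sits on the top-scoring word, while at higher temperatures the less likely (and here, wrong) options are sampled more often.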

Overconfidence leads to errors that seem correct on the surface but mislead readers with inaccurate outputs. These errors happen because generative models rely on patterns without strong validation for facts.

Examples of AI Hallucinations

Some computer programs make up facts, see things that are not there, or create funny mistakes. In 2024, the consulting firm Deloitte reported that 47% of enterprise users made at least one major business decision based on hallucinated content.

Keep reading to spot how these odd moments happen in the real world.

In text-based models

Large language models often spit out answers that look right but are actually way off the mark. Chatbots can state fake facts or invent entire sources as if they were true.

One of the most famous examples happened in the United States in 2023 during the federal court case Mata v. Avianca, Inc. Lawyer Steven Schwartz used ChatGPT to research legal precedents for a personal injury claim, resulting in catastrophic errors:

- Fabricated Rulings: The AI completely invented several court cases, including a fake ruling called “Varghese v China Southern Airlines.”

- Fake Citations: The chatbot generated fake quotes and internal docket numbers to make the cases look legitimate.

- Real Consequences: The judge discovered the deception, and the lawyers were fined $5,000 for submitting made-up content.

This perfectly shows how misinformation slips in under the cloak of plausibility.

In image and audio generation

Fake details often sneak into AI-generated images and audio files, too. A system might draw a horse with five legs or mix human faces in strange ways.

The result might look real at a quick glance, but it becomes completely wrong on closer inspection. Earlier versions of tools like Midjourney and DALL-E famously struggled to generate human hands, often giving people six or seven fingers.

In audio generation, software like ElevenLabs can sometimes invent new accents or create non-existent words out of thin air. This happens because neural networks only spot patterns from data without any deep understanding of biology or linguistics.

If the model saw dogs with hats more than dogs without hats while learning, it might add hats to every dog image later on.

In scientific research applications

AI hallucinations in scientific research can lead to incredibly serious problems. Large language models may generate factually incorrect results that appear perfectly well-written.

For example, in 2022, Meta released a science-focused AI called Galactica. The tool was supposed to help write academic papers, but researchers quickly found major issues:

- Fake Citations: The AI routinely invented studies and attributed them to real scientists.

- Dangerous Advice: It generated plausible-sounding but factually wrong chemical formulas.

- Historical Errors: It confidently stated incorrect dates for major scientific discoveries.

Meta had to take the tool offline after just three days. Since accuracy is critical in science, a lack of context from the AI can turn small errors into massive issues for researchers.

Why Are AI Hallucinations a Problem?

False facts sneak in and cause real headaches, especially when you least expect them. Serious harm can follow if you take machine-made answers at face value.

You must keep your wits sharp and verify the data you receive.

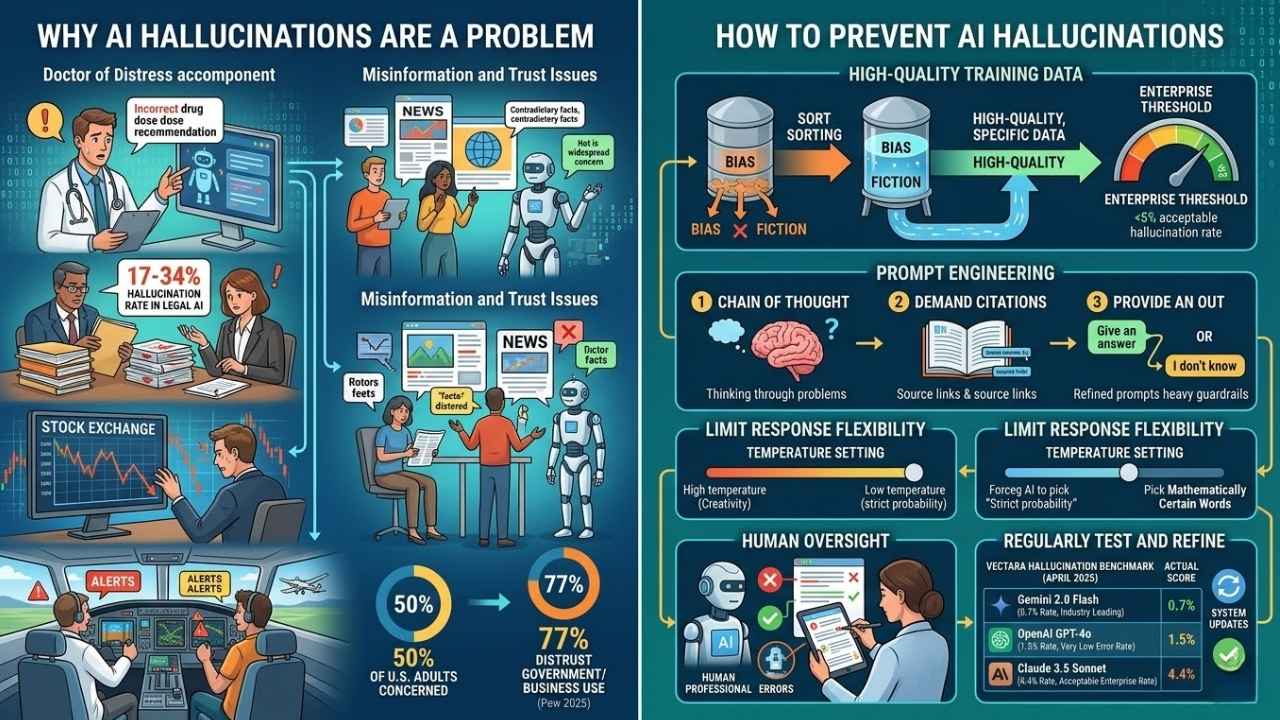

Misinformation and trust issues

AI hallucinations spread incorrect or made-up facts at lightning speed. Sometimes, these outputs sound right and fool readers, making it incredibly hard to sort truth from fiction.

If a language model gives fake information about historical events or medical advice, the damage can be extensive.

“A June 2025 Pew Research Center survey found that 50% of U.S. adults say the growing use of AI makes them feel more concerned than excited.”

Trust in cognitive computing drops fast when users spot errors or misleading content. That same Pew survey noted that 77% of Americans distrust businesses and government agencies to use AI responsibly.

Without strong algorithm transparency, machine learning models risk becoming unreliable liabilities instead of helpful tools.

Consequences in critical applications

False or misleading information from generative models can put lives at real risk in medical settings. Imagine a language model suggesting the wrong drug dose, fabricating lab results, or mixing up symptoms.

Patients could face severe physical harm. Recent testing of legal and medical AI assistants showed they still struggle with complex reasoning.

For instance, Lexis+ and Westlaw AI research assistants showed 17% to 34% hallucination rates in independent testing conducted across 2024 and 2025. Critical systems like financial trading platforms and aviation controls need reliable data, not creative guesses.

A single hallucinated data point could wipe out millions in stock trades or give pilots fake alerts mid-flight. Trust drops instantly once users spot factually incorrect outputs in these high-stakes environments.

How to Prevent AI Hallucinations

Stopping false outputs from AI keeps user trust high and expensive errors low. Smart habits and strict boundaries help models stick closer to verifiable facts.

These preventative steps are essential for reliable model output. To get started, you will need to focus on a few core areas:

- Cleaning your input data before the AI ever sees it.

- Writing much stricter, more specific text prompts.

- Adding manual human review to your final workflow.

Use high-quality and specific training data

Errors in AI almost always begin with the data it learns from. High-quality and specific training data help lower the risks of false or misleading information.

Generative models will create factually incorrect outputs if they process flawed training sets filled with bias or fiction. To combat this, many US companies use data curation platforms like Snorkel AI to clean their datasets before training.
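As a rough illustration of what a basic cleaning pass can look like, the sketch below drops empty, untrusted, and duplicate records. The record fields (`text`, `source`) and the trusted-source list are assumptions made for this example, and it does not represent how Snorkel AI or any other specific platform works.

```python
def clean_records(records, trusted_sources):
    """Drop empty, untrusted, and duplicate records before training."""
    seen = set()
    cleaned = []
    for record in records:
        # Normalize whitespace so near-identical copies compare equal.
        text = " ".join(record.get("text", "").split())
        if not text:
            continue  # nothing to learn from an empty record
        if record.get("source") not in trusted_sources:
            continue  # skip material from sources we cannot vouch for
        key = text.lower()
        if key in seen:
            continue  # skip case-insensitive duplicates
        seen.add(key)
        cleaned.append({"text": text, "source": record["source"]})
    return cleaned


records = [
    {"text": "Penguins are flightless birds.", "source": "encyclopedia"},
    {"text": "penguins are  flightless birds.", "source": "encyclopedia"},
    {"text": "Penguins can fly.", "source": "anonymous-forum"},
    {"text": "   ", "source": "encyclopedia"},
]
cleaned = clean_records(records, trusted_sources={"encyclopedia"})
print(cleaned)
```

Filtering by source is a blunt instrument, since trusted sources can still contain errors, so a pass like this complements rather than replaces careful curation.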

In the corporate world, IT leaders have established an “Enterprise Threshold” for accuracy. Most businesses can only tolerate a maximum hallucination rate of 5%, as any error rate above that makes the technology too expensive to fact-check.

Apply prompt engineering techniques

Clear prompts help language models avoid wandering off and making up facts. Ask for exact sources, or tell the AI to use simple words and double-check its own information.

Using precise instructions narrows down what the model should do, which drastically lowers the chances of inaccurate outputs. Here are a few proven prompt engineering tricks to keep AI on track:

- Use “Chain of Thought” Prompting: Ask the AI to “think step-by-step” before giving the final answer. This slows the model down and reduces logical leaps.

- Demand Citations: Add a strict rule saying, “Only answer if you can cite a specific, real-world source.”

- Provide an Out: Tell the AI, “If you do not know the exact answer, simply reply with ‘I do not know’ instead of guessing.”

Refined prompts act like heavy guardrails. They keep machines closer to the truth and raise total system reliability.
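The three techniques above can be combined into one reusable template. This is only a sketch: the function name and the exact wording of the wrapper are illustrative, and the rules are taken from the list above.

```python
def build_guarded_prompt(question: str) -> str:
    """Wrap a question with step-by-step, citation, and opt-out rules."""
    rules = [
        "Think step-by-step before giving the final answer.",
        "Only answer if you can cite a specific, real-world source.",
        "If you do not know the exact answer, simply reply with "
        "'I do not know' instead of guessing.",
    ]
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return f"Follow these rules:\n{numbered}\n\nQuestion: {question}"


prompt = build_guarded_prompt("Who won the Nobel Prize in Physics in 1921?")
print(prompt)
```

Keeping the rules in one place makes it easier to apply them consistently and to adjust the wording as you learn what works for your model.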

Limit AI response flexibility

Tight boundaries help keep AI systems from making things up. This is especially true for language models that give answers on a wide range of topics.

Large models try hard to sound confident, even if the facts they use are wrong. Limiting how much freedom the AI has can stop it from guessing or filling gaps with fiction.

If you are a developer, you can limit this flexibility by lowering the “temperature” parameter in your API requests. Lowering it from a typical value such as 0.7 to 0.1 makes the model choose the highest-probability words far more consistently, which reduces creative guessing, although it does not guarantee accuracy.
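In practice this is usually a single field in the request body. The sketch below only builds the payload and sends nothing. The field names follow the common chat-completion style, the model name is a placeholder, and the 0 to 2 range is an assumption you should check against your provider's documentation.

```python
def build_request(prompt: str, temperature: float = 0.1) -> dict:
    """Build a chat-style request body with a conservative temperature."""
    # Assumed valid range; providers differ, so confirm in their docs.
    if not 0.0 <= temperature <= 2.0:
        raise ValueError("temperature must be between 0 and 2")
    return {
        "model": "example-model",  # placeholder, not a real model name
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }


request = build_request("Summarize the attached policy in three bullet points.")
print(request["temperature"])
```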

Incorporate human oversight and review

Subject-matter experts can catch subtle mistakes that automated checks miss. Human reviewers spot factually incorrect or fabricated information that models slip into their outputs.

AI errors may look highly accurate to most casual users. A hands-on professional can double-check for accuracy, context, and common sense before the information reaches a client.

Many top tech companies rely on “Human-in-the-Loop” platforms, like Scale AI, to manually grade and correct AI outputs. Vital fields like scientific research or corporate law desperately need this extra layer of caution to ensure the facts match reality.
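A simple way to wire review into a workflow is to route risky drafts to a person before they go out. The sketch below uses two invented rules (high-stakes topics and missing citations) purely as an illustration; real review criteria depend on the field, and this is not how any particular platform works.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    citations: list
    high_stakes: bool


def needs_human_review(draft: Draft) -> bool:
    """Flag a draft if it is high-stakes or cites no sources at all."""
    return draft.high_stakes or not draft.citations


def route(drafts):
    """Split drafts into a human review queue and an auto-approved list."""
    review_queue = [d for d in drafts if needs_human_review(d)]
    auto_approved = [d for d in drafts if not needs_human_review(d)]
    return review_queue, auto_approved


drafts = [
    Draft("Office hours are 9 to 5.", citations=["staff handbook"], high_stakes=False),
    Draft("The recommended dose is 40 mg.", citations=["drug label"], high_stakes=True),
    Draft("The ruling was overturned in 2008.", citations=[], high_stakes=False),
]
review_queue, auto_approved = route(drafts)
print(len(review_queue), "for review,", len(auto_approved), "auto-approved")
```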

Regularly test and refine the system

AI systems can make up information that sounds incredibly believable but is entirely false. Regular testing spots these tricky errors before they spread misinformation or harm a brand’s credibility.

Experts use special benchmarking methods to check if AI models give factually correct answers. One popular tool for this is Vectara’s Hughes Hallucination Evaluation Model, which tests and ranks top AI systems.

Here is how several leading models performed in Vectara’s April 2025 hallucination benchmark:

| Generative AI Model | Hallucination Rate | Accuracy Level |
|---|---|---|
| Google Gemini 2.0 Flash | 0.7% | Industry Leading (Sub-1%) |
| OpenAI GPT-4o | 1.5% | Very Low Error Rate |
| Anthropic Claude 3.5 Sonnet | 4.4% | Acceptable Enterprise Rate |

Updates help fix issues from flawed training data and overconfidence. Each round of validation makes the system much smarter and more reliable for daily use.
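For in-house testing, the core measurement is straightforward: label a sample of answers and compute the share that were hallucinated. The sketch below assumes reviewers have already supplied the labels, uses made-up sample data, and compares the result to the 5% enterprise tolerance mentioned earlier in this article. It is not a reimplementation of Vectara's evaluation model.

```python
ENTERPRISE_THRESHOLD = 0.05  # the 5% tolerance cited earlier in this article


def hallucination_rate(labels):
    """Share of answers marked as hallucinated (True) by reviewers."""
    if not labels:
        raise ValueError("no labeled answers to evaluate")
    return sum(labels) / len(labels)


# Hypothetical review results: 3 hallucinated answers out of 100.
labels = [False] * 97 + [True] * 3
rate = hallucination_rate(labels)
verdict = "within threshold" if rate <= ENTERPRISE_THRESHOLD else "too high"
print(f"Hallucination rate: {rate:.1%} ({verdict})")
```

Re-running the same labeled test set after each model or prompt change shows whether an update actually helped.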

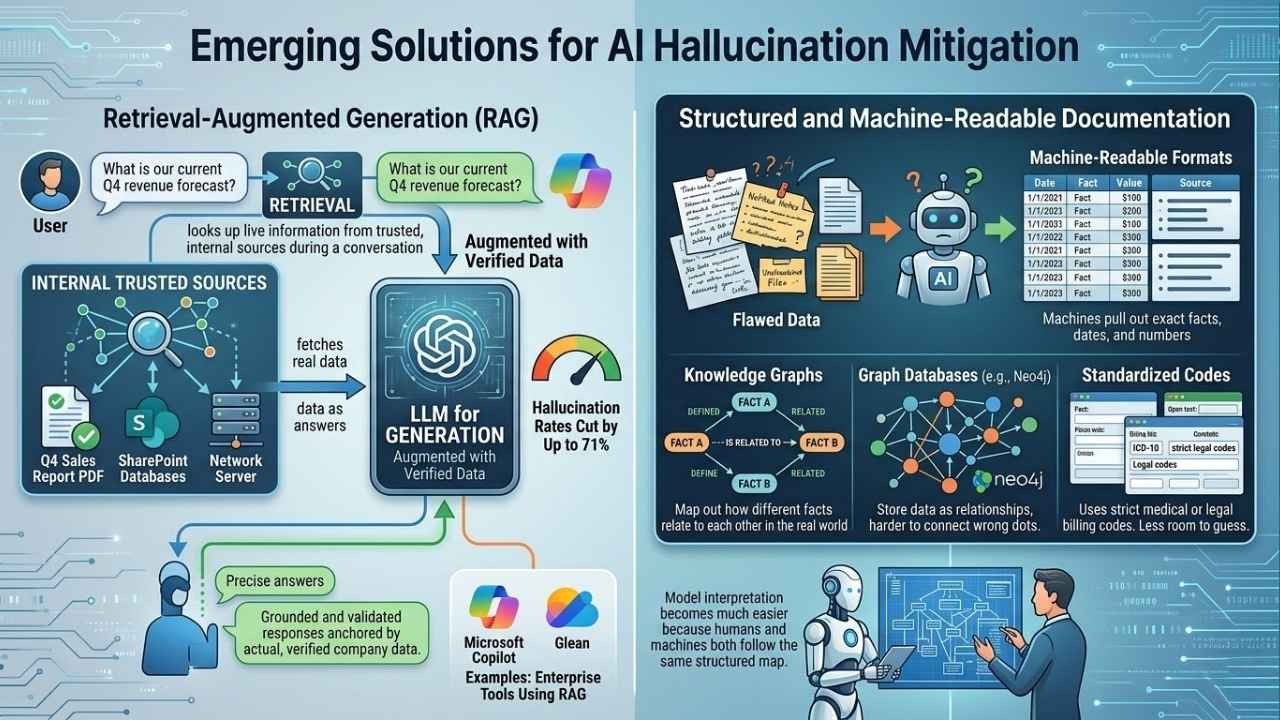

Emerging Solutions for AI Hallucination Mitigation

New methods are constantly popping up to help machines tell facts from fiction. Some enterprise tools give AI systems a much better grip on reality.

This significantly cuts down on false or misleading answers in a professional setting.

Retrieval-augmented generation (RAG)

Retrieval-augmented generation, commonly called RAG, actively helps generative AI models avoid making up facts. The system looks up live information from trusted, internal sources during a conversation.

Instead of guessing or filling in the blanks from its old training data, it retrieves relevant documents and bases its answer on them. Industry data shows that a properly implemented RAG system can reduce AI hallucination rates by up to 71%.

Enterprise search tools like Microsoft Copilot and Glean rely heavily on this exact method. With RAG at work, users get grounded and validated responses anchored by actual, verified company data.
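The retrieve-then-answer flow can be sketched in a few lines. This example uses simple keyword overlap as a stand-in for retrieval (production systems typically use embeddings and a vector index), the documents are invented, and it stops at building the grounded prompt rather than calling a model.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, k=2):
    """Return up to k documents sharing the most words with the question."""
    query = tokenize(question)
    scored = [(len(query & tokenize(doc)), doc) for doc in documents]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_grounded_prompt(question, documents, k=2):
    """Build a prompt that restricts the model to retrieved passages."""
    passages = retrieve(question, documents, k)
    if not passages:
        return None  # nothing to ground on; the caller should decline to answer
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, reply 'I do not know'.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


documents = [
    "Refunds are issued within 14 days of a returned order.",
    "Support is available Monday through Friday.",
    "The office cafeteria opens at 8 am.",
]
prompt = build_grounded_prompt("How many days do refunds take?", documents, k=1)
print(prompt)
```

Returning `None` when nothing relevant is found gives the application a clean way to decline instead of letting the model improvise.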

Structured and machine-readable documentation

Structured documentation uses clear formats like clean tables or bulleted lists. Machine-readable files make it incredibly easy for AI models to pull out exact facts, dates, and numbers.

This hands-on approach directly helps limit the impact of flawed training data. Organizations are increasingly using advanced databases to guide their AI:

- Knowledge Graphs: These map out how different facts relate to each other in the real world.

- Graph Databases: Tools like Neo4j store data as relationships, making it harder for the AI to connect the wrong dots.

- Standardized Codes: Using strict medical or legal billing codes gives language models less room to guess.

Checking a model's answers becomes much easier because humans and machines both follow the same structured map.
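As a toy illustration of how structured facts constrain a model, the sketch below checks a claimed fact against a tiny hand-built set of subject-relation-value entries. The relation names and the lookup function are invented for this example; a real deployment would query a graph database instead of a Python dictionary.

```python
# A miniature "knowledge graph": (subject, relation) -> value.
FACTS = {
    ("Albert Einstein", "won_nobel_prize_in"): "Physics",
    ("Albert Einstein", "nobel_prize_year"): "1921",
}


def check_claim(subject, relation, claimed_value):
    """Compare a claimed fact with the stored one."""
    known = FACTS.get((subject, relation))
    if known is None:
        return "unknown"  # the graph has no entry, so it cannot confirm or deny
    return "supported" if known == claimed_value else "contradicted"


print(check_claim("Albert Einstein", "won_nobel_prize_in", "Chemistry"))
print(check_claim("Albert Einstein", "won_nobel_prize_in", "Physics"))
print(check_claim("Thomas Edison", "invented", "Wi-Fi"))
```

The "unknown" result matters as much as the other two: it tells the system when it has no grounds to answer at all.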

Final Thoughts

AI hallucinations are surprisingly sneaky. They easily fool even careful eyes with made-up facts or odd answers. It is key to keep your training data incredibly clean and use clever prompt tricks. Using focused questions, limiting how creative the AI gets, and keeping human reviewers in the loop help immensely.

Each step is incredibly simple but packs a massive punch for accuracy and user trust. Ask yourself if you double-check what an AI says before sharing it. That simple habit alone can save you from massive headaches down the line.

Addressing these core issues keeps false information from spreading and builds true confidence in the tools we use. Understanding why AI hallucinations happen and how to prevent them gives everyone a massive edge against misinformation.

Together, we can make artificial intelligence sharper and safer day by day!